Claude's ID Check Stirs Privacy Fears and Account Lockouts

AI Giant Tightens Security

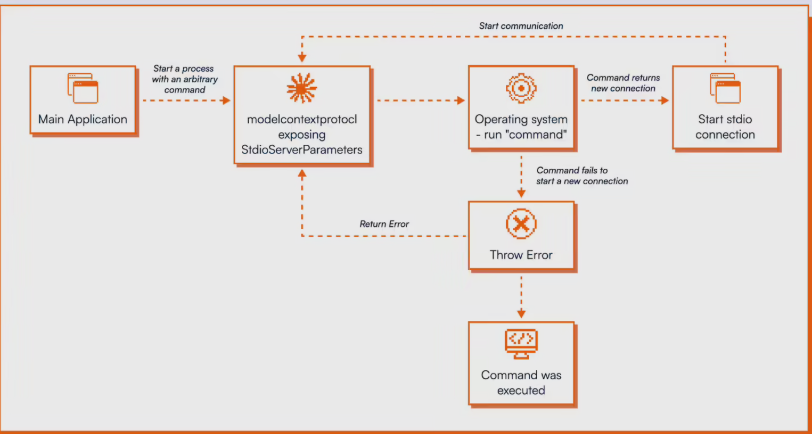

Anthropic has rolled out a controversial identity verification system for its Claude AI, requiring users to submit real-time photographs of themselves holding a physical government-issued document such as a passport or driver's license. Unlike typical online checks, the system rejects digital copies and scans, so users must physically pose with their IDs. The verification process, handled by third-party firm Persona, typically completes within five minutes.

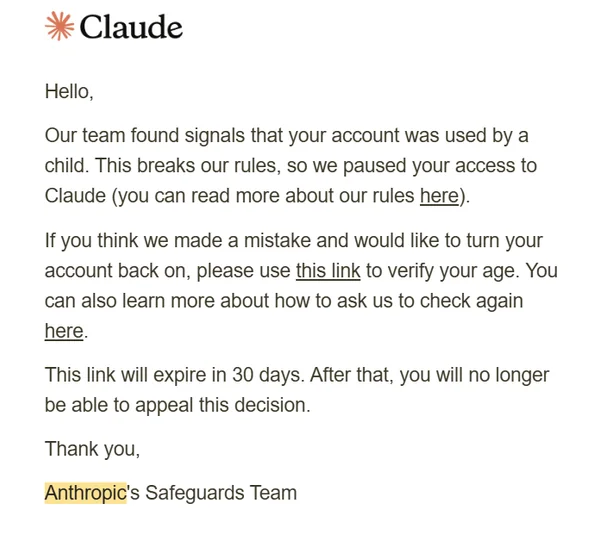

Verification or Suspension Warning?

What began as a security measure now has users worried that verification could lead to account termination. Claude's FAQ lists the reasons for suspension: repeated policy violations, access from unsupported regions, terms of service breaches, and, notably, being under 18.

Privacy concerns compound the issue. Persona's policy reveals data may be shared with 17 subcontractors for "anti-fraud improvements." This broad data-sharing approach leaves many wondering exactly who can access their sensitive identity documents.

The Teen Developer Dilemma

The age restriction hit particularly hard in the developer community. One user, llm_nerd, shared how his 15-year-old son - a working game developer earning more than his father - lost access to Claude Max despite paying for the service. Anthropic's refund notice stated simply: "We detected that your account was used by a child."

Ironically, when questioned about the policy, Claude's own Opus 4.6 model called static ID photos the "weakest link" in security, suggesting the company already employs robust verification through payment methods, behavior monitoring, and content analysis.

Industry Standards Questioned

While OpenAI and Google's Gemini set minimum ages at 13, Anthropic's stricter 18+ policy stands out. Some users joke that this creates perverse incentives - why pay when pretending to be a minor might grant free access? The debate continues as users weigh security against accessibility, especially for young tech talents caught in the crossfire.

Key Points:

- 📸 Live ID Photos Required: Claude demands real-time photos of users holding physical IDs, raising privacy concerns

- 🔒 Verification Backlash: Many users report account suspensions following compliance

- 👦 Age Limit Controversy: 18+ restriction excludes teen developers using the tool professionally

- 🤖 AI's Own Critique: Claude's model questions the effectiveness of static ID verification