AntBaiLing Unveils Efficient AI Model Ring-mini-sparse-2.0-exp

AntBaiLing Releases Breakthrough AI Model for Efficient Long-Sequence Processing

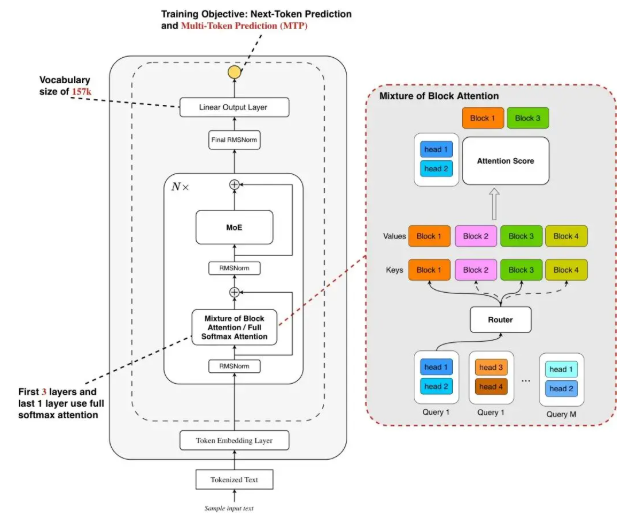

The AntBaiLing research team has announced the open-source release of Ring-mini-sparse-2.0-exp, an efficient inference model built on the Ling2.0 architecture. The model targets long-sequence decoding and uses a sparse attention mechanism to reduce its cost.

Technical Innovations

The architecture combines two approaches:

- High sparsity ratio Mixture of Experts (MoE) structure

- Novel sparse attention mechanism

According to the team, co-optimizing the architecture with the inference framework yields the following results:

- Nearly 3× higher throughput than the previous Ring-mini-2.0 model on long-sequence reasoning tasks

- Maintains state-of-the-art (SOTA) performance across multiple challenging reasoning benchmarks

The team highlights the model's strengths in:

- Long-context processing

- Efficient reasoning

- Lightweight deployment scenarios

Architectural Breakthroughs

The Ling2.0Sparse architecture addresses two critical trends in large language model development:

- Context length expansion

- Test-time scaling

Key technical implementations include:

- A design inspired by Mixture of Block Attention (MoBA)

- Block-wise sparse attention that partitions the input Key/Value tensors into blocks

- Top-k block selection performed per query along the head dimension, with softmax computed only over the selected blocks

- Selection results shared across the query heads within each Grouped Query Attention (GQA) group
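The block selection described above can be illustrated with a minimal NumPy sketch of a single decode step. This is not the team's implementation: the function name, the mean-pooled block representative (borrowed from the MoBA idea), and the choice to sum scores across a group's query heads to obtain one shared selection are all assumptions made for illustration.

```python
import numpy as np

def block_sparse_gqa_attention(q, k, v, block_size, top_k):
    """Illustrative single-step block-sparse attention with GQA-shared selection.

    q:    (n_kv_heads, group_size, d) queries, grouped by the KV head they share
    k, v: (n_kv_heads, seq_len, d); seq_len is assumed divisible by block_size
    Returns (output, chosen_block_indices).
    """
    n_kv, group, d = q.shape
    seq_len = k.shape[1]
    n_blocks = seq_len // block_size
    out = np.zeros_like(q)
    chosen = np.zeros((n_kv, top_k), dtype=int)

    for h in range(n_kv):
        k_blocks = k[h].reshape(n_blocks, block_size, d)
        v_blocks = v[h].reshape(n_blocks, block_size, d)

        # Assumption: each block is summarized by its mean-pooled key.
        centroids = k_blocks.mean(axis=1)                 # (n_blocks, d)

        # Assumption: scores are summed over the group's query heads,
        # so every head in the group shares one top-k selection.
        scores = (q[h] @ centroids.T).sum(axis=0)         # (n_blocks,)
        idx = np.sort(np.argsort(scores)[-top_k:])
        chosen[h] = idx

        # Each selected block is gathered once and reused by all heads in the group.
        k_sel = k_blocks[idx].reshape(-1, d)
        v_sel = v_blocks[idx].reshape(-1, d)

        # Softmax runs only over the selected blocks.
        logits = q[h] @ k_sel.T / np.sqrt(d)              # (group, top_k * block_size)
        logits -= logits.max(axis=-1, keepdims=True)
        weights = np.exp(logits)
        weights /= weights.sum(axis=-1, keepdims=True)
        out[h] = weights @ v_sel

    return out, chosen

# Hypothetical sizes, chosen only to exercise the function.
rng = np.random.default_rng(0)
q = rng.standard_normal((2, 4, 8))
k = rng.standard_normal((2, 32, 8))
v = rng.standard_normal((2, 32, 8))
out, chosen = block_sparse_gqa_attention(q, k, v, block_size=4, top_k=2)
```

When `top_k` equals the number of blocks, the sketch reduces to ordinary dense attention, which is a convenient sanity check.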

The team reports that these design choices reduce:

- Computational cost, because softmax is computed only over the selected blocks

- I/O overhead, because shared block selection lets a single block read serve every query head in a group
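To make the two savings concrete, the following back-of-the-envelope sketch counts how many key positions enter the softmax and how many Key/Value block reads one decode step needs. The function name and all numbers in the usage example are hypothetical and are not the model's published configuration; the unshared figure is an upper bound that assumes every query head picks entirely different blocks.

```python
def decode_step_cost(seq_len, block_size, top_k, n_q_heads, n_kv_heads):
    """Rough per-step cost counts for block-sparse attention (illustrative only)."""
    n_blocks = seq_len // block_size
    selected = min(top_k, n_blocks)
    attended = selected * block_size
    return {
        # Share of key positions that take part in the softmax.
        "softmax_fraction": attended / seq_len,
        # One read per selected block per KV head when the group shares its selection.
        "block_reads_shared": n_kv_heads * selected,
        # Upper bound if every query head selected its own blocks.
        "block_reads_unshared": n_q_heads * selected,
    }

# Hypothetical configuration: 64K context, 128-token blocks, top-8 selection,
# 16 query heads sharing 4 KV heads.
cost = decode_step_cost(seq_len=65536, block_size=128, top_k=8,
                        n_q_heads=16, n_kv_heads=4)
```

Under these assumed numbers, each query attends to 1,024 of 65,536 positions (about 1.6%), and sharing the selection within a group cuts block reads from at most 128 to 32.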

The model is now available on GitHub for community access and research.

Key Points

🌟 Performance: Delivers nearly 3× the throughput of Ring-mini-2.0 on long-sequence reasoning tasks while maintaining benchmark performance

🔍 Innovation: A MoBA-inspired block-wise sparse attention mechanism reduces computation and I/O during long-sequence decoding

📥 Accessibility: Open-source availability fosters community adoption and further development