AI Architecture Debate: Mistral Claims Influence Over DeepSeek's Design

AI Architecture Debate Heats Up Between Mistral and DeepSeek

The AI world is buzzing with an architectural dispute that reads like tech industry drama. Arthur Mensch, CEO of French AI company Mistral (often called Europe's answer to OpenAI), dropped a bombshell in a recent interview: China's powerful open-source model DeepSeek-V3 allegedly builds upon Mistral's architectural foundations.

The Claim That Started It All

Mensch pointed specifically to Mistral's early 2024 release of Mixtral, its sparse mixture-of-experts (MoE) model, as the supposed inspiration for DeepSeek's later versions. "They adopted the same architecture," he stated matter-of-factly.

The tech community reacted swiftly, and skeptically. Developers began digging through research papers on arXiv and uncovered details that challenge Mensch's narrative.

The timing tells an interesting story: Mixtral's paper and DeepSeek's MoE paper were posted just three days apart. With so little time between them, it is difficult to establish that either influenced the other.

Architectural Differences Emerge

While both systems use sparse mixture-of-experts approaches, their implementations diverge significantly:

- Mixtral focused primarily on engineering optimizations

- DeepSeek substantially reworked the underlying algorithm

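DeepSeek introduced new mechanisms such as "fine-grained expert segmentation" (splitting experts into many smaller ones and activating more of them per token) and "shared experts" (a small set of experts that process every token). This is a fundamentally different approach from Mixtral's simpler flat design, in which a router picks two of eight equally sized experts for each token.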
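To make the contrast concrete, here is a toy Python sketch of the two routing layouts. It is an illustration under stated assumptions, not code from either company: the function names, the scalar "experts", the random router weights and the 1-shared/31-routed/top-7 configuration are hypothetical choices for this example. Only the flat eight-experts, two-active layout follows Mixtral's published design. Real layers use feed-forward networks, batched tensors and load-balancing losses, and later DeepSeek versions change the gating function.

```python
import math
import random

def softmax(values):
    # Numerically stable softmax over a list of floats.
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(scores, k):
    # Indices of the k largest scores, highest first.
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def make_expert(scale):
    # Hypothetical toy "expert": elementwise scaling standing in for a feed-forward network.
    return lambda x: [scale * v for v in x]

def flat_moe(x, experts, router, k):
    """Mixtral-style flat routing: pick top-k of N experts, softmax over the chosen logits only."""
    scores = [dot(w, x) for w in router]
    chosen = top_k(scores, k)
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(x)
    for g, i in zip(gates, chosen):
        out = [o + g * v for o, v in zip(out, experts[i](x))]
    return out, chosen

def shared_plus_routed_moe(x, shared, routed, router, k):
    """DeepSeekMoE-style sketch: shared experts always run; top-k routed experts are gated.
    Affinities are a softmax over ALL routed experts (no renormalization); residual omitted."""
    out = [0.0] * len(x)
    for expert in shared:
        out = [o + v for o, v in zip(out, expert(x))]
    affinities = softmax([dot(w, x) for w in router])
    chosen = top_k(affinities, k)
    for i in chosen:
        out = [o + affinities[i] * v for o, v in zip(out, routed[i](x))]
    return out, chosen

rng = random.Random(0)
dim = 4
x = [0.5, -1.0, 0.25, 2.0]

# Flat layout (Mixtral-like): 8 experts, 2 active per token.
flat_experts = [make_expert(rng.uniform(0.5, 1.5)) for _ in range(8)]
flat_router = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(8)]
y_flat, flat_chosen = flat_moe(x, flat_experts, flat_router, k=2)

# Fine-grained + shared layout (assumed numbers): think of each of the 8 experts as split
# into 4 smaller ones (32 total), with 1 isolated as a shared expert and 7 of the other 31
# routed, so 8 small experts run per token. Expert size itself is not modeled here.
shared_experts = [make_expert(1.0)]
routed_experts = [make_expert(rng.uniform(0.5, 1.5)) for _ in range(31)]
routed_router = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(31)]
y_ds, ds_chosen = shared_plus_routed_moe(x, shared_experts, routed_experts, routed_router, k=7)

print("flat: active experts", flat_chosen)
print("shared+routed: 1 shared +", len(ds_chosen), "routed:", ds_chosen)
```

The point of the sketch is structural. In the flat layout every token competes for a few large experts. In the shared-plus-routed layout, common knowledge can sit in the always-on shared expert while many small routed experts specialize.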

Plot Twist: Who Inspired Whom?

The controversy took an unexpected turn when technical experts pointed to what looks like influence running in the opposite direction. Online commentators noticed striking similarities between:

- Mistral Large 3 (released in late 2025)

- DeepSeek-V3, including innovations such as Multi-head Latent Attention (MLA)

The observation led some to joke that Mistral was attempting to "rewrite history" as its lead in MoE architecture development slipped.

Open Source Philosophy vs Competitive Reality

The debate touches on fundamental questions about innovation in open-source environments. Mensch himself acknowledged earlier in his interview that open-source progress often means "continuous improvement based on each other's work."

Yet competition remains fierce:

- DeepSeek reportedly prepares a major new model release timed for Chinese New Year 2026

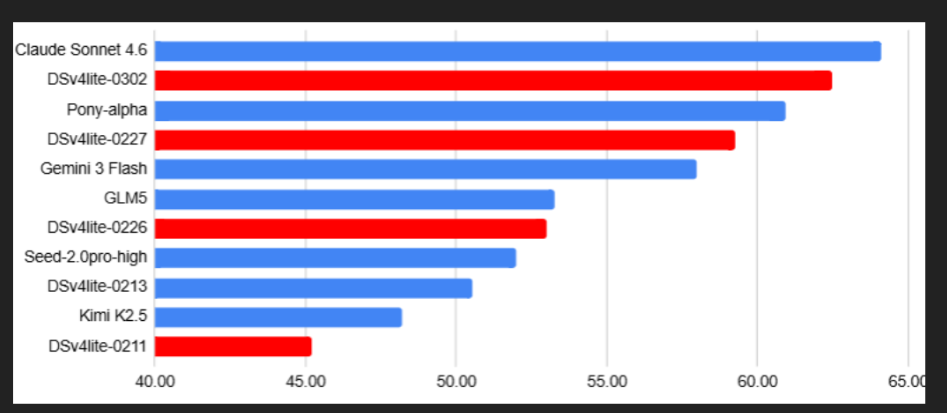

- Mistral continues updating its Devstral family, vying for top position in open-source programming intelligence

The AI community is watching closely as these developments unfold, a reminder that even in collaborative spaces, attribution matters.

Key Points:

- Timing Questionable: Papers published just days apart complicate influence claims

- Design Differences: Core architectural approaches show significant divergence

- Potential Reversal: Evidence suggests later Mistral models may have borrowed from DeepSeek innovations

- Industry Impact: Competition heats up as both companies prepare new releases