DeepSeek V4 Lite: The Compact AI Model Making Waves

DeepSeek V4 Lite: Small Size, Big Impact

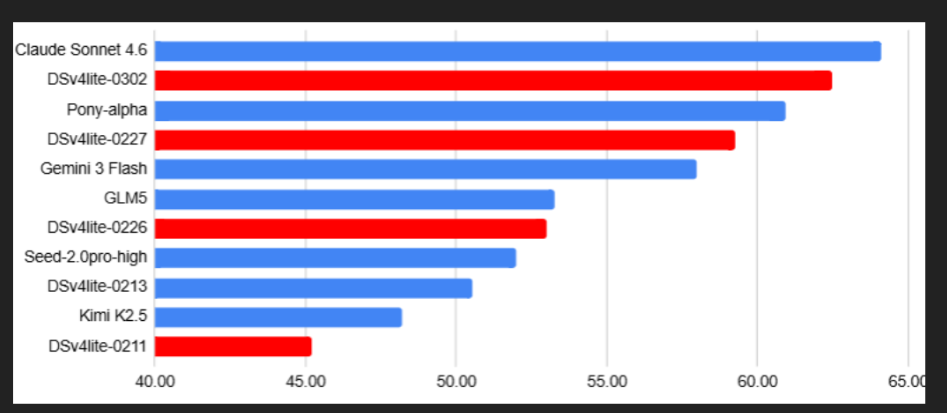

The AI world has a new dark horse. DeepSeek V4 Lite, initially positioned as a 'preliminary' version of the upcoming DeepSeek V4 model, has turned out to be far more capable than its billing suggested. What began as a specialized tool for processing long documents (up to 1 million tokens) has become a surprisingly capable general-purpose model through a series of quiet but significant updates.

From Underdog to Frontrunner

When it was first released in mid-February, V4 Lite drew modest attention for its context-handling abilities but little else. That changed after the late-February updates. Tech experts testing the model began reporting performance that rivaled much larger international models, particularly in programming and creative tasks, areas where Chinese models have traditionally lagged.

"The improvements in code generation and front-end development capabilities were immediately noticeable," said one developer who asked not to be named. "But what really surprised me was how natural its aesthetic judgments became; it went from producing serviceable designs to genuinely polished ones almost overnight."

Punching Above Its Weight

At approximately 200 billion parameters, V4 Lite is considerably smaller than industry leaders such as Claude 3.5 Sonnet or GPT-4 Turbo, whose sizes have not been officially disclosed but are widely speculated to exceed 1 trillion parameters. Yet benchmark tests suggest it now delivers comparable results in many key areas, an achievement that is resetting expectations about what 'small' models can do.

Industry analysts point to DeepSeek's technical innovations as the likely explanation. Rather than simply scaling up like most competitors, the company appears to have found more efficient ways to train and structure its model, though it has said little about the specifics.

What This Means for AI Development

The implications extend beyond one company's success. If these performance gains hold up under scrutiny:

- They challenge the prevailing assumption that bigger always means better in AI

- They demonstrate that Chinese tech firms can innovate rather than just follow Western leaders

- They suggest we may be entering an era of more efficient, specialized models rather than monolithic giants

Perhaps most excitingly, if this is what the 'Lite' version can do, expectations are running high for the full DeepSeek V4 release later this year.

Key Points:

- Compact Powerhouse: Delivers top-tier performance with just ~200B parameters

- Stealth Upgrades: Late-February updates markedly improved coding and creative abilities

- New Benchmark: Now considered among China's most capable AI models

- Future Promise: The full V4 release could significantly impact the global AI landscape