Ant Group Dominates AI Detection Challenge with Dual Wins

Ant Group Breaks New Ground in AI-Generated Content Detection

Ant Group took first place in two tracks of this year's CVPR NTIRE Image Detection Challenge: "Robustness Sample Testing" and "Face Enhancement Anomaly Detection." The wins mark progress in detecting AI-generated content, a growing concern as synthetic media becomes increasingly sophisticated.

The Deepfake Detection Arms Race

As AI-generated images and videos reach near-perfect realism, the challenge of distinguishing authentic content from fabrications has become critical. "Current detection methods often fail when faced with real-world conditions or the rapid evolution of multimodal models," explains a team spokesperson. The CVPR challenge specifically tested models against these pain points, requiring high accuracy even with unknown generation methods and degraded image quality.

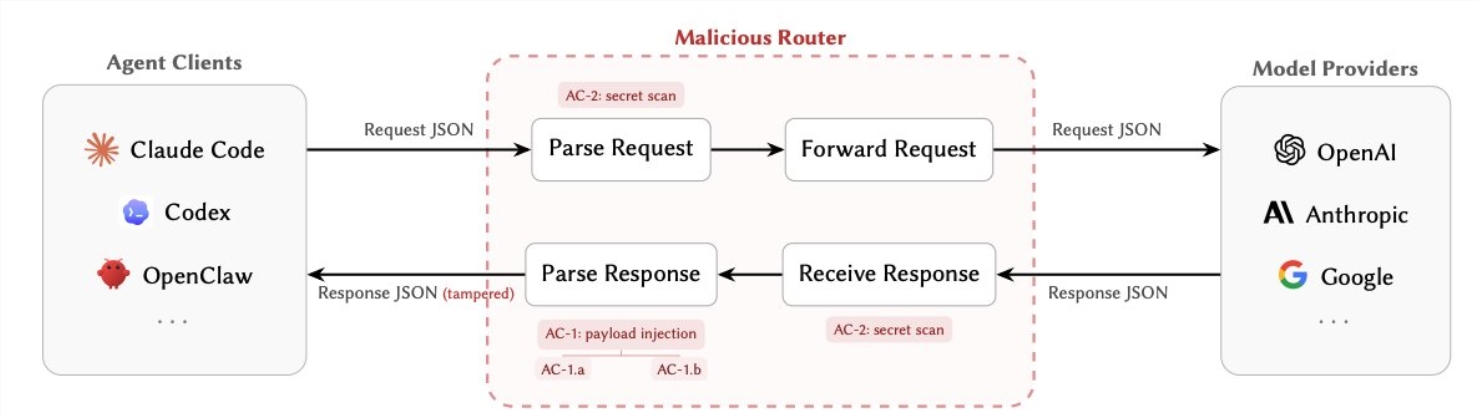

Ant Group's solution builds on the company's twenty years of payment security experience, now applied to AI threats. Its DINOv3-based detection framework is meant to carry detection from laboratory conditions into practical applications. The design gives the detection model "two eyes," one for fine details and another for broader patterns, so that the two views together produce a more comprehensive analysis.

Inside the Winning Approach

For the robustness competition, the team compiled an enormous training dataset spanning millions of samples from leading open-source collections. They went beyond clean laboratory conditions, simulating real-world distortions like social media compression and camera rephotography. This rigorous preparation paid off when their dual-stream architecture outperformed competitors in challenging conditions.
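To make the idea of training on degraded images concrete, here is a minimal sketch of a degradation pipeline. It is not the team's code: the article does not describe their augmentation in detail, so every function name and parameter below is hypothetical. Block averaging and value quantization stand in for social media resizing and lossy compression, and additive noise loosely stands in for rephotography. A real pipeline would more likely use actual JPEG re-encoding from an image library.

```python
import random


def downscale_upscale(img, factor):
    """Average each factor x factor block, then paint the mean back at full size."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for by in range(0, h, factor):
        for bx in range(0, w, factor):
            ys = range(by, min(by + factor, h))
            xs = range(bx, min(bx + factor, w))
            mean = sum(img[y][x] for y in ys for x in xs) // (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


def quantize(img, step):
    """Snap pixel values down to multiples of step (crude proxy for lossy compression)."""
    return [[(p // step) * step for p in row] for row in img]


def add_noise(img, sigma, rng):
    """Add Gaussian noise and clamp to the 0..255 range."""
    return [[max(0, min(255, round(p + rng.gauss(0, sigma)))) for p in row]
            for row in img]


def degrade(img, rng):
    """Apply a random subset of the distortions to a grayscale image (list of rows)."""
    img = [row[:] for row in img]  # work on a copy so the input is never modified
    if rng.random() < 0.5:
        img = downscale_upscale(img, rng.choice([2, 4]))
    if rng.random() < 0.5:
        img = quantize(img, rng.choice([8, 16, 32]))
    if rng.random() < 0.5:
        img = add_noise(img, rng.uniform(1.0, 8.0), rng)
    return img
```

During training, each sample would pass through something like `degrade(image, random.Random(seed))` before reaching the detector, so the model sees both clean and distorted versions of real and generated images.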

Equally notable is their "Locate-Then-Examine" method, which doesn't just flag fake content but pinpoints where manipulations occur. "It's like having a forensic analyst inside the algorithm," one judge remarked. The team has open-sourced its detection tools, inviting broader collaboration against deepfake threats.
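The two-stage idea of locating a region first and examining it second can be illustrated with a toy example. This is only a sketch of the general pattern, not the published method: the article gives no algorithmic details, so the scoring rule (neighbor-pixel differences), the function names, and the thresholds are all assumptions chosen for illustration.

```python
from statistics import median


def patch_energy(img, top, left, size):
    """Mean absolute difference between horizontal neighbors inside one patch."""
    total, count = 0, 0
    for y in range(top, top + size):
        for x in range(left, left + size - 1):
            total += abs(img[y][x + 1] - img[y][x])
            count += 1
    return total / count


def locate(img, size):
    """Stage 1: find the patch whose energy deviates most from the median patch."""
    h, w = len(img), len(img[0])
    scores = {}
    for top in range(0, h - size + 1, size):
        for left in range(0, w - size + 1, size):
            scores[(top, left)] = patch_energy(img, top, left, size)
    typical = median(scores.values())
    top, left = max(scores, key=lambda k: abs(scores[k] - typical))
    return (top, left, size)


def examine(img, box, threshold):
    """Stage 2: inside the located box, list pixels with a large jump to the right."""
    top, left, size = box
    return [(y, x)
            for y in range(top, top + size)
            for x in range(left, left + size - 1)
            if abs(img[y][x + 1] - img[y][x]) > threshold]


def locate_then_examine(img, size=4, threshold=50):
    """Return a verdict together with the region and pixels that support it."""
    box = locate(img, size)
    pixels = examine(img, box, threshold)
    return {"suspicious": bool(pixels), "box": box, "pixels": pixels}
```

The point of the split is that the result carries its own evidence: alongside the yes/no verdict, the caller gets the box and the individual pixels that triggered it.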

Real-World Protections

The face detection victory holds particular significance for financial security. Ant International's technology can spot subtle anomalies in identification documents, crucial for preventing fraud in cross-border transactions and account openings. As synthetic identity fraud grows more sophisticated, these detection capabilities form a vital defense layer.

CVPR, alongside ICCV and ECCV, stands as one of computer vision's most prestigious conferences. This year's challenge drew over 500 global teams, making Ant Group's dual victory especially noteworthy. Their success demonstrates how security expertise from one domain can powerfully address emerging challenges in another.

Key Points:

- Dual Challenge Wins: Ant Group won both the "Robustness Sample Testing" and "Face Enhancement Anomaly Detection" tracks

- Real-World Ready: Technology tested against practical scenarios like social media distortions

- Explainable AI: New "Locate-Then-Examine" method pinpoints where manipulations occur

- Open Collaboration: Comprehensive detection tools shared via GitHub

- Financial Applications: Critical for securing digital payments and identity verification