Ant Group and Tsinghua Unveil Open-Source Security Shield for AI Agents

A New Guardian for AI Agents

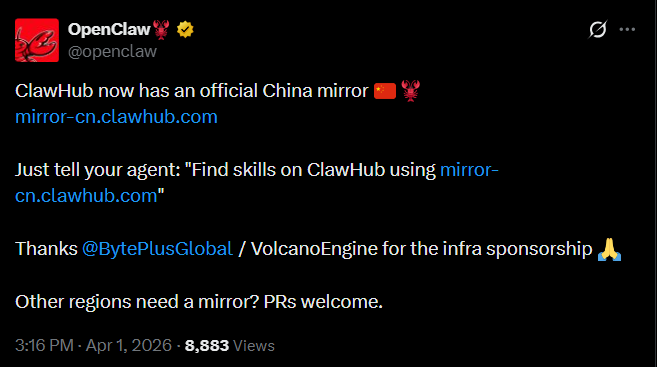

In a move that could reshape how autonomous systems are secured, Ant Group's AI Security Lab and Tsinghua University have open-sourced ClawAegis, a security plugin designed specifically for OpenClaw-type intelligent agents. Released on April 2, the plugin is billed as the first attempt to provide end-to-end protection for AI agents across their full operational lifecycle.

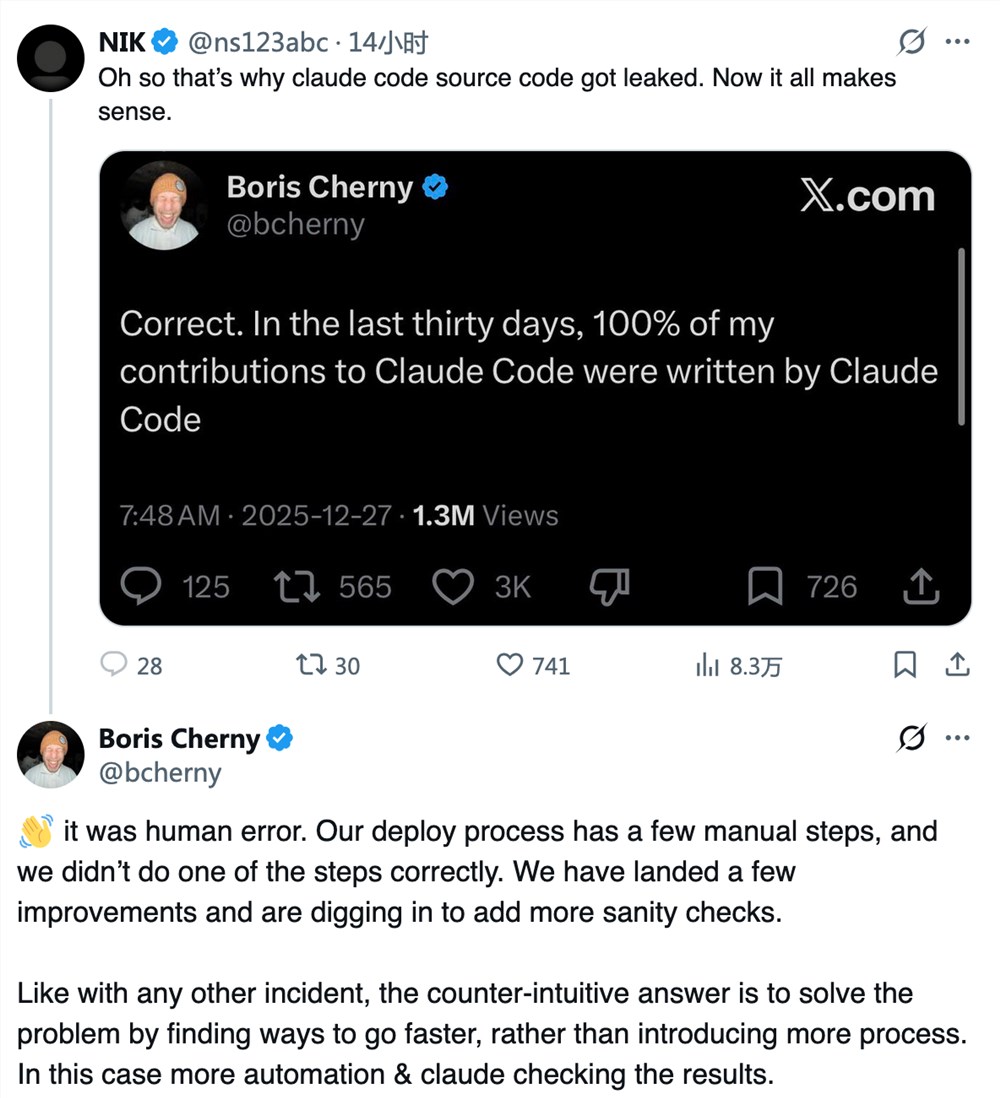

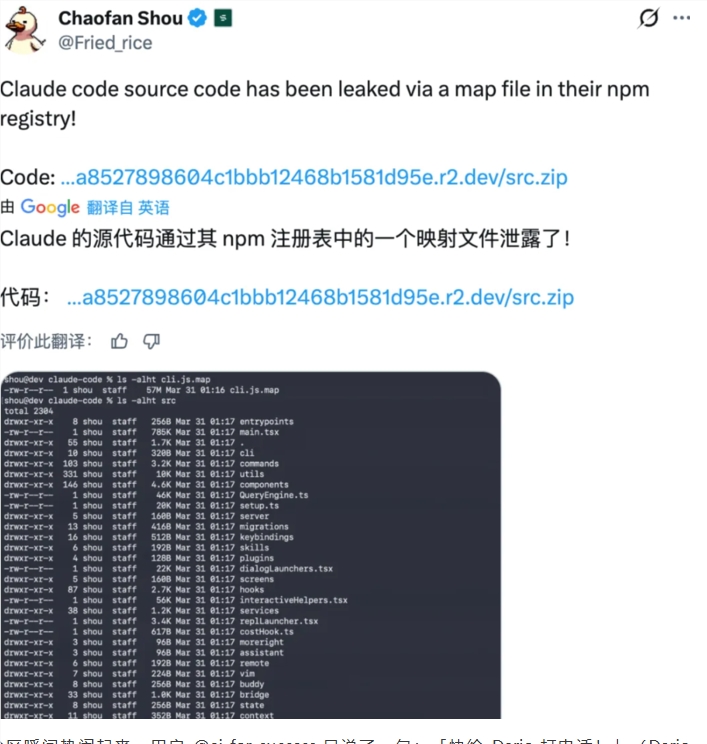

The Growing Pains of Agent Technology

As frameworks like OpenClaw gain traction among developers, their security vulnerabilities are coming into sharp focus. These autonomous systems face threats at every turn:

- Skill poisoning that corrupts their capabilities

- Memory contamination that alters decision-making processes

- Malicious guidance that leads to unintended actions

- Resource exhaustion attacks that cripple performance

"We're seeing threats emerge across five critical stages," explains a project lead who asked to remain anonymous. "From initialization through execution, these agents need constant protection without compromising their functionality."

How ClawAegis Works Its Magic

The new security plugin operates like a digital immune system for AI agents:

- Multi-layered defense: Actively monitors all operational phases

- Real-time intervention: Blocks threats like unauthorized access or data theft mid-execution

- Seamless integration: Runs as a lightweight component within existing OpenClaw frameworks

- Configurable protection: Allows security teams to customize responses to emerging threats

- Transparent operation: Maintains performance while safeguarding sensitive assets

What sets ClawAegis apart is its ability to dynamically activate protections precisely when needed, rather than running constant heavy scans that slow down operations.
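To make the idea of stage-specific, on-demand protection more concrete, here is a minimal Python sketch. It is not ClawAegis code and does not reflect its actual API: the names `Stage`, `Event`, `GuardRegistry`, and `block_secret_reads` are hypothetical, and the string-matching rule is only a stand-in for a real detection policy. It simply illustrates how guards could be attached to the five stages and run only when an event from that stage arrives.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List, Optional


class Stage(Enum):
    # The five stages named in the article; identifiers are illustrative.
    INITIALIZATION = "initialization"
    INPUT_PROCESSING = "input_processing"
    REASONING = "reasoning"
    DECISION_MAKING = "decision_making"
    EXECUTION = "execution"


@dataclass
class Event:
    stage: Stage
    payload: str


# A guard returns a reason string to block the event, or None to allow it.
Guard = Callable[[Event], Optional[str]]


@dataclass
class GuardRegistry:
    guards: Dict[Stage, List[Guard]] = field(default_factory=dict)
    enabled: Dict[Stage, bool] = field(default_factory=dict)

    def register(self, stage: Stage, guard: Guard) -> None:
        self.guards.setdefault(stage, []).append(guard)
        self.enabled.setdefault(stage, True)

    def check(self, event: Event) -> Optional[str]:
        # Only guards for the event's own stage run, and only if that
        # stage is switched on, so unrelated checks add no overhead.
        if not self.enabled.get(event.stage, False):
            return None
        for guard in self.guards.get(event.stage, []):
            reason = guard(event)
            if reason is not None:
                return reason  # block on the first guard that objects
        return None


def block_secret_reads(event: Event) -> Optional[str]:
    # Hypothetical rule: refuse commands that touch credential files.
    if ".ssh/" in event.payload or ".env" in event.payload:
        return "access to sensitive path"
    return None


registry = GuardRegistry()
registry.register(Stage.EXECUTION, block_secret_reads)

blocked = registry.check(Event(Stage.EXECUTION, "cat ~/.ssh/id_rsa"))
allowed = registry.check(Event(Stage.EXECUTION, "ls ./project"))
skipped = registry.check(Event(Stage.REASONING, "cat ~/.ssh/id_rsa"))
```

In this sketch, `blocked` holds a reason string while `allowed` and `skipped` are `None`: the guard fires only for execution-stage events, and flipping `registry.enabled[Stage.EXECUTION]` to `False` stands in for the kind of configurable response a security team might want.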

The Road Ahead for AI Security

This release builds on Ant Group's recent efforts to patch vulnerabilities in OpenClaw implementations. The research teams plan continuous updates, working with the open-source community to enhance ClawAegis's capabilities.

The ultimate goal? Creating an ecosystem where intelligent agents can operate with verifiable trust, with their actions controllable and traceable at every step.

Key Points:

- First comprehensive security solution for OpenClaw-type agents

- Addresses risks across five stages: initialization, input processing, reasoning, decision-making, and execution

- Lightweight design maintains system performance

- Configurable protections adapt to evolving threats

- Part of broader push toward trustworthy autonomous systems