Alibaba's Qwen3-VL Outperforms Rivals in Spatial Reasoning Tests

Alibaba's AI Model Breaks New Ground in Spatial Understanding

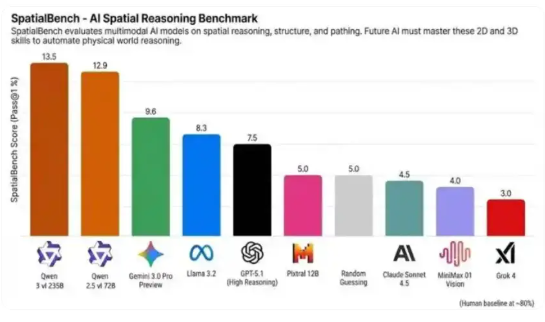

Alibaba's Qwen vision models have claimed the top spots on SpatialBench, a benchmark that tests AI spatial reasoning. The newer Qwen3-VL scored 13.5 points and its predecessor Qwen2.5-VL followed closely with 12.9 points, both outperforming competing models from Google and OpenAI.

What Makes SpatialBench Special?

SpatialBench evaluates how well AI systems handle real-world spatial challenges, from interpreting engineering diagrams to understanding molecular structures. Often called the "litmus test for embodied intelligence," it pushes models beyond simple image recognition toward genuine spatial comprehension.

Why Qwen3-VL Stands Out

The latest version brings several improvements:

- Enhanced 3D Perception: By adding rotated bounding box outputs and depth estimation, the model achieves a reported 18% accuracy gain in cluttered scenes where objects partially occlude one another.

- Sketch-to-Code Functionality: Users can draw rough diagrams or upload short videos, and the system converts them directly into working Python code that uses OpenCV, turning visual ideas into executable programs.

- Flexible Scaling Options: Available in sizes ranging from compact 2B versions up to massive 235B configurations, allowing different applications to choose their ideal balance of power and efficiency.
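
To make the rotated bounding box idea concrete, here is a minimal sketch of how such a box can be turned into its four corner points. The article does not describe Qwen3-VL's actual output format, so the parameterization used here (center, width, height, angle in degrees) and the function name `rotated_box_corners` are assumptions chosen purely for illustration.

```python
import math


def rotated_box_corners(cx, cy, w, h, angle_deg):
    """Return the four corners of a rotated box as (x, y) tuples.

    Assumed parameterization (not taken from Qwen3-VL documentation):
    center (cx, cy), width w, height h, and a rotation angle in degrees.
    The rotation is counter-clockwise in a y-up frame; in image
    coordinates (y pointing down) it appears clockwise.
    """
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    half_w, half_h = w / 2.0, h / 2.0

    # Corner offsets in the box's own frame, before rotation.
    offsets = [
        (-half_w, -half_h),
        (half_w, -half_h),
        (half_w, half_h),
        (-half_w, half_h),
    ]

    corners = []
    for dx, dy in offsets:
        # Standard 2D rotation, followed by translation to the box center.
        x = cx + dx * cos_t - dy * sin_t
        y = cy + dx * sin_t + dy * cos_t
        corners.append((x, y))
    return corners


if __name__ == "__main__":
    # Hypothetical example: a 40x20 box centered at (100, 50), rotated 30 degrees.
    for corner in rotated_box_corners(100, 50, 40, 20, 30):
        print(f"({corner[0]:.2f}, {corner[1]:.2f})")
```

Unlike an axis-aligned box, a rotated box can hug an object that sits at an angle, which is one plausible reason it helps when objects overlap in cluttered scenes.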

Practical Applications Already Underway

Alibaba Cloud reports that early implementations show promising results:

- Logistics robots using Qwen3-VL achieve spatial positioning accurate within 2 centimeters

- AR assembly systems demonstrate improved part alignment

- Smart port operations benefit from enhanced container tracking

The company plans to release an end-to-end "vision-action" model by 2026 that could give robots real-time visual coordination abilities.

Availability Timeline

The previous generation, Qwen2.5-VL, is already open source. Qwen3-VL's code and tools are expected to become publicly available by mid-2025 through Alibaba's forthcoming Qwen App.

Key Points:

- Alibaba's Qwen models lead in spatial reasoning benchmarks

- New features enable better 3D understanding and visual programming

- Practical deployments show centimeter-level accuracy

- Open source release planned for 2025