Google's Gemini 3 Flash Now Sees Like a Human Detective

Google's AI Learns to Examine Images Like Human Experts

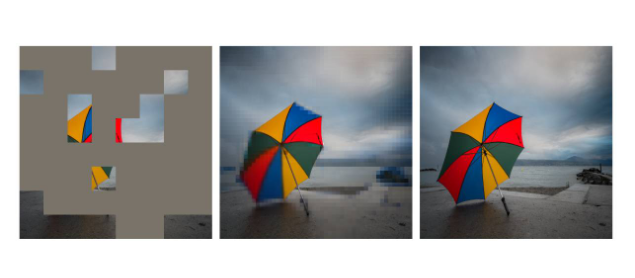

Imagine an AI that doesn't just look at pictures but actually studies them - zooming in on important details, circling relevant sections, and piecing together clues like a detective. That's exactly what Google's new Agentic Vision technology brings to its lightweight Gemini 3 Flash model.

From Glancing to Investigating

Traditional AI vision systems share a fundamental limitation: they process an entire image in a single pass, often missing crucial details in complex scenes. Road signs become blurry smudges in the distance, intricate diagrams turn into indecipherable patterns, and small text simply disappears.

"It was like trying to read a book by holding it at arm's length," explains Dr. Elena Rodriguez, Google's computer vision lead. "Now we've given our AI the ability to pick up that book, turn the pages, and even use a magnifying glass when needed."

The breakthrough comes from mimicking how humans examine complex visuals. When presented with a challenging image, Gemini 3 Flash:

- Creates an analysis plan

- Uses Python code to manipulate the image (cropping, rotating, annotating)

- Studies these enhanced views

- Delivers its final assessment
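The four steps above can be sketched as a small plan, act, observe loop. This is only an illustration, not the code Gemini actually generates: it uses a tiny grayscale image stored as a list of rows and the standard library alone, and every function name here (`crop`, `zoom`, `rotate_90_clockwise`, `brightest_region`, `investigate`) is hypothetical. The "plan" is simply assumed to be "look closer at the brightest region".

```python
# Illustrative sketch only: a toy "plan, act, observe" pass over a grayscale
# image stored as a list of rows. All names are hypothetical.

def crop(image, top, left, height, width):
    """Return the sub-image starting at (top, left) with the given size."""
    return [row[left:left + width] for row in image[top:top + height]]

def zoom(image, factor):
    """Upscale by an integer factor using nearest-neighbor repetition."""
    zoomed = []
    for row in image:
        wide_row = [pixel for pixel in row for _ in range(factor)]
        zoomed.extend(list(wide_row) for _ in range(factor))
    return zoomed

def rotate_90_clockwise(image):
    """Rotate the image a quarter turn clockwise."""
    return [list(row) for row in zip(*image[::-1])]

def brightest_region(image, size):
    """Plan step: pick the size x size window with the highest pixel sum."""
    best_sum, best_pos = -1, (0, 0)
    for top in range(len(image) - size + 1):
        for left in range(len(image[0]) - size + 1):
            total = sum(sum(row[left:left + size])
                        for row in image[top:top + size])
            if total > best_sum:
                best_sum, best_pos = total, (top, left)
    return best_pos

def investigate(image, size=2, factor=3):
    """Run one plan -> act -> observe pass and record each step."""
    steps = []
    top, left = brightest_region(image, size)                  # plan
    steps.append(f"plan: inspect {size}x{size} region at row {top}, col {left}")
    detail = zoom(crop(image, top, left, size, size), factor)  # act
    steps.append(f"act: cropped and zoomed to {len(detail)}x{len(detail[0])}")
    peak = max(max(row) for row in detail)                     # observe
    steps.append(f"observe: peak intensity {peak}")
    return detail, steps

image = [
    [0, 0, 0, 0],
    [0, 1, 9, 0],
    [0, 8, 7, 0],
    [0, 0, 0, 0],
]
detail, steps = investigate(image)
for step in steps:
    print(step)
```

The recorded `steps` list stands in for the visible trace of intermediate work; a real system would presumably operate on full-resolution images with an imaging library rather than nested lists.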

Practical Benefits Emerging

Google reports a 5-10% quality improvement across most vision benchmarks when the model can execute code. The gains show up on difficult visual tasks such as:

- Reading distant street signs

- Analyzing complex technical diagrams

- Identifying subtle patterns in medical imagery

The technology isn't just smarter - it's more transparent too. Developers can watch as the AI "shows its work" through each investigative step.

Coming Soon to Your Phone

Currently available through Google's developer platforms (Google AI Studio and Vertex AI), Agentic Vision will soon reach general users via:

- Thinking Mode in Gemini apps

- Mobile AI assistants

- Potentially integrated into Google Lens

The implications are vast - from helping visually impaired users navigate spaces to assisting scientists analyzing microscopic images.

Key Points:

- 🔍 Active investigation: No more passive image scanning - Gemini now explores visuals methodically

- 🛠️ Code-powered analysis: Automatically generates Python scripts to manipulate images

- 📱 Coming to consumers: Will debut in mobile assistants soon

- 🎯 Accuracy boost: Delivers measurable improvements on tough visual tasks