Xiaomi Open-Sources Multimodal AI Model MiMo-VL-7B-2508


Xiaomi's AI research team has open-sourced MiMo-VL-7B-2508, a multimodal large language model. The release includes two versions of the model: one trained with Reinforcement Learning (RL) and one with Supervised Fine-Tuning (SFT).

Breakthrough Performance Metrics

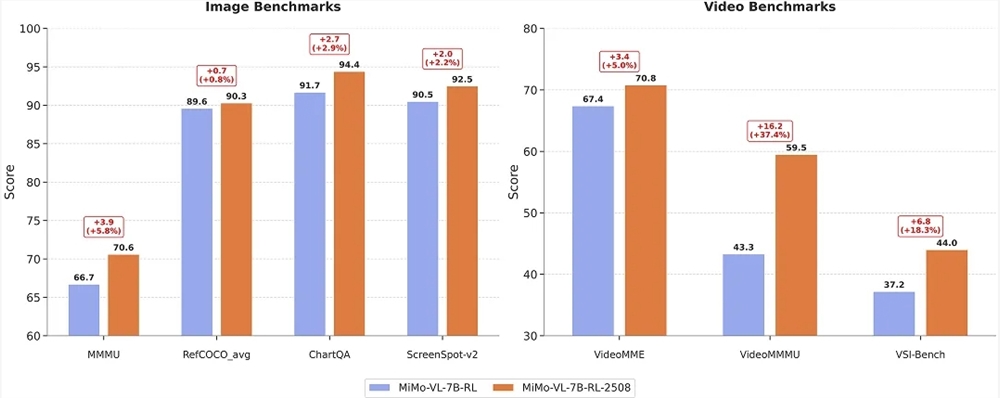

The new model reports the following benchmark results across four domains:

- Subject reasoning: above 70 on MMMU

- Document understanding: 94.4 on ChartQA

- GUI grounding: 92.5 on ScreenSpot-v2

- Video understanding: improved to 70.8 on VideoMME

Technical Enhancements

The latest iteration shows substantial improvements in:

- Reinforcement learning stability

- Supervised fine-tuning processes

- Internal VLM Arena score: increased from 1093.9 to 1131.2, a gain of 37.3 points

User-Centric Features

The model introduces innovative interaction modes:

- Thinking mode: Displays complete reasoning chains (100% control success rate)

- Non-thinking mode: Direct answer generation (99.84% success rate with faster responses)

Users can toggle between modes using the `/no_think` instruction.
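As a rough illustration of the mode switch, the sketch below builds a user message and adds the `/no_think` instruction when non-thinking mode is requested. It assumes the instruction is appended to the end of the query text. The helper name `build_user_message` and the `role`/`content` message layout are hypothetical conventions chosen for this example, not something the release specifies.

```python
NO_THINK_TAG = "/no_think"  # instruction named in the article


def build_user_message(question: str, thinking: bool = True) -> dict:
    """Return a chat-style user message, optionally requesting non-thinking mode.

    Assumption: the instruction is appended to the end of the query text.
    The role/content layout is a common chat convention, used here only
    for illustration.
    """
    text = question.strip()
    # Append the tag only when non-thinking mode is requested and the
    # tag is not already present at the end of the query.
    if not thinking and not text.endswith(NO_THINK_TAG):
        text = f"{text} {NO_THINK_TAG}"
    return {"role": "user", "content": text}


if __name__ == "__main__":
    # Thinking mode: the query is passed through unchanged.
    print(build_user_message("What does this chart show?"))
    # Non-thinking mode: the instruction is appended to the query.
    print(build_user_message("What does this chart show?", thinking=False))
```

Keeping the switch in a single helper means the rest of an application can pass a boolean flag rather than editing prompt strings by hand.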

Available Model Versions

MiMo-VL-7B-RL-2508

- Recommended for general use cases

- Open source repository

MiMo-VL-7B-SFT-2508

- Suitable for custom fine-tuning

- Improved RL stability over previous versions

- Open source repository

Key Points

✅ Reported gains in four core capabilities: subject reasoning, document understanding, GUI grounding, and video understanding

✅ Dual-mode operation optimizes for accuracy or speed

✅ Fully open-sourced with commercial-friendly licensing

✅ Enhanced stability for reinforcement learning applications