WeChat Takes Hard Line Against AI Celebrity Impersonators

WeChat Clamps Down on AI-Powered Celebrity Scams

As AI tools for face-swapping and voice cloning become frighteningly accessible, WeChat finds itself battling an epidemic of digital imposters. The platform recently revealed its aggressive campaign against accounts using these technologies to mimic celebrities - a trend that's been fueling everything from shady marketing schemes to outright fraud.

The Deepfake Dilemma

Imagine scrolling through your feed and seeing your favorite actor endorsing a questionable investment opportunity - except they never actually did. That's the disturbing reality WeChat's security teams have been confronting. Their monitoring systems uncovered accounts creating eerily accurate fake videos and audio clips featuring public figures' likenesses without consent.

These forged endorsements aren't just harmless pranks. They're frequently deployed in elaborate scams designed to manipulate fans' trust. "When someone sees their idol apparently recommending a product, their guard naturally drops," explains cybersecurity expert Li Wei (not affiliated with WeChat). "Scammers bank on that instant credibility."

Human + Machine Defense Strategy

Facing this high-tech deception, WeChat adopted a dual approach:

- Human vigilance: Expanded reporting channels encourage users to flag suspicious content

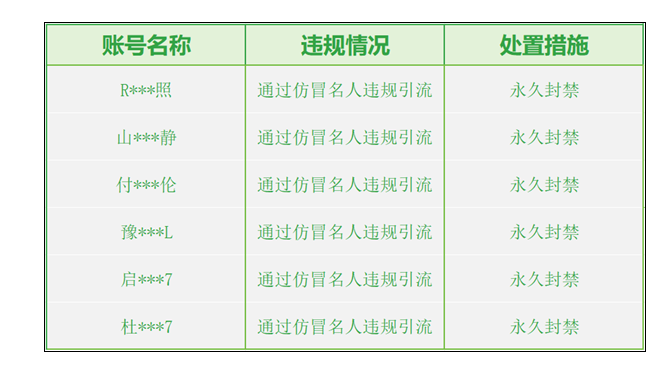

- AI detection: Upgraded algorithms now spot subtle artifacts in synthetic media that escape human eyes

The results? Over 13,000 pieces of violating content scrubbed from the platform and more than 1,200 accounts suspended - some permanently banned for particularly egregious violations.

Staying Ahead of the Game

The platform acknowledges this is just the opening salvo in an ongoing arms race. "As forgery tools improve, so must our defenses," states WeChat's latest transparency report. Future upgrades will focus on:

- Faster identification of new deepfake techniques

- Streamlined processes for removing harmful content

- Stronger penalties for repeat offenders

The company also emphasizes user education: "If something seems too good - or too strange - to be true from a public figure, it probably is," its consumer alert warns.

Key Points:

- Massive enforcement: Over 13,000 pieces of violating content removed and more than 1,200 accounts suspended, with permanent bans for the worst offenders

- Focus areas: Primarily targeting financial scams and false endorsements using celebrity likenesses

- Tech arms race: Continuous improvements planned for AI detection capabilities