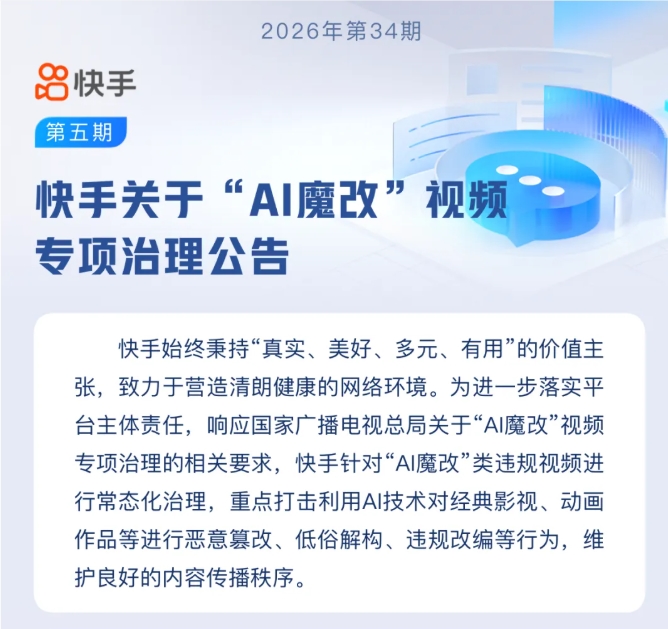

China cracks down on AI celebrity imposters in live-streaming scams

China Takes Hard Stance Against AI-Powered Celebrity Scams

The digital landscape just got tougher for fraudsters using artificial intelligence to impersonate celebrities. China's cyberspace watchdog has launched its most aggressive campaign yet against AI-generated imposters flooding live-streaming platforms.

Deepfake Deception Goes Mainstream

With generative AI tools becoming frighteningly accessible, unscrupulous marketers have found new ways to exploit public trust. Sophisticated face-swapping and voice-cloning technologies now allow anyone to digitally "become" a celebrity overnight.

"We're seeing entire networks of accounts fabricating endorsements from famous figures," explained one platform moderator who requested anonymity. "The technology has gotten so good that even careful viewers can't always spot the fakes."

The scams follow a familiar pattern: AI-generated versions of popular actors, singers or influencers appear to enthusiastically promote products ranging from skincare to kitchenware. Viewers who believe the recommendations are genuine often end up buying overpriced or counterfeit goods.

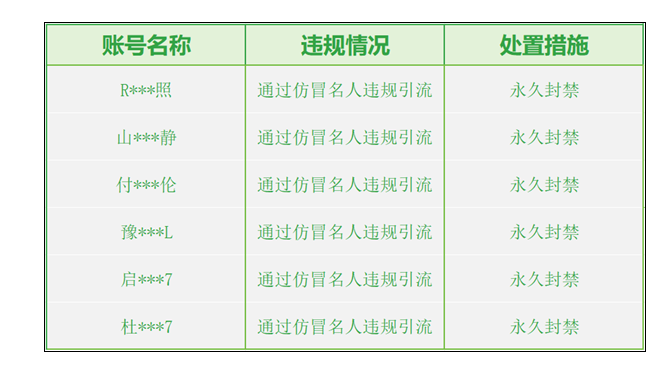

Enforcement Hits Hard

The Cyberspace Administration's recent sweep targeted offenders such as "Baihu Supermarket Store" and "Global Skincare Selection," accounts that built entire businesses on fabricated celebrity personas. Platforms were ordered to implement stricter verification systems and to remove existing violating content.

The numbers tell the story:

- 11,000+ fake accounts terminated

- 8,700+ pieces of content removed

- 100% of major platforms participating in compliance efforts

"This isn't just about copyright anymore," noted digital rights attorney Li Wenjie. "These scams undermine consumer trust in entire industries while putting real celebrities' reputations at risk."

What Comes Next?

The administration says this is only the first phase. New detection algorithms are being deployed across platforms, and human review teams are receiving specialized training to spot increasingly sophisticated deepfakes.

For consumers, experts offer simple advice: "If a deal seems too good to be true, or a celebrity endorsement feels out of character, it probably is," cautioned tech analyst Zhang Wei. "Always verify through official channels before purchasing."

The crackdown represents China's latest move in the global struggle against AI-facilitated deception. As the technology evolves, so too must our defenses against those who would weaponize it.

Key Points:

- Deepfake crackdown: Targeting AI-generated celebrity impersonations in live-streams

- Massive scale: Over 11,000 accounts banned in initial enforcement wave

- Evolving threats: Scammers using increasingly sophisticated face/voice cloning tech

- Consumer vigilance: Experts warn shoppers to verify suspicious endorsements

- Ongoing battle: Platforms implementing new detection systems as scams evolve