Tongyi Lab's New Video Tool Makes Editing as Easy as Writing a Document

Tongyi Lab Democratizes Video Production with AI-Powered Wan2.7-Video

Imagine being able to edit videos as effortlessly as you compose an email. That's the promise behind Aliyun Tongyi Lab's latest innovation, Wan2.7-Video, which launched this week to address two persistent pain points in digital content creation: technical complexity and creative limitations.

Breaking Down Creative Barriers

The platform represents a major departure from conventional video editors. "We've essentially built word processing software for visual storytelling," explains a Tongyi Lab spokesperson. Users can now manipulate every element of their videos - from scene composition to character dialogue - by typing simple text prompts or dragging existing media assets into the system.

What sets Wan2.7-Video apart is its multimodal understanding. Feed it text descriptions, reference images, existing video clips, or even audio cues, and the AI generates coherent visual sequences. Need your protagonist to deliver different lines? Just type the new dialogue. Want to transport your beach scene to a snowy mountain? A single command makes it happen.

Hollywood-Grade Editing at Your Fingertips

Professional filmmakers might raise an eyebrow at claims of "one-click" environment changes, but early testers report that the tool delivers remarkable results. The background replacement feature doesn't just slap on new scenery - it adjusts lighting, shadows, and perspective to keep the result visually consistent.

The editing capabilities border on magical:

- Object manipulation: Delete unwanted elements (goodbye photobombers!) or swap props without leaving artifacts

- Temporal control: Adjust pacing and transitions down to individual frames

- Style transfer: Apply cinematic filters or mimic specific directors' visual signatures

- Performance tweaking: Modify actors' expressions and movements post-production

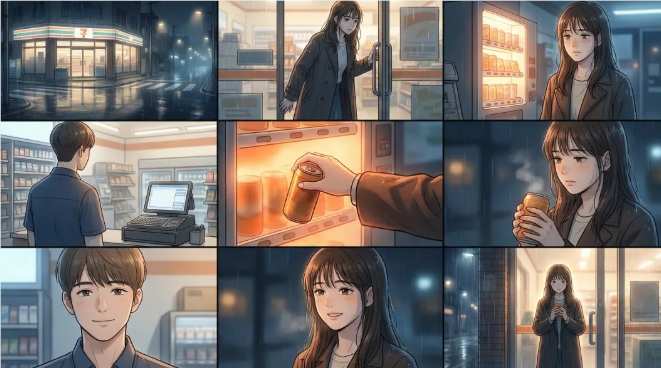

Creative Superpowers for Storytellers

Where Wan2.7-Video truly shines is in narrative flexibility. Writers can experiment with alternate plotlines by regenerating scenes instead of reshooting them. Content creators can repurpose successful sequences across multiple projects while maintaining brand consistency.

The "story continuation" feature particularly excites educators and marketers. Start with an existing video clip, then have the AI generate logical extensions - perfect for serialized content or branching narrative experiments.

Key Points:

- Intuitive interface replaces complex editing software with simple text commands

- Multimodal input accepts text, images, video clips and audio as creative starting points

- Non-destructive editing preserves original footage while enabling infinite variations

- Style replication maintains visual coherence when modifying existing content

- Real-time collaboration allows teams to work simultaneously on different scene elements