NVIDIA Bets Big on Groq Tech for New AI Chip as OpenAI Signs On

NVIDIA Doubles Down on AI Inference With Groq-Powered Chip

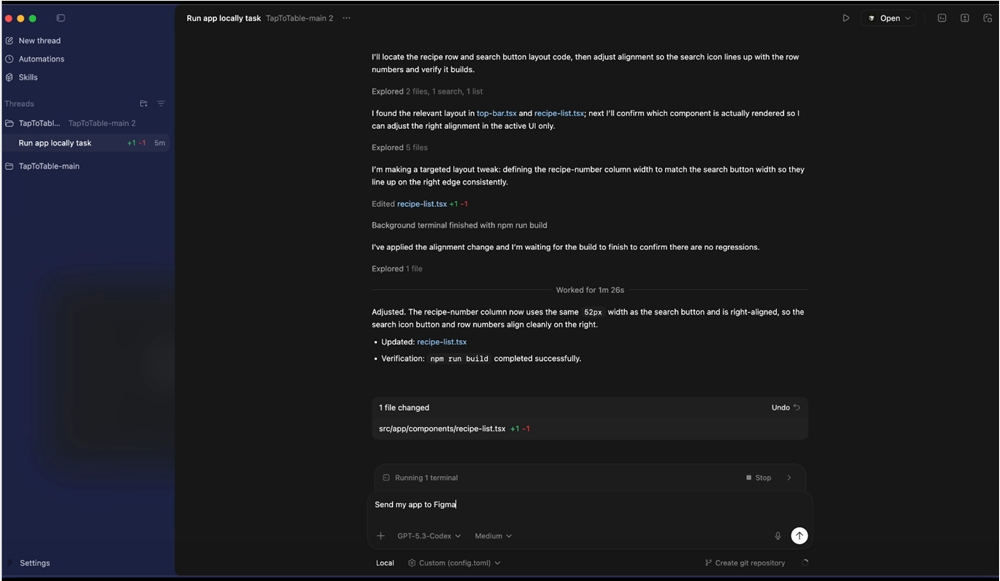

NVIDIA is making strategic moves to stay ahead in the intensifying battle for AI chip supremacy. At its upcoming GTC developer conference, the company plans to introduce a specialized processor designed for AI inference, the stage at which trained models actually generate responses.

What makes this launch particularly noteworthy? The chip incorporates breakthrough technology from Groq, the much-talked-about startup whose "language processing units" have demonstrated remarkable efficiency in handling the decoding step of AI inference. NVIDIA didn't just license the tech: it reportedly paid $2 billion for key patents and brought Groq's core engineering team on board.

Solving the Speed Bump in AI Conversations

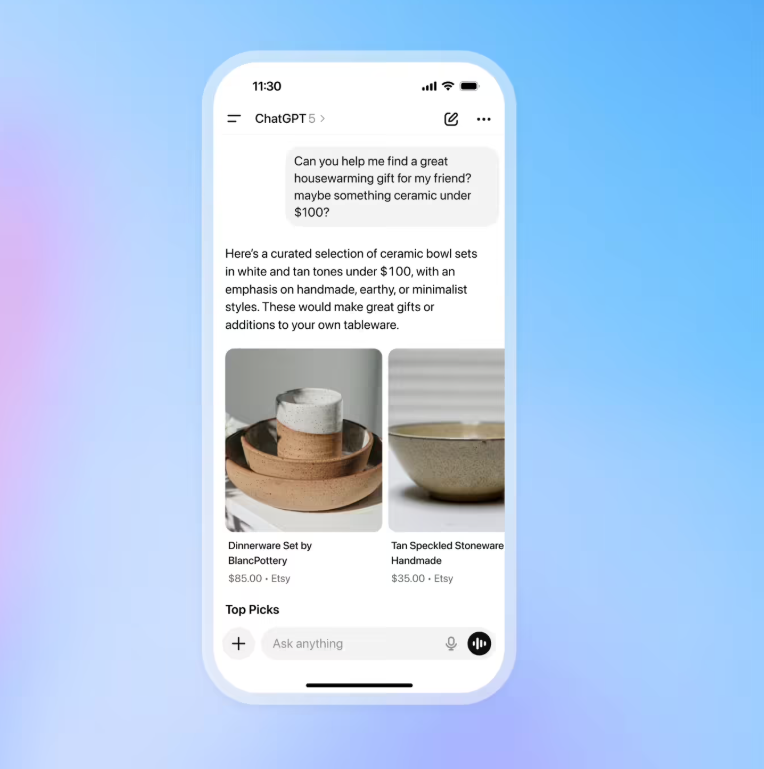

As AI assistants become ubiquitous, users increasingly notice frustrating delays in responses. Traditional GPUs weren't optimized for this kind of real-time interaction. Groq's architecture is designed to streamline how AI models process and generate language, potentially making conversations flow more naturally while using less energy.

"This isn't just another chip iteration," observes industry analyst Mark Chen. "NVIDIA recognized they needed outside expertise to solve specific bottlenecks in inference performance. The Groq acquisition gives them weapons no competitor currently has."

OpenAI Comes Back Into the Fold

The development handed NVIDIA a major win with OpenAI, which had been exploring alternatives due to concerns about GPU costs and power demands. After testing prototypes of the new Groq-enhanced platform, OpenAI committed as a launch customer, a significant reversal after it had signed deals with startups like Cerebras.

Sources indicate OpenAI plans to use the chips to supercharge its Codex programming tool, sharpening its competitive edge against rivals like Anthropic. The endorsement from such a prominent AI developer could sway other companies weighing their hardware options.

The Chip Wars Escalate

NVIDIA's move comes as tech titans increasingly develop in-house alternatives:

- Google's TPU v5 promises 3x performance gains

- Amazon's Trainium2 targets cost-efficient model training

- Microsoft is rumored to be working on its own accelerator

The competition reflects how crucial specialized hardware has become in the AI arms race. While NVIDIA still commands an estimated 80% of the AI chip market, rivals are spending billions to carve out their share.

Key Points:

- NVIDIA integrating Groq tech into new inference-focused processor

- $2 billion investment brings key patents and engineering talent

- OpenAI returning as major customer after testing alternatives

- Chip designed specifically for faster, more efficient AI responses

- Intensifying competition as tech giants develop rival solutions