Moonshot AI and Tsinghua Team Up to Solve AI's Biggest Bottleneck

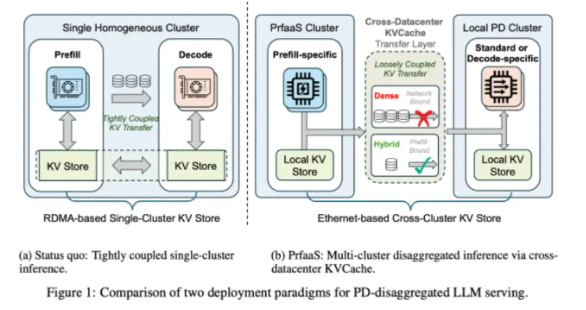

Imagine your favorite AI assistant serving 54% more requests without any hardware upgrades. That is the throughput gain researchers at Moonshot AI and Tsinghua University report for their new serving architecture, Prefill-as-a-Service (PrfaaS). The design tackles one of AI's most persistent headaches - the inefficient way current systems serve large language models.

The Problem: AI's Traffic Jam

Current AI systems face a fundamental dilemma. Processing requests involves two very different tasks:

- The Brainstorm Phase (Prefill): Where the system analyzes your entire input at once, a burst of work dominated by raw computation - think of it like a chef prepping all ingredients before cooking

- The Delivery Phase (Decode): Where the system generates the response one token (roughly one word) at a time, limited mostly by how fast it can read from memory - similar to that chef now carefully plating each dish

The trouble comes when these two processes compete for resources on the same hardware. It's like trying to run a bakery in your kitchen while simultaneously hosting a dinner party - neither task gets what it truly needs.
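To make the contrast between the two phases concrete, here is a minimal Python sketch of a toy cost model. It is not taken from the PrfaaS work: the `PhaseCost` fields and both helper functions are hypothetical names, and the counts are abstract units rather than measurements. The only assumption is the general pattern described above, where prefill handles the whole prompt in one pass and decode runs one pass per generated token while re-reading what has been cached so far.

```python
from dataclasses import dataclass


@dataclass
class PhaseCost:
    passes: int           # sequential model passes needed
    tokens_computed: int  # tokens pushed through the model (compute load)
    cache_reads: int      # cached token entries re-read (memory traffic)


def prefill_cost(prompt_len: int) -> PhaseCost:
    # Toy assumption: one pass over the whole prompt, nothing cached yet to re-read.
    return PhaseCost(passes=1, tokens_computed=prompt_len, cache_reads=0)


def decode_cost(prompt_len: int, output_len: int) -> PhaseCost:
    # Toy assumption: one pass per generated token, and each pass re-reads
    # every entry cached so far (the prompt plus earlier output tokens).
    reads = sum(prompt_len + i for i in range(output_len))
    return PhaseCost(passes=output_len, tokens_computed=output_len, cache_reads=reads)
```

In this toy model, a 1,000-token prompt with a 3-token reply gives prefill a single pass over 1,000 tokens, while decode needs three passes that together re-read 3,003 cached entries. One phase is a burst of computation and the other is a long series of memory reads, which is why they make poor roommates on the same hardware.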

The Solution: A Long-Distance Partnership

The PrfaaS architecture introduces an elegant fix:

- Specialized Teams: High-powered computing clusters handle just the initial heavy lifting (prefill)

- Efficient Handoff: The pre-processed data (the model's cached intermediate state for the prompt, commonly called the KV cache) travels via standard networks to local servers

- Precision Timing: Smart scheduling ensures no single request holds up others
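One way to picture the scheduling idea is a router that keeps long prompts from queuing in front of short ones. The sketch below is an illustration under stated assumptions, not the researchers' scheduler: the length threshold, the class names, and the two-queue layout are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Request:
    request_id: str
    prompt_tokens: int


@dataclass
class PrefillRouter:
    # Hypothetical cutoff: prompts at or above this length are sent to the
    # remote high-powered prefill cluster; shorter ones are prefilled locally.
    long_prompt_threshold: int = 4096
    remote_queue: List[Request] = field(default_factory=list)
    local_queue: List[Request] = field(default_factory=list)

    def route(self, req: Request) -> str:
        """Place the request in a queue and return which path it took."""
        if req.prompt_tokens >= self.long_prompt_threshold:
            self.remote_queue.append(req)
            return "remote"
        self.local_queue.append(req)
        return "local"


router = PrefillRouter(long_prompt_threshold=1000)
long_path = router.route(Request("long-report", 5000))
short_path = router.route(Request("quick-question", 200))
```

Here the 5,000-token request is sent to the remote prefill queue and the 200-token request stays local, so the short request never waits behind the long one. A real scheduler would also have to weigh network bandwidth and cluster load, which this sketch ignores.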

"We're essentially creating an express lane for AI processing," explains one researcher involved in the project. "The heavy thinking happens where computing power is plentiful, while responses get crafted closer to users."

Real-World Impact

The headline results:

- 54% higher throughput, meaning more requests served in the same amount of time

- Noticeably faster first responses for end users

- No more resource gridlock between prefill's compute needs and decode's memory needs

The implications extend beyond just speed. This approach could significantly reduce infrastructure costs for companies deploying large AI models, potentially making powerful AI tools more accessible.

What's Next?

While still in early stages, PrfaaS represents more than just a technical tweak - it suggests a new paradigm for how we might distribute AI workloads geographically. As one team member put it: "This could be the beginning of truly global-scale AI deployment."

The collaboration continues to refine the technology, with industry observers keenly watching how this innovation might reshape our AI-powered future.

Key Points:

- Problem Solved: Separates the compute-intensive prefill phase from the memory-intensive decode phase

- How It Works: Uses specialized clusters for initial processing then efficient data transfer

- Benefits: 54% throughput boost, reduced latency, better resource use

- Big Picture: Could enable more efficient global AI deployment