Mistral AI's Small4: A Versatile Powerhouse for Developers

Mistral AI Raises the Bar with Small4 Release

In the competitive world of open-source AI models, European lab Mistral AI continues to ship at a rapid pace. Its newest release, Small4, is positioned as an all-rounder: a single model that combines reasoning, multimodal, and programming capabilities, so developers don't have to choose between specialized models.

Technical Breakthroughs

Small4 introduces several innovations that set it apart:

- Efficient Architecture: A Mixture of Experts (MoE) design with 119B total parameters, of which only 6B are active for any given token, keeps per-token compute far below that of a dense model of the same size.

- Expanded Context: The 256k-token context window lets it process long technical manuals or sizable codebases in a single prompt.

- Dual Modes: Developers can toggle between quick responses for simple queries and deep reasoning for complex problems.
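To make the numbers above concrete, here is a minimal sketch of the arithmetic behind them. The parameter counts and context size come from the figures quoted above. The characters-per-token ratio and the output reserve are rough assumptions for illustration, not properties of Small4's tokenizer, and all function names are hypothetical.

```python
import math

TOTAL_PARAMS = 119e9       # total parameters, as quoted in the article
ACTIVE_PARAMS = 6e9        # parameters active per token, as quoted in the article
CONTEXT_WINDOW = 256_000   # "256k" read as 256,000 tokens (assumption)

# Rough heuristic for English text; NOT a measured Small4 tokenizer figure.
CHARS_PER_TOKEN = 4.0


def active_fraction(total: float = TOTAL_PARAMS, active: float = ACTIVE_PARAMS) -> float:
    """Share of the model's parameters used to process a single token."""
    return active / total


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Crude token estimate from character count; a real tokenizer will differ."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(
    text: str,
    reserve_for_output: int = 4_000,   # hypothetical budget left for the reply
    context_window: int = CONTEXT_WINDOW,
) -> bool:
    """True if the estimated prompt plus the output reserve fits in the window."""
    return estimate_tokens(text) + reserve_for_output <= context_window


if __name__ == "__main__":
    print(f"active share per token: {active_fraction():.1%}")
    manual = "x" * 1_000_000  # stand-in for a ~1 MB technical manual
    print("fits in one prompt:", fits_in_context(manual))
```

Under these assumptions, roughly one parameter in twenty is active for any given token, and a document of about a million characters sits close to the edge of the window. For real workloads, count tokens with the model's own tokenizer rather than a character heuristic.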

What makes this release particularly notable is its open-source availability under the Apache 2.0 license, which permits commercial use and modification, in contrast to many proprietary alternatives.

Performance That Speaks Volumes

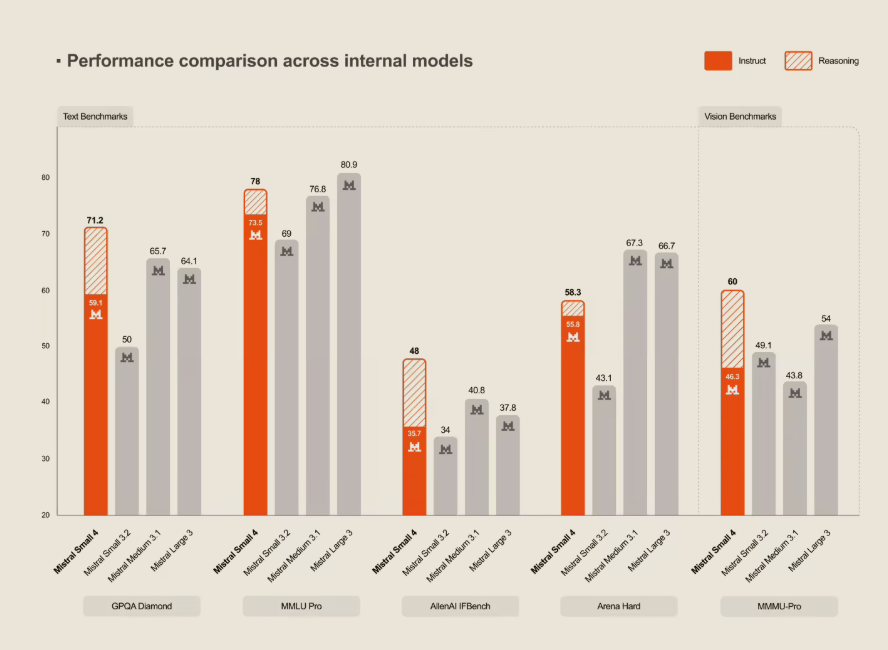

Benchmark tests suggest Small4 is fast as well as versatile. Compared to its predecessor:

- Response times dropped by 40% in latency-optimized mode

- Throughput tripled in optimized configurations
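As a quick worked example of what those two figures mean in practice, the sketch below applies them to made-up baselines. The baseline latency and throughput values are purely hypothetical; only the 40% and 3× factors come from the figures above.

```python
def latency_after_drop(baseline_s: float, drop: float = 0.40) -> float:
    """Latency after a fractional reduction (0.40 means 40% lower)."""
    return baseline_s * (1.0 - drop)


def speedup_from_drop(drop: float = 0.40) -> float:
    """Equivalent speedup factor for a fractional latency reduction."""
    return 1.0 / (1.0 - drop)


def throughput_after_gain(baseline_tps: float, factor: float = 3.0) -> float:
    """Throughput after multiplying by a gain factor (3.0 means tripled)."""
    return baseline_tps * factor


if __name__ == "__main__":
    # Hypothetical predecessor numbers, chosen only to illustrate the arithmetic.
    print(f"latency: 2.00 s -> {latency_after_drop(2.0):.2f} s")
    print(f"equivalent speedup: {speedup_from_drop():.2f}x")
    print(f"throughput: 100 tok/s -> {throughput_after_gain(100.0):.0f} tok/s")
```

Note that a 40% drop in response time corresponds to a speedup of about 1.67×, not 1.4×, because the speedup is the reciprocal of the remaining 60%.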

The model holds its own against industry leaders too, matching OpenAI's gpt-oss-120b (itself an open-weight model) in core assessments.

Hardware Considerations

To run Small4 effectively, Mistral recommends:

- Minimum: 4× HGX H100 or 1× DGX B200 systems

- Optimal: 4× HGX H200 or 2× DGX B200 configurations

These are multi-GPU data-center configurations: within reach of well-resourced teams, but well beyond a typical workstation.
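A back-of-the-envelope estimate helps explain why a model with only 6B active parameters still calls for this class of hardware: in an MoE model, all 119B weights must be resident in memory even though only a fraction is used per token. The sketch below is a rough sizing aid, not Mistral's methodology. The bytes-per-parameter table, the 80 GB device size, and the 80% usable fraction are illustrative assumptions, and it ignores KV cache growth at long context.

```python
import math

# Bytes per parameter for common weight formats (illustrative assumption).
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(total_params: float = 119e9, precision: str = "bf16") -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return total_params * BYTES_PER_PARAM[precision] / 1e9


def min_devices(weights_gb: float, device_mem_gb: float, usable_fraction: float = 0.8) -> int:
    """Fewest accelerators whose usable memory can hold the weights.

    usable_fraction leaves headroom for activations and KV cache; the
    0.8 default is a hypothetical rule of thumb, not a vendor figure.
    """
    return math.ceil(weights_gb / (device_mem_gb * usable_fraction))


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(precision=precision)
        # 80 GB per device is an assumed accelerator size for illustration.
        print(f"{precision}: {gb:.1f} GB of weights, "
              f"at least {min_devices(gb, 80.0)} x 80 GB devices")
```

At 16-bit precision the weights alone come to about 238 GB, which is why a single accelerator is not enough without aggressive quantization. Real deployments also need memory for the KV cache, which grows with context length, so treat these device counts as lower bounds.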

Key Points:

- First true all-rounder combining reasoning, multimodal, and programming capabilities

- MoE architecture balances performance with efficiency (119B total/6B active params)

- Massive 256k context handles complex technical materials with ease

- Open-source availability under Apache 2.0 license fosters community development

- Competitive performance against models like OpenAI's gpt-oss-120b