Microsoft Sounds Alarm: OpenClaw AI Poses Serious Security Risks

Microsoft Flags Critical Vulnerabilities in OpenClaw AI Assistant

In a move that's sending shockwaves through the tech community, Microsoft has sounded the alarm about serious security flaws in its OpenClaw artificial intelligence assistant. The company now advises against running the tool on standard workstations, urging organizations to confine it to fully isolated environments.

Why OpenClaw Raises Red Flags

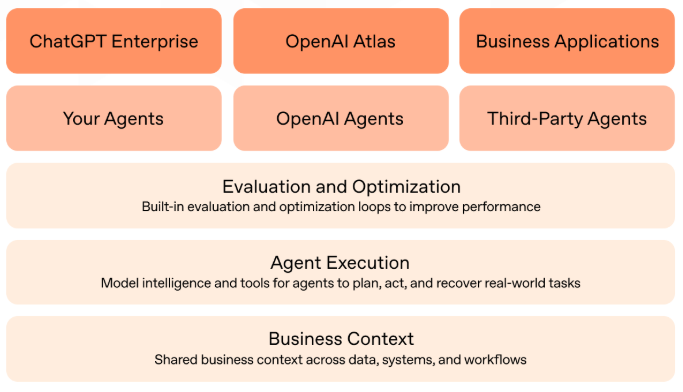

OpenClaw isn't your typical chatbot. Designed as an autonomous agent, it needs carte blanche access to computer systems - emails, files, login credentials - to perform its automated tasks. This "all-access pass" approach gives OpenClaw remarkable capabilities but creates equally remarkable risks.

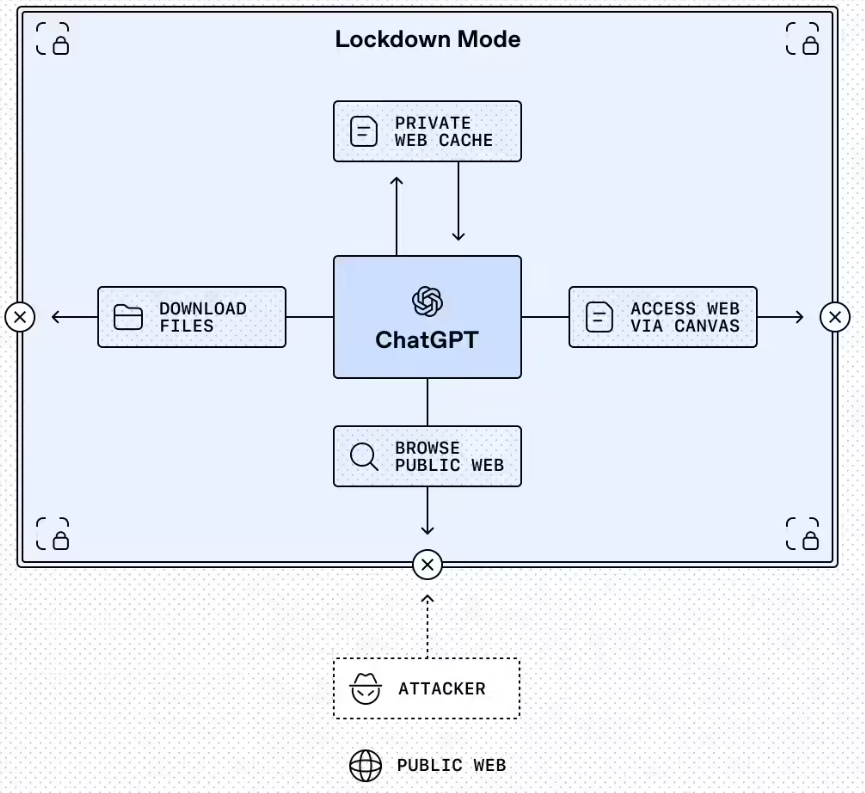

The Microsoft Defender Security Research team minced no words in their assessment: "OpenClaw should be treated as untrusted code execution with persistent credentials." Translation? If compromised, attackers could hijack the AI's memory and make it follow malicious instructions indefinitely.

Two Major Threats Emerge

Security experts have identified two particularly concerning attack vectors:

1. Hidden Commands: Attackers can slip malicious instructions into content OpenClaw processes. These "indirect prompt injections" can subtly reprogram the AI's behavior long-term without triggering alarms.

2. Trojan Horse Skills: The system's ability to download new capabilities becomes its Achilles' heel when hackers disguise malware as legitimate skill modules.

The dangers aren't hypothetical. SecurityScorecard's STRIKE team found over 42,000 vulnerable OpenClaw instances across 82 countries - each potentially giving attackers direct control over host systems.

Microsoft's Safety Recommendations

The tech giant advises organizations considering OpenClaw to:

- Test exclusively in dedicated virtual machines or isolated physical systems

- Use limited-access credentials specifically created for the AI environment

- Implement rigorous monitoring and periodic system rebuilds

- Never deploy directly in production environments handling sensitive data

The OpenClaw situation highlights broader challenges as autonomous AIs enter workplaces. While these tools promise efficiency gains, their security implications demand careful consideration - especially when they require such sweeping system access.