Kling AI's O1 Model Transforms Video Creation with Simple Prompts

Kling AI has publicly launched its O1 video generation model. Unlike conventional systems that require multiple steps, the tool lets creators produce videos from simple text prompts, with no technical expertise required.

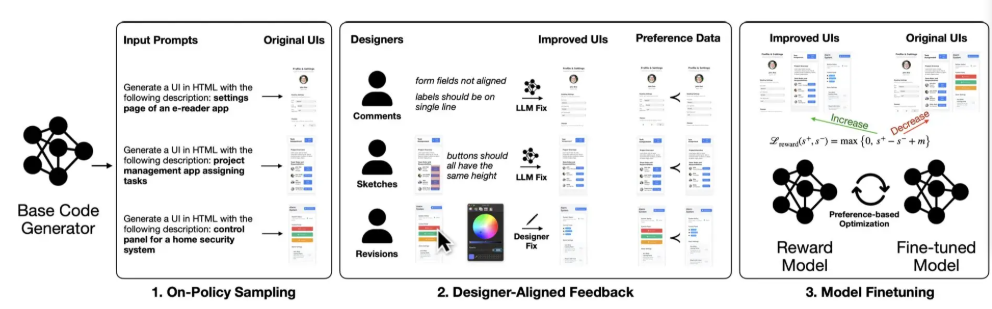

Unified Multimodal Approach

What sets O1 apart is its MVL (Multimodal Vision Language) architecture, which integrates text, image and video processing into a single interface. "Imagine describing your vision in plain English and watching it come to life," explains a Kling AI product director. "That's the simplicity we're bringing to professional-grade video creation."

The model introduces Chain-of-Thought reasoning - essentially teaching the AI to 'think through' creative decisions step by step. This approach helps maintain consistency when handling complex scenes with multiple subjects.

Solving Industry Pain Points

One persistent challenge in AI video generation has been 'feature drift', where characters or objects change unnaturally between shots. Kling AI claims its multi-viewpoint subject construction technology solves this problem by locking onto a subject's key visual characteristics.
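
To make the idea of feature drift concrete, here is a minimal sketch of one way it could be measured. It is not Kling AI's method: the feature vectors are hypothetical stand-ins for whatever visual descriptor a system extracts for a subject in each shot, and the function names are invented for illustration. Drift is scored as the cosine distance between each shot and the first (reference) shot.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def feature_drift(shot_features):
    """Cosine distance of every shot's features from the first shot's features."""
    reference = shot_features[0]
    return [1.0 - cosine_similarity(reference, f) for f in shot_features]

# Hypothetical descriptors for one character across three shots (assumed data).
shots = [
    [1.0, 0.0, 0.0],  # reference shot
    [1.0, 0.0, 0.0],  # same appearance: no drift
    [0.0, 1.0, 0.0],  # appearance changed completely
]

drift = feature_drift(shots)
print(drift)
```

A score near 0 means the subject looks the same as in the reference shot; a score that climbs across shots is the kind of inconsistency the article calls feature drift.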

"It's like having an invisible cinematographer," says the product director. "The system understands spatial relationships and maintains visual continuity automatically."

Accessibility Meets Professional Needs

Currently available through the Kling AI app and Kling AI's website, O1 supports:

- 3-10 second video generation (free)

- Text-to-video conversion

- Image-to-video transformation

- Local editing capabilities

- Shot extension features

The company plans to release API access soon, potentially integrating this technology into popular creative platforms. While analysts applaud the lowered barriers to entry, some question whether quality can scale affordably.

"Every technological leap faces skepticism," counters a Kling spokesperson. "We're confident creators will be pleasantly surprised by what they can achieve."

The O1 model is now live for testing - will it redefine how we think about AI-assisted video production? Early adopters may hold the answer.

Key Points:

- Single-prompt operation: Generate videos from text descriptions without switching interfaces

- Consistency: Kling AI says its multi-viewpoint subject construction prevents 'feature drift' between shots

- Current applications: Ideal for short-form content creators and marketing teams

- Future expansion: API integration coming soon for broader platform compatibility