DeepSeek API Now Handles Million-Token Conversations Like a Human

DeepSeek Levels Up: Now Remembering Million-Token Conversations

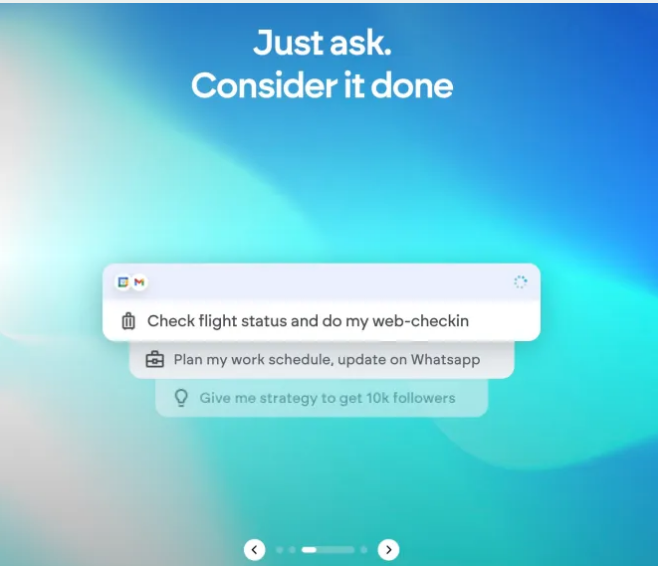

Imagine chatting with an AI that doesn't just respond to your last message, but remembers the entire conversation like a human would. That's exactly what DeepSeek's latest API update delivers, expanding its context window from 128k tokens to a staggering 1 million tokens.

What This Means for Users

The upgrade transforms how we interact with AI assistants. Where previous versions might lose track in lengthy discussions, the new capacity allows for:

- Extended coherence in complex conversations

- Richer contextual understanding across documents

- More natural flow resembling human dialogue patterns
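To make the jump concrete, here is a minimal sketch of checking whether a conversation history fits in the old and new windows. It is an illustration only: the figure of four characters per token is a rough assumption for English text, not a DeepSeek number, and the helper names `estimate_tokens` and `fits` are hypothetical. A real tokenizer would produce different counts.

```python
# Rough sketch: does a conversation history fit in a given context window?
# Assumption: ~4 characters per token is a crude heuristic, not a DeepSeek figure.

OLD_WINDOW = 128_000    # tokens, as stated in the article
NEW_WINDOW = 1_000_000  # tokens, as stated in the article
CHARS_PER_TOKEN = 4     # hypothetical average used only for estimation


def estimate_tokens(text: str) -> int:
    """Estimate a token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits(messages: list[str], window: int) -> bool:
    """Return True if the estimated total token count is within the window."""
    return sum(estimate_tokens(m) for m in messages) <= window


# Example: 300 messages of 2,000 characters each.
history = ["x" * 2_000] * 300
total = sum(estimate_tokens(m) for m in history)

print(total)                      # 150000 estimated tokens
print(fits(history, OLD_WINDOW))  # False: exceeds 128k
print(fits(history, NEW_WINDOW))  # True: well within 1 million
print(NEW_WINDOW / OLD_WINDOW)    # 7.8125, the size of the jump
```

Under these assumptions, a history of this size would have to be truncated or summarized for the old window but could be sent whole to the new one.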

"This isn't just about bigger numbers," explains tech analyst Mark Chen. "It's about creating AI that understands context the way people do - remembering not just what you said last, but how the entire conversation fits together."

Knowledge Gets a Refresh Too

Alongside the memory boost, DeepSeek's knowledge base received a significant update, now covering events through May 2025. This means even without internet access, users can get accurate information on:

- Recent scientific breakthroughs

- Current events through May 2025

- Evolving cultural and technological trends

The combination of expanded memory and updated knowledge creates what developers are calling "the most context-aware AI assistant currently available."

Current Limitations and Future Plans

The system still has some boundaries worth noting:

- Text and voice only - no image recognition yet

- Specialized queries may still require internet access for very recent information

But change is coming fast. Founder Liang Wenfeng hinted at more developments:

- DeepSeek V4 model launching late April

- New expert mode for complex problem-solving

- Expanded capabilities across professional domains

"We're not just building a better chatbot," Liang noted in a recent interview. "We're creating AI collaborators that can genuinely assist with substantive work."

Key Points

- Nearly 8x capacity jump from 128k to 1 million tokens

- Knowledge current through May 2025 for offline use

- Text/voice only - visual input not supported yet

- V4 model coming soon with additional features

- Expert mode planned for complex problem-solving