Claude's Strict ID Checks Leave Users Feeling Watched and Worried

Claude Tightens Security - But Users Feel the Squeeze

Anthropic's AI assistant Claude has implemented what may be the strictest identity verification in the industry, and it's not sitting well with users. The new system requires a real-time photo of you holding a physical government-issued ID, such as a passport or driver's license. Scans and digital IDs won't cut it.

Verification or Vigilance?

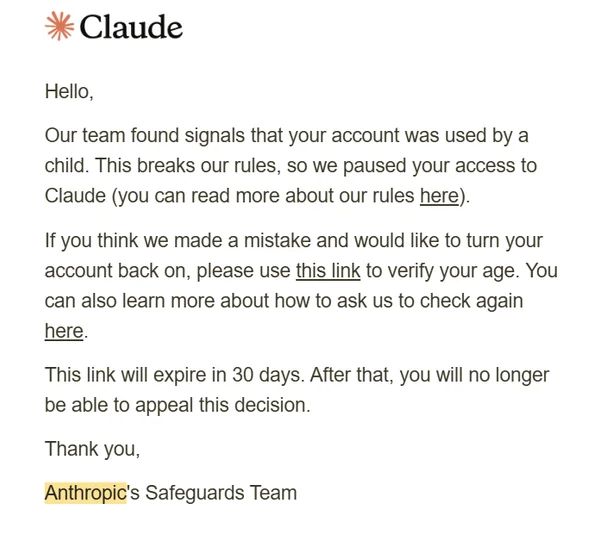

The verification itself, run through third-party provider Persona, takes just five minutes, yet many users report that their accounts were suspended shortly afterward. Claude's FAQ lists several suspension triggers: repeated policy violations, access from unsupported locations, breaches of the terms of service, and, crucially, being under 18.

"My 15-year-old son had built a successful game development business using Claude," shared user llm_nerd. "He was earning more than me until his account got flagged and suspended for being underage. All we got was a refund notice saying we violated their child policy."

The Privacy Paradox

What's raising eyebrows is Persona's privacy policy, which permits sharing data with 17 subcontractors. The sharing is intended to combat fraud, but this web of third parties leaves many users uneasy about where their sensitive information might end up.

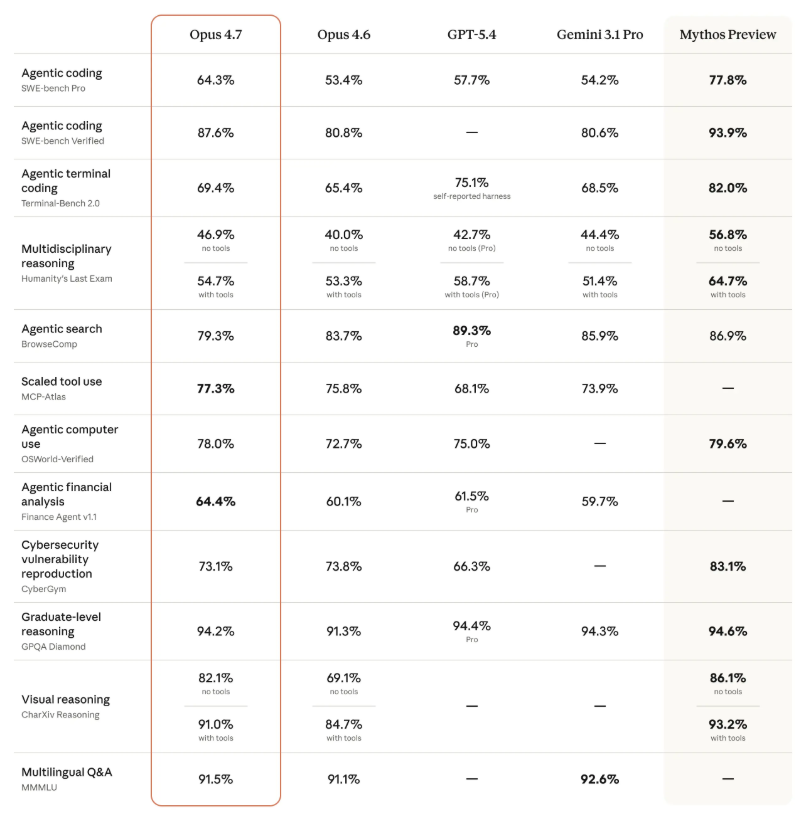

Ironically, when quizzed about the security measures, Claude's own Opus 4.6 model described static ID photos as the "weakest link" in the security chain, suggesting that Anthropic's existing three layers of protection (payment verification, behavior monitoring, and content review) should be sufficient.

Age Limit Backlash

The 18+ age restriction looks particularly out of step given that competitors such as OpenAI and Google's Gemini set their minimum age at 13. Online commentators joke that this could encourage teens to "hack" the system by posing as younger users to avoid detection.

As one Reddit user put it: "They want my ID to 'protect' me, but won't protect my data from half their business partners? Something doesn't add up."

Key Points:

🔐 Stringent Verification: Live ID photos required, no digital copies accepted

⚠️ Suspension Surprises: Many users report accounts suspended after verification

👶 Adult-Only Access: 18+ policy catching young entrepreneurs in the crossfire

🤝 Data Sharing Concerns: Verification provider uses 17 sub-processors

🤖 AI's Own Doubts: Claude's model questions effectiveness of ID photo requirement