Claude's ID Check Policy Stirs Privacy Fears and Account Suspension Concerns

Claude's Strict ID Verification Rattles Users

Anthropic, the company behind AI assistant Claude, has rolled out a controversial new identity verification system that's leaving many users uneasy. The policy requires people to submit a real-time photo of themselves holding a physical government ID; digital copies and scans are not accepted. The verification process, handled by third-party company Persona, takes about five minutes but has become a flashpoint for privacy concerns.

Verification or Suspension Trap?

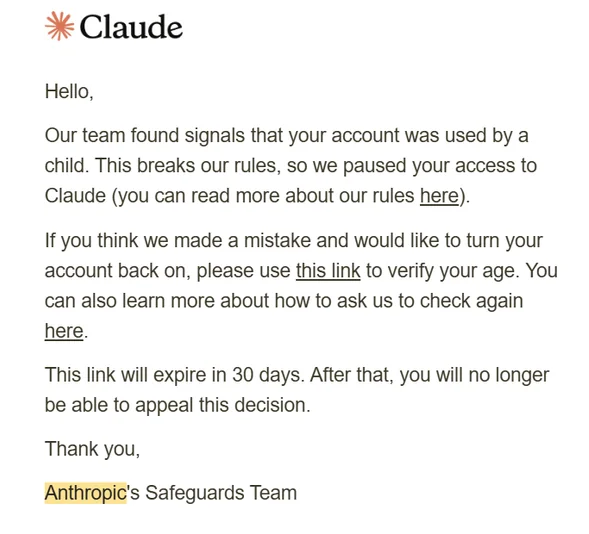

What started as a routine security measure has some users calling it a 'gotcha' system. Multiple subscribers report having their accounts suspended shortly after completing verification. Claude's FAQ lists potential suspension reasons including policy violations, access from unsupported locations, terms of service breaches, and, perhaps most contentious, being under 18 years old.

One user shared how their 15-year-old son, a game developer who earns more than his father through Claude projects, suddenly lost access. "We detected your account was used by a child," read the refund notice from Anthropic. The case highlights how the 18+ age restriction, which is higher than competitors' 13+ policies, is catching legitimate young users in the net.

Data Sharing Concerns Multiply

The privacy policy from Persona reveals verified ID data may be shared with 17 sub-processors to "improve anti-fraud systems." This broad data-sharing approach has security experts questioning whether the verification actually protects users or creates new vulnerabilities.

Ironically, when asked about the policy, Claude itself called static ID photos the "weakest link" in security, noting that the company already uses credit card verification, behavior monitoring, and content review systems. The disconnect between what the AI says and what the company implements hasn't gone unnoticed by frustrated users.

Key Points:

🛡️ Strict Verification: Claude now demands live photos with physical IDs, rejecting digital copies

⚠️ Unexpected Bans: Accounts face suspension post-verification, including those of minors using Claude for legitimate work

🔒 Privacy Questions: Verified ID data may be shared with 17 sub-processors to improve anti-fraud systems

🎯 Age Debate: At 18+, Claude's minimum age exceeds competitors' 13+ policies

🤖 AI's Own Doubts: Claude's model questions the effectiveness of ID photo verification