China Targets False Info in Self-Media with AI Labeling Rules

China Cracks Down on Self-Media Misinformation with New AI Regulations

Beijing, July 29, 2025 — The Cyberspace Administration of China (CAC) has initiated a nationwide campaign to address the growing problem of false information disseminated through self-media platforms. The two-month special operation, which began July 24, represents one of the most comprehensive efforts to date to regulate digital content creation and distribution.

Campaign Scope and Objectives

The campaign will be implemented across all provincial-level administrative regions, as well as the Xinjiang Production and Construction Corps. Authorities aim to tackle misinformation through a dual approach combining technological governance measures with enhanced platform accountability.

Four Key Focus Areas

- Hot Topic Exploitation: Targeting accounts that impersonate individuals involved in trending events or fabricate "authoritative data" in sensitive fields like finance and military affairs.

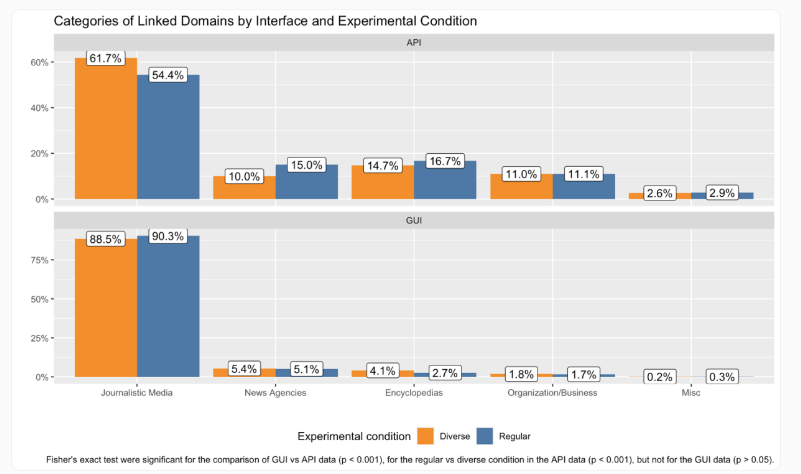

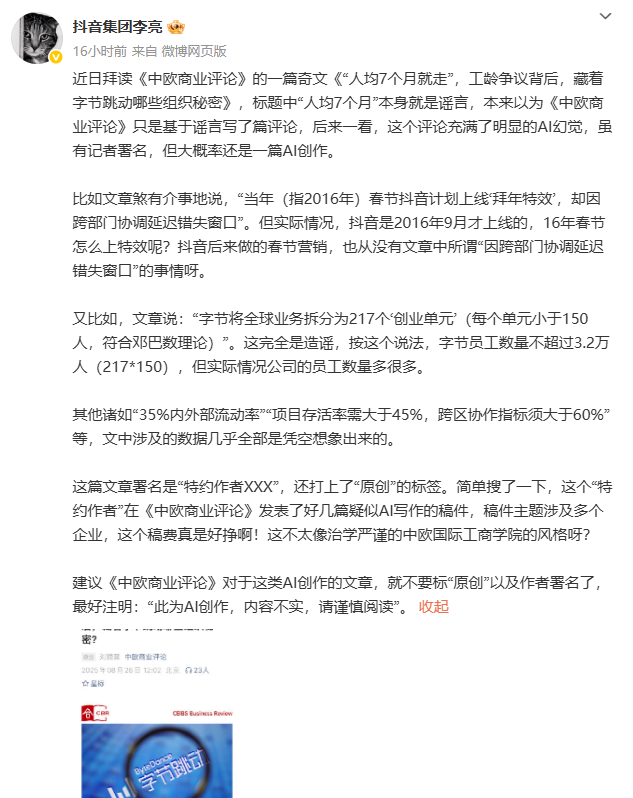

- Data Fraud Techniques: Addressing AI-synthesized fake news, footage edited to create false narratives, and manipulation of trending lists through artificial engagement.

- Labeling Violations: Combating accounts that spread unverified content while obscuring its source or that use matrix accounts to conceal its origin.

- False Expertise: Cracking down on unqualified individuals who pose as specialists to promote unconventional theories or who fabricate influencer personas for commercial gain.

Platform Requirements

The CAC has mandated three critical mechanisms for all digital platforms:

- Mandatory source labeling for all content entering recommendation algorithms

- Enhanced verification of professional credentials tied to account activities

- Streamlined reporting systems with graduated penalties ranging from guidance for first-time offenders to permanent bans for malicious actors

Platforms must also strengthen their negative list management and revenue permission systems. Those failing to comply face significant legal consequences.

Long-Term Strategy

A CAC official emphasized the campaign's "treating both symptoms and root causes" approach:

"Through technical measures like improved AI detection and by reinforcing platform responsibilities, we aim to establish sustainable industry standards that prioritize authenticity and professionalism in digital content creation."

The initiative will work in tandem with ongoing supervision efforts while developing a credit evaluation system for self-media operators.

Key Points:

- Two-month national campaign against self-media misinformation began July 24

- Four primary violation categories identified for enforcement action

- Platforms required to implement new verification and labeling systems

- Combination of technical solutions and regulatory oversight planned

- Long-term goal of establishing industry-wide credibility standards