AI Pioneer Warns: The Party's Over for Bigger, Faster Models

The Crossroads of Artificial Intelligence

When Ilya Sutskever speaks about artificial intelligence, the tech world listens. In a recent three-thousand-word interview, the OpenAI co-founder and former chief scientist, who now leads Safe Superintelligence, delivered what amounts to a reality check for the AI industry.

Scaling Hits Its Limits

The golden era of throwing more computing power at larger datasets might be ending. "From 2012 through 2020 was our rapid research phase," Sutskever observes. "Then came expansion at scale - bigger models, more parameters." But now? "We're seeing less bang for our compute buck."

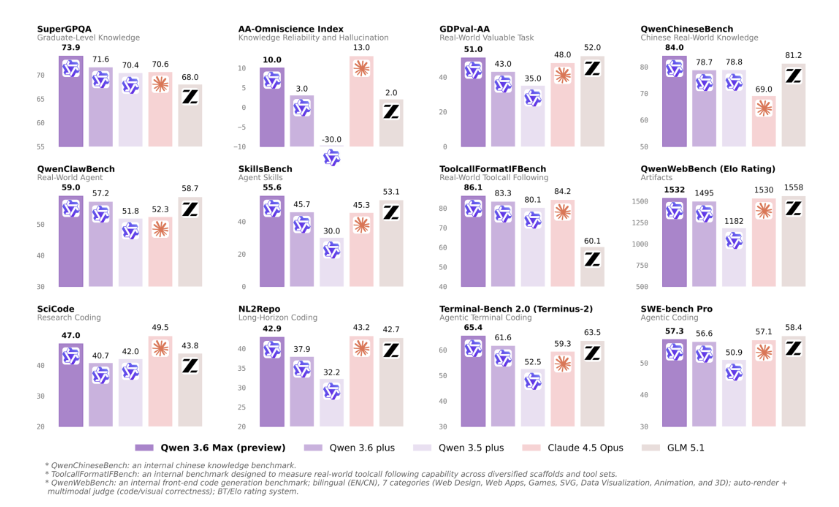

It's an inconvenient truth many researchers whisper about privately but few state publicly: simply making models larger no longer yields proportional improvements. The line between productive scaling and computational waste has blurred, a sign that the field has reached what economists call diminishing marginal returns.

The Generalization Gap

Here's where Sutskever offers his most vivid critique: current AI models resemble programming competition champions - brilliant within narrowly defined tasks but surprisingly clumsy when faced with messy real-world problems.

"They ace standardized evaluations," he notes, "then fumble practical applications." Why? In this account, reinforcement learning trains models on constrained setups that don't reflect reality's complexity. It's like preparing for a driving test in an empty parking lot - you'll pass the exam but struggle in city traffic.

Emotional Intelligence?

The most provocative suggestion involves emotions - typically considered humanity's least "computational" aspect. Sutskever proposes emotions evolved as decision-making shortcuts that balance competing priorities efficiently.

"Future AI systems," he speculates, "might need emotional analogues to navigate tradeoffs realistically." It's a radical departure from the purely rational architectures that dominate current designs.

Industry Echoes

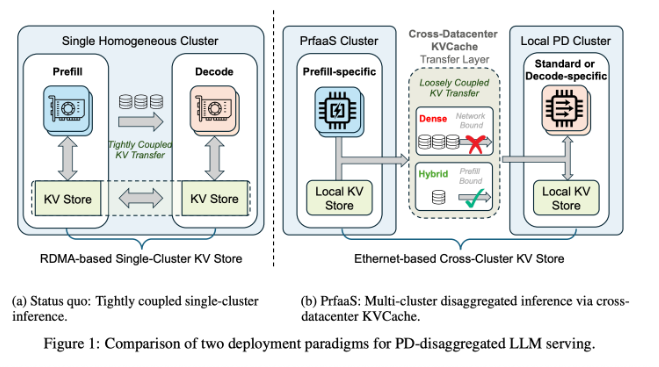

Sutskever isn't alone in questioning AI's trajectory. Turing Award laureate Yann LeCun famously called large language models a potential dead end on the path to true intelligence. His alternative? Developing "world models" that simulate environments internally before acting - closer to how biological intelligence operates.

The message resonates across research circles: we've squeezed about all we can from current paradigms. The next breakthroughs will require revisiting foundational assumptions rather than simply launching bigger training runs.

Key Points:

- Diminishing returns from model scaling demand new approaches

- Current AI excels in narrow evaluations but struggles with generalization

- Emotion-inspired architectures might improve decision-making

- Leaders advocate shifting focus to fundamental research

- "World models" may offer better paths than pure language approaches