Tongyi Lab's Qwen3.6-Plus Brings Stability to AI Programming

Just two months after launching its Qwen3.5 series, Tongyi Lab is back with another release. The new Qwen3.6-Plus aims to solve one of developers' biggest frustrations: unreliable task execution in agent programming. It is available now through the Aliyun BaiLian API, and it could change how we think about AI-assisted coding.

What Makes Qwen3.6-Plus Special?

The core of this update is how it combines deep logical reasoning with a very large context window and precise execution. Imagine an AI assistant that doesn't just understand your code but also remembers the context and follows through reliably. That's what Tongyi is promising here.

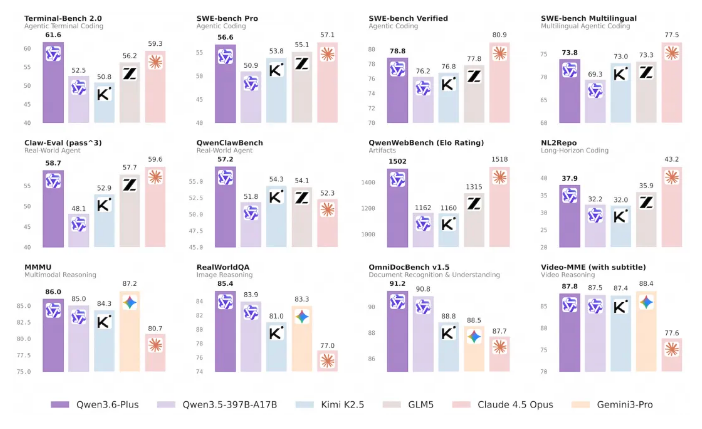

Coding Gets a Major Boost

Front-end page generation, code repair, and terminal automation all get easier. Tongyi claims Qwen3.6-Plus is the first Chinese-developed model of its size to lead in agent programming while keeping costs down.

Remembering More Than Ever

With default support for 1 million characters of context, this model can handle long documents and complex conversations with surprising accuracy. No more losing track of important details halfway through a project.

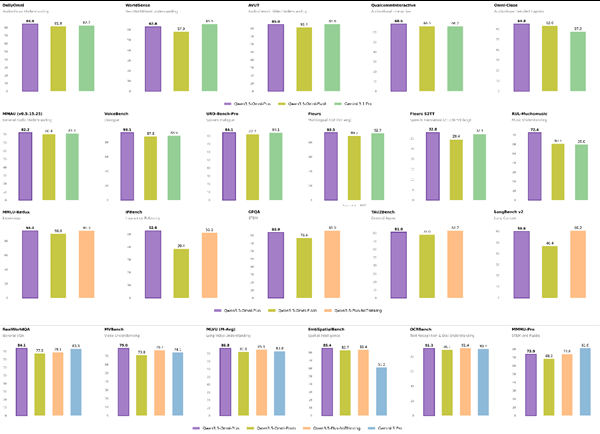

Smaller Size, Bigger Performance

Here's something that might surprise you: Qwen3.6-Plus is less than half the size of competitors like K2.5 or GLM5, yet it keeps pace with industry benchmarks in engineering implementation.

Plays Well With Others

Tongyi knows developers don't work in isolation, so they've made sure Qwen3.6-Plus fits right into existing workflows:

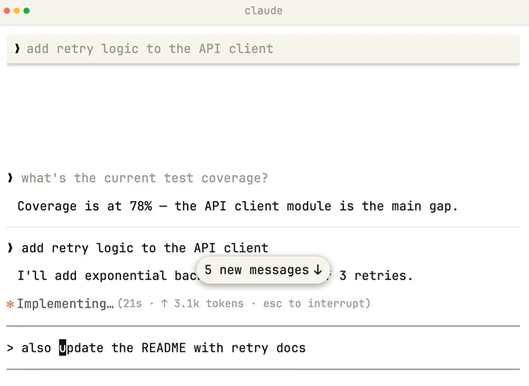

- OpenClaw (the rebranded Moltbot) now offers a complete agent coding experience in your terminal with minimal setup

- Qwen Code, optimized specifically for the Qwen series, tackles complex codebases and automated tasks

- For those using Claude Code, there's good news: the Qwen API now supports the Anthropic API protocol too
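To make the Anthropic-protocol point concrete, here is a minimal sketch of what an Anthropic Messages-style request could look like when aimed at a compatible endpoint. The header names and body shape follow Anthropic's public Messages API. The base URL, the `/v1/messages` path on the Qwen side, and the model id `qwen3.6-plus` are placeholders assumed for illustration, not values given in the announcement. The code only builds the request and never sends it.

```python
import json
import urllib.request

# Assumptions (not from the article): the endpoint URL and the model id are
# placeholders. Substitute the values from the provider's documentation.
BASE_URL = "https://example.invalid/anthropic"  # hypothetical endpoint
MODEL_ID = "qwen3.6-plus"                        # hypothetical model id


def build_messages_request(prompt, api_key, base_url=BASE_URL, model=MODEL_ID):
    """Build (but do not send) an Anthropic Messages API style request."""
    body = {
        "model": model,
        "max_tokens": 1024,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "content-type": "application/json",
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_messages_request("Fix the failing unit test in utils.py", "YOUR_API_KEY")
    print(req.get_method(), req.full_url)
    print(json.loads(req.data)["messages"][0])
```

Because the request shape is the same one Anthropic-protocol clients already produce, switching a tool over should in principle come down to changing the base URL, key, and model name, though the exact settings depend on the tool.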

Seeing Is Believing (And Now Executing)

The multimodal capabilities might be the most exciting part. Qwen3.6-Plus doesn't just see images - it acts on them. Need to calculate winnings from multiple scratch cards? It can do that from visual input alone. Got a design draft? Watch as it generates front-end code automatically.

This "visual agent" capability represents a major step toward AI systems that can continuously perceive and interact with real environments.

For developers working on complex projects, the new preserve_thinking feature could be especially useful. It retains the model's previous chains of thought, which helps with tasks that require long-term planning.
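The idea behind preserve_thinking can be illustrated with a purely conceptual sketch. This is not the Qwen API: the message layout, the `thinking` field, and the `build_history` helper are all hypothetical, chosen only to show the difference between dropping and keeping earlier reasoning when assembling the history for the next step.

```python
from typing import Dict, List

Message = Dict[str, str]


def build_history(turns: List[Message], preserve_thinking: bool) -> List[Message]:
    """Return the message history to carry into the next step.

    Each assistant turn may hold a hypothetical "thinking" field with its
    reasoning. With preserve_thinking=False that field is dropped from
    earlier turns; with True it is kept so later steps can build on it.
    """
    history = []
    for turn in turns:
        kept = dict(turn)  # copy so the caller's data is not modified
        if not preserve_thinking:
            kept.pop("thinking", None)
        history.append(kept)
    return history


if __name__ == "__main__":
    turns = [
        {"role": "user", "content": "Plan the refactor."},
        {"role": "assistant", "content": "Step 1: split the module.",
         "thinking": "The module mixes I/O and parsing, so split it first."},
    ]
    print(build_history(turns, preserve_thinking=False)[1])
    print(build_history(turns, preserve_thinking=True)[1])
```

In a multi-step agent task, keeping the earlier reasoning means a later step can see why an earlier decision was made, not just what was decided.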

Tongyi hints this is just the beginning, with more versions of the Qwen3.6 series coming soon, including high-performance and lightweight open-source options.

Key Points:

- Reliable execution addresses a major pain point in agent programming

- 1 million character context improves long document handling

- Less than half the size of competitors such as K2.5 and GLM5 while keeping pace on benchmarks

- Visual agent capabilities bridge perception and action

- Seamless integration with popular developer tools