Tencent's Hunyuan 3.0 AI Model Shows Major Programming Leap

Tencent Takes AI Programming to New Heights with Hunyuan 3.0

Tencent has just rolled out its most advanced AI model yet, Hunyuan 3.0 (Hy3), marking a significant milestone in the company's artificial intelligence development. What makes this release particularly exciting? A dramatic leap in programming capabilities that could reshape how developers interact with AI tools.

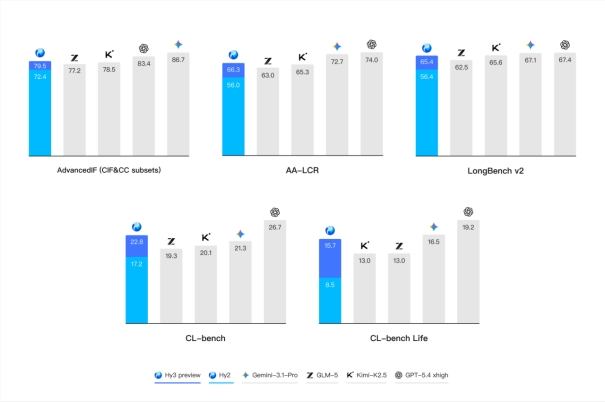

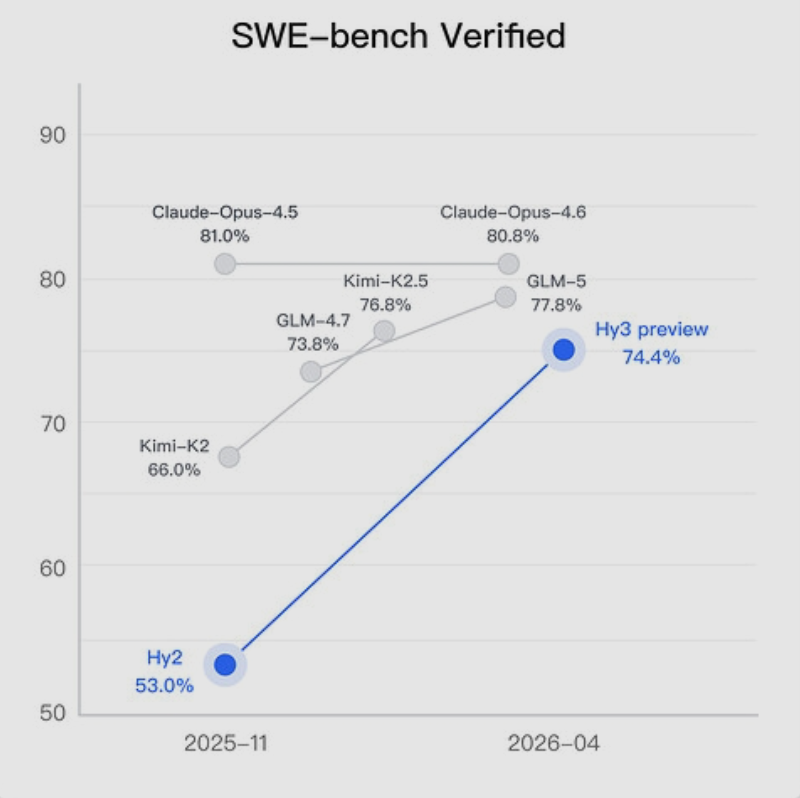

From 53% to 74%: The Programming Power Surge

The numbers tell an impressive story. In standardized SWE-Bench programming tests, Hunyuan 3.0 scored 74.4%, a massive jump from its predecessor's 53%. That's a gain of 21.4 percentage points, or roughly 40% in relative terms: the kind of progress that gets developers' attention.
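For readers who want to check the math, here is a minimal sketch of how the two ways of stating the gain differ. The function name `benchmark_gain` is made up for illustration; the only inputs are the two scores quoted above.

```python
def benchmark_gain(old_score: float, new_score: float) -> tuple[float, float]:
    """Return (gain in percentage points, relative gain in percent)."""
    absolute_points = new_score - old_score
    relative_percent = absolute_points / old_score * 100
    return absolute_points, relative_percent


# Scores quoted in the article: 53% for the predecessor, 74.4% for Hy3.
points, relative = benchmark_gain(53.0, 74.4)
print(f"{points:.1f} percentage points, {relative:.1f}% relative improvement")
# prints: 21.4 percentage points, 40.4% relative improvement
```

The "40%" headline figure is the relative number; the score itself rose by 21.4 points.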

This boost puts Hy3 within striking distance of industry leaders like GLM-4.7, though it still trails the cutting-edge GLM-5 model. For Chinese-developed AI, these results represent some of the strongest programming capabilities available today.

Under the Hood: What Makes Hy3 Special?

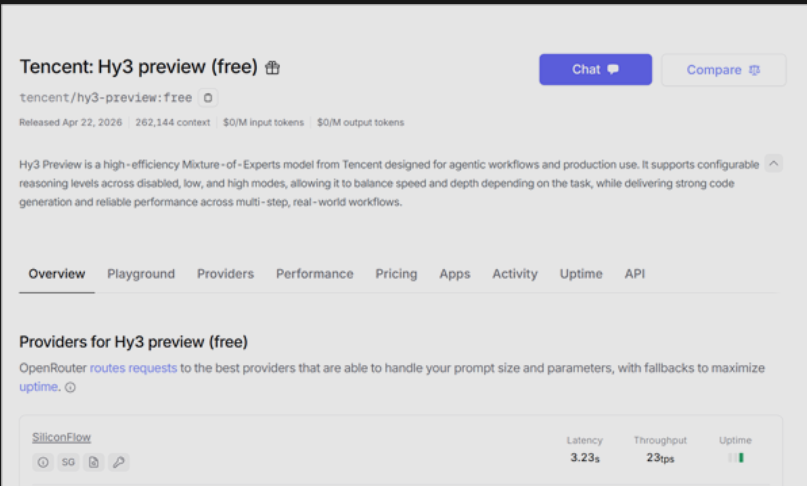

Tencent engineers built Hunyuan 3.0 using a MoE (Mixture of Experts) architecture, a design that routes each token through only a subset of the model's expert sub-networks rather than the full model, which makes processing more efficient. The model can handle context windows of up to 262K tokens while maintaining an output rate of 23 tokens per second.
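To put the 23 tokens per second figure in practical terms, the rough sketch below estimates how long a response of a given length would take to stream. It assumes the rate stays constant and ignores prompt processing and time to first token; the helper name `generation_seconds` and the sample response lengths are illustrative, not figures from Tencent.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float = 23.0) -> float:
    """Estimate streaming time, assuming a constant output rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Illustrative response lengths (assumptions, not benchmark data).
for tokens in (500, 2000, 8000):
    print(f"{tokens} tokens: about {generation_seconds(tokens):.1f} s")
# prints:
# 500 tokens: about 21.7 s
# 2000 tokens: about 87.0 s
# 8000 tokens: about 347.8 s
```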

"The multimodal enhancements really change how the model handles complex tasks," explains one early tester who requested anonymity due to company policies. "It's not just about raw speed - there's better understanding and more nuanced responses."

The Talent Behind the Tech

The development team received a significant boost with the addition of AI specialist Yao Shunyu, poached from another major tech firm (rumored to be Alibaba). Industry watchers credit Yao's expertise with helping accelerate Tencent's AI progress.

Joining the AI Arms Race

Tencent's timing couldn't be more strategic. As competitors like DeepSeek roll out their V4 models and OpenAI continues pushing boundaries, Hunyuan 3.0 represents Tencent's bid to stay relevant in an increasingly crowded field.

The company is currently offering free access to Hy3preview on OpenRouter, giving developers a chance to test-drive these new capabilities firsthand.
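For developers who want to try the preview, a request might look something like the sketch below. It uses OpenRouter's OpenAI-compatible chat completions endpoint. The exact model ID for Hy3preview is not given here, so `MODEL_ID` is a placeholder to be replaced with the ID listed on OpenRouter, and `build_chat_request` is a hypothetical helper name.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Placeholder only: the article names the preview "Hy3preview" but does not
# give the exact model ID. Look it up on OpenRouter before sending anything.
MODEL_ID = "REPLACE_WITH_HY3_PREVIEW_MODEL_ID"


def build_chat_request(prompt: str, api_key: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for OpenRouter."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` with a valid API key sends the request; the reply is JSON in the OpenAI chat format, with the generated text under `choices[0].message.content`.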

Key Points:

- Roughly 40% relative improvement in programming tests over the previous version

- 74.4% score on SWE-Bench benchmark (vs previous 53%)

- MoE architecture enables efficient processing of large contexts (up to 262K tokens)

- 23 tokens/s output speed balances responsiveness with quality

- Free preview available as Hy3preview on OpenRouter