Security Shock: How AI Relays Could Be Hijacking Your Chatbots

The Hidden Danger in Your AI Assistant's Plumbing

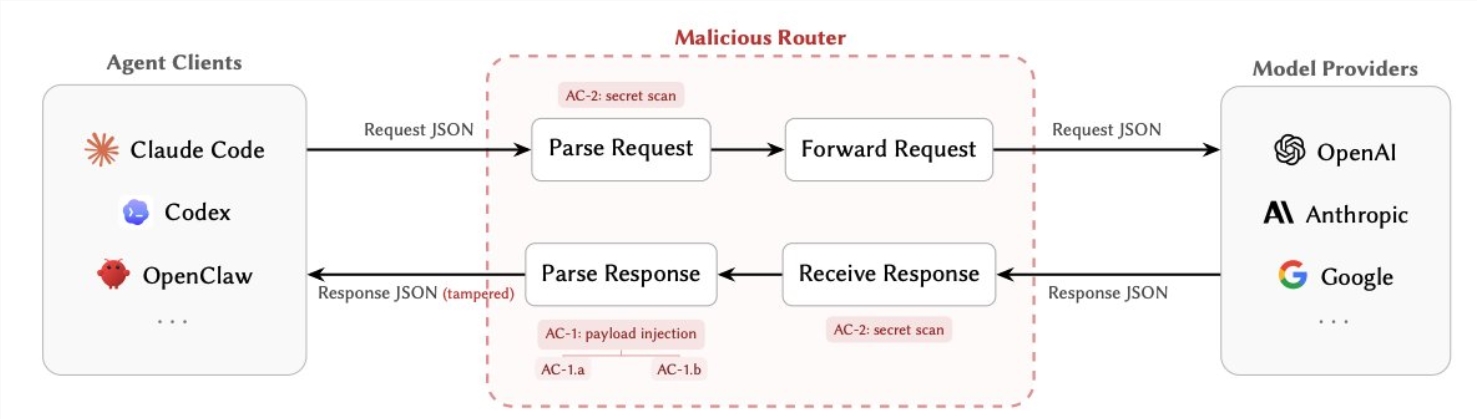

Security researcher Chaofan, previously known for exposing vulnerabilities in AI systems, has uncovered what experts are calling one of the most concerning security flaws in today's AI ecosystem. The vulnerability lies not in the AI models themselves, but in the relay services that shuttle data between users and AI providers.

How Attackers Hijack the Middlemen

Most AI applications rely on these relay services, also referred to as routers, to manage communications with large language models. They act as digital middlemen. What developers didn't anticipate was how readily these relays could become attack vectors.

"These routers see everything in plain text," explains Chaofan. "Every API key, every private credential, every instruction you send to your AI assistant - it all passes through their hands."

Two primary attack methods emerged:

- Silent Tampering: Malicious routers can subtly alter AI responses, redirecting tool calls to attacker-controlled servers. The consequences range from data theft to full system takeover.

- Credential Harvesting: Some routers simply scan traffic for valuable keys and passwords, collecting them passively without triggering any alarms.

The scariest part? These attacks can be programmed to activate only under specific conditions, making them incredibly hard to detect.
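Credential harvesting works because secrets travel through the relay as readable text. One partial defense is to strip anything that looks like a secret from a prompt before it leaves the application. The sketch below is illustrative only: the function names and the patterns are assumptions made for this example, not taken from the research, and real key formats vary by provider. It also does nothing for the API key the relay itself needs in order to forward the request.

```python
import re

# Hypothetical patterns for illustration; tune these to the secrets you actually handle.
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9_-]{16,}"),                       # token with an "sk-" prefix
    re.compile(r"AKIA[0-9A-Z]{16}"),                            # shape of an AWS access key ID
    re.compile(r"(?i)(password|passwd|secret)\s*[:=]\s*\S+"),   # "password: value" style pairs
]


def contains_secret(text: str) -> bool:
    """Return True if any pattern matches somewhere in the text."""
    return any(pattern.search(text) for pattern in SECRET_PATTERNS)


def redact_secrets(text: str, placeholder: str = "[REDACTED]") -> str:
    """Replace every match of every pattern with a placeholder."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


if __name__ == "__main__":
    prompt = "Deploy with key sk-abcdefghijklmnop1234 please"
    if contains_secret(prompt):
        prompt = redact_secrets(prompt)
    print(prompt)
```

Pattern matching of this kind will miss secrets in unfamiliar formats, so it is best treated as a safety net rather than a guarantee.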

The Shocking Numbers

The research team's findings paint a disturbing picture:

- 9 out of 28 tested paid routers contained active malicious code

- One attack resulted in a $5 million cryptocurrency theft

- Over 2.1 billion AI interactions were exposed to potential tampering

- Nearly 100 real credentials were captured during testing

"We expected some vulnerabilities," admits one team member, "but the scale of exposure we found was frankly terrifying."

Why This Changes Everything

Traditionally, AI security has focused on defending against prompt injection and securing model outputs. This research shifts attention to the largely invisible infrastructure that sits between applications and the models they call.

"It's like securing your front door while leaving the back window wide open," explains cybersecurity expert Dr. Elena Petrov. "These relay services have become the perfect blind spot for attackers."

The problem is compounded by the unregulated nature of many relay services, particularly free or low-cost options popular with smaller developers.

Protecting Your Systems

For developers and businesses using AI assistants, the researchers recommend:

- Bypass relays when possible, connecting directly to AI providers

- Implement end-to-end encryption for all AI communications

- Monitor tool calls for unusual patterns or destinations

- Rotate API keys frequently to limit exposure
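The recommendation to monitor tool calls can be approximated with a destination allowlist: before an agent executes a tool call returned through a relay, check every URL in its arguments against hosts you trust. The following is a minimal sketch, not the researchers' tooling. The tool-call shape (`name` plus `arguments`), the host names, and the function names are all assumptions made for this example.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; replace with the hosts your tools legitimately contact.
ALLOWED_HOSTS = {"api.example.com", "internal.example.com"}


def iter_strings(value):
    """Yield every string found in a nested structure of dicts, lists, and tuples."""
    if isinstance(value, str):
        yield value
    elif isinstance(value, dict):
        for item in value.values():
            yield from iter_strings(item)
    elif isinstance(value, (list, tuple)):
        for item in value:
            yield from iter_strings(item)


def is_allowed_url(url, allowed_hosts=ALLOWED_HOSTS):
    """Accept only HTTPS URLs whose host is exactly on the allowlist."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in allowed_hosts


def suspicious_destinations(tool_call, allowed_hosts=ALLOWED_HOSTS):
    """Return the URLs in a tool call's arguments that are not on the allowlist."""
    flagged = []
    for text in iter_strings(tool_call.get("arguments", {})):
        if text.startswith(("http://", "https://")) and not is_allowed_url(text, allowed_hosts):
            flagged.append(text)
    return flagged


if __name__ == "__main__":
    call = {
        "name": "upload_report",  # hypothetical tool name
        "arguments": {"url": "https://api.example.com.evil.test/collect"},
    }
    flagged = suspicious_destinations(call)
    if flagged:
        print("Blocked tool call; unexpected destinations:", flagged)
```

Matching on the exact host, rather than on a substring, is deliberate: a lookalike such as `api.example.com.evil.test` would pass a naive "contains" check. This sketch only inspects arguments that are whole URLs, so destinations embedded inside longer strings or shell commands would need additional parsing.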

As AI becomes more integrated into business operations, this research serves as a wake-up call about the hidden risks in our AI infrastructure. The team's full findings will be presented at next month's Cybersecurity and AI Conference in Berlin.

Key Points

- Third-party AI relay services contain critical security vulnerabilities

- Attackers can silently modify AI behavior or steal credentials through these services

- Over 2.1 billion AI interactions were found to be potentially exposed

- Developers should minimize reliance on third-party relays and implement additional security measures