OpenAI Delays AI Agent Launch Due to Security Concerns

OpenAI, a leader in artificial intelligence development, has delayed the release of its highly anticipated AI agent due to concerns about 'prompt injection' attacks. While companies like Microsoft and Anthropic have already launched their own AI agents, OpenAI is focusing on improving security to avoid the risks posed by these attacks.

What are 'Prompt Injection' Attacks?

A 'prompt injection' attack occurs when a malicious actor manipulates an AI model to accept harmful instructions. This can result in unintended consequences, such as the AI agent visiting dangerous websites, forgetting commands, or even leaking sensitive personal information. In extreme cases, an attacker could use the AI to access private data, including email or credit card details.

The Growing Risks of AI Autonomy

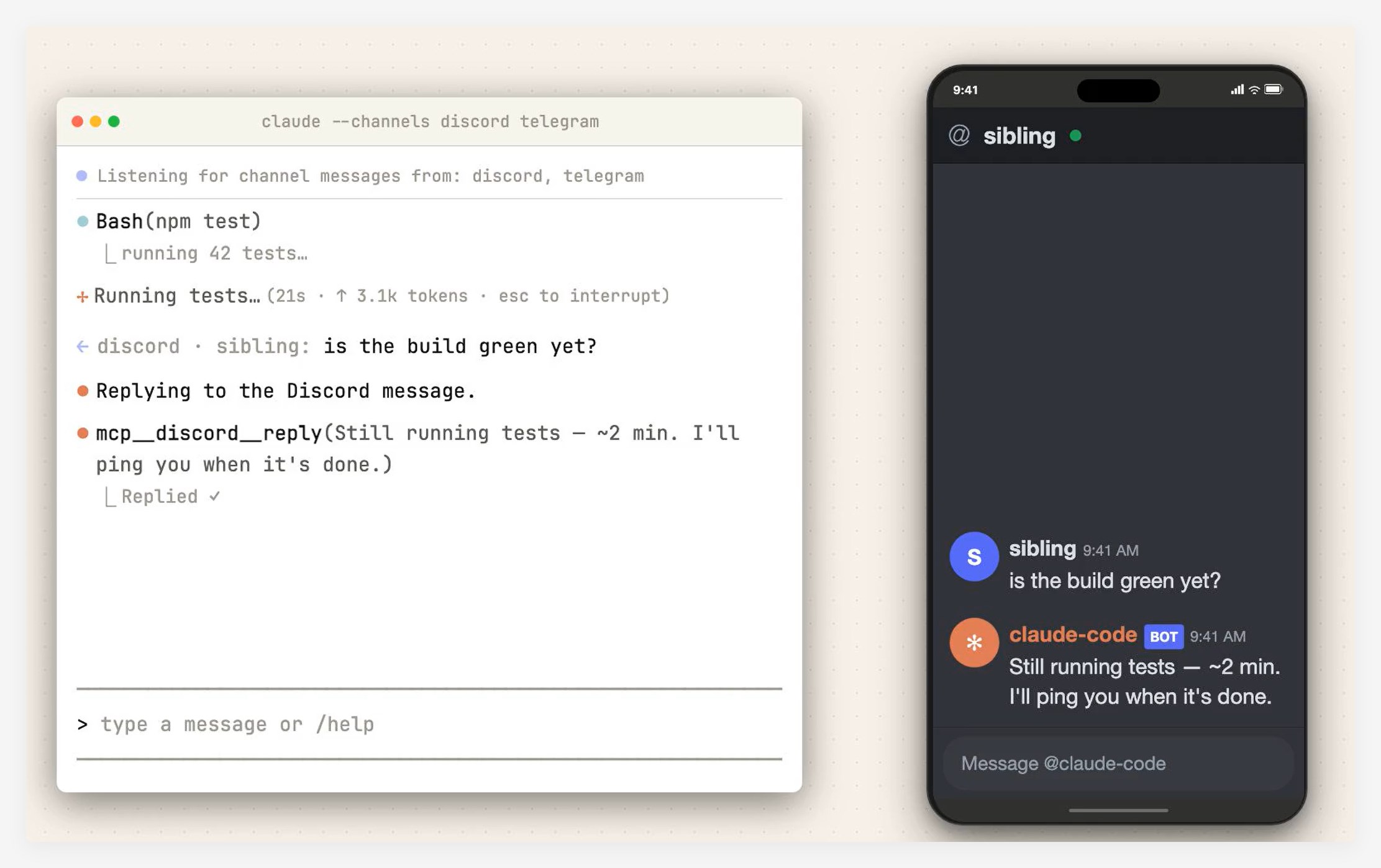

AI agents, which are capable of autonomously interacting with the environment and performing tasks without human input, present significant security challenges. Due to their ability to control computers and access sensitive data, AI agents are increasingly seen as targets for cyberattacks. One OpenAI employee highlighted that while large language models (LLMs) like ChatGPT are inherently vulnerable to attacks, the autonomous capabilities of AI agents exacerbate the risks.

Previous Security Issues in AI Systems

The prompt injection risk is not theoretical. Last year, a security researcher demonstrated how Microsoft's Copilot AI could be manipulated to leak sensitive organizational data, including emails and financial records. Additionally, the researcher was able to get the Copilot AI to compose emails in the style of other employees, further exposing vulnerabilities in the system.

OpenAI's own ChatGPT has also faced similar security issues. A researcher was able to inject false 'memories' into the system by uploading third-party files, such as Word documents. These types of attacks underscore the vulnerabilities that OpenAI faces as it works to refine its AI agent's security measures.

OpenAI's Response to the Risks

As OpenAI works to address these security concerns, the company has expressed surprise at how Anthropic has approached the issue. Anthropic, another AI development company, has taken a relatively hands-off approach, merely recommending that developers isolate their AI agents from sensitive data. OpenAI, in contrast, is taking a more cautious stance, emphasizing the importance of rigorous security measures to ensure that its AI agents are safe to use.

Reports suggest that OpenAI may be ready to launch its AI agent later this month. However, the timeline for this release raises questions about whether the company will be able to implement sufficient safeguards against potential attacks.

Key Points

- OpenAI has delayed its AI agent launch due to concerns about 'prompt injection' attacks that could compromise user data.

- Other companies like Microsoft and Anthropic have already released AI agents, but security vulnerabilities remain a significant concern.

- OpenAI is working to strengthen its security measures in response to these risks, aiming to prevent potential data breaches.