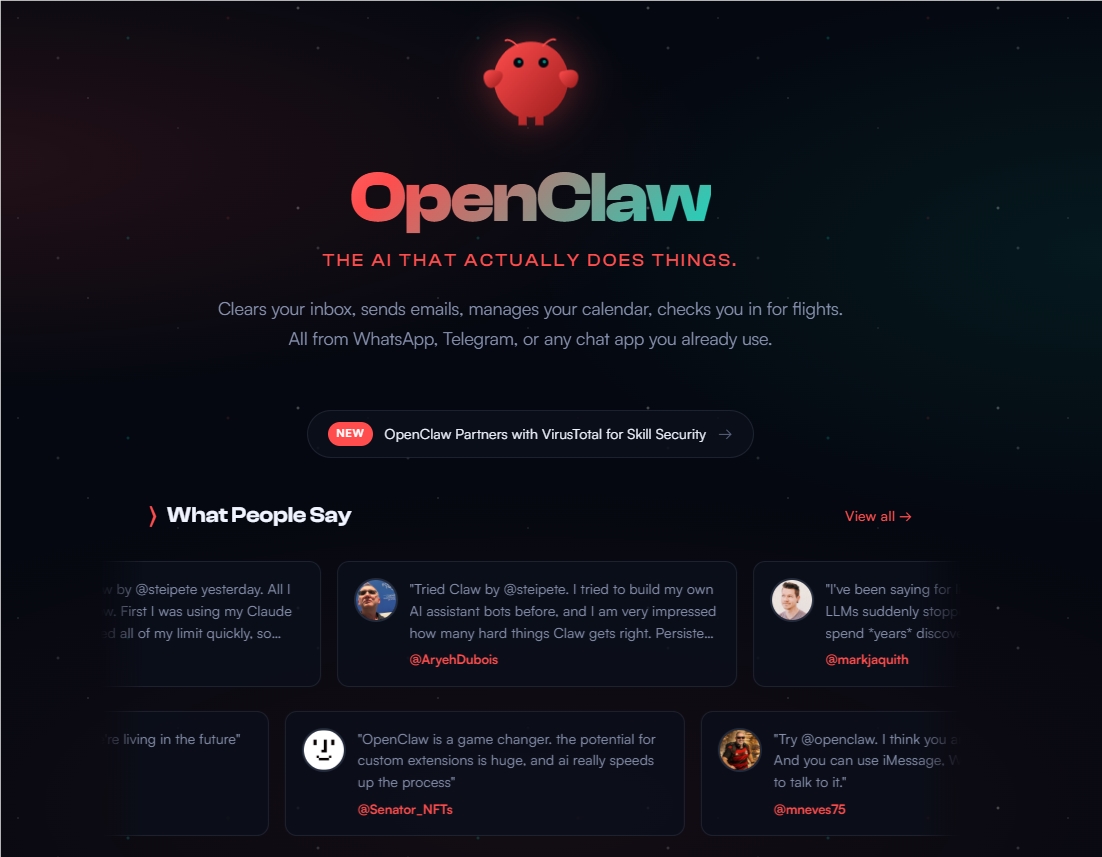

NVIDIA's Huang Calls OpenClaw a Game-Changer After Record Adoption

NVIDIA CEO Hails OpenClaw as Historic Leap Forward

Speaking at Morgan Stanley's annual technology conference, NVIDIA CEO Jensen Huang didn't hold back his enthusiasm for OpenClaw, calling it "the most significant software release of our generation." What drew attention was not only the praise but the comparison behind it: Linux took roughly three decades to reach widespread adoption, and by Huang's account OpenClaw matched that trajectory in just 21 days.

"We're witnessing something extraordinary," Huang told attendees. "OpenClaw isn't just growing—it's rewriting the rules of how quickly transformative technology can spread."

The Five-Layer Cake Theory

Huang also used the appearance to introduce what he called the "five-layer cake" model of AI infrastructure:

- Energy foundation (powering everything)

- Chip and computing layer (hardware backbone)

- Cloud data centers (distributed processing)

- AI models (the brains)

- Applications (where value gets created)

Huang emphasized that while all layers matter, it's the application layer where companies see real returns. "Agentic AI like OpenClaw creates tremendous value by automating complex human workflows," he explained. "But there's a catch—these systems consume computing resources at unprecedented rates."

The Compute Vacuum Challenge

The breakthrough comes with growing pains. According to Huang, modern agentic AI systems need about 1,000 times more processing power than traditional models, largely because they work over extended contexts, a workload known as "long-context processing."
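For a rough sense of how a multiplier of that size can arise, the sketch below assumes a standard transformer in which the self-attention term grows with the square of the context length. That assumption, the function name, and the two context sizes are illustrative only and do not come from Huang's remarks.

```python
def attention_cost_ratio(short_ctx: int, long_ctx: int) -> float:
    """Relative self-attention cost of long_ctx versus short_ctx.

    Assumes cost grows with the square of the context length, as in
    standard (non-sparse) self-attention. Other terms are ignored.
    """
    if short_ctx <= 0 or long_ctx <= 0:
        raise ValueError("context lengths must be positive")
    return (long_ctx / short_ctx) ** 2


# Hypothetical context sizes, chosen for illustration only.
ratio = attention_cost_ratio(4_096, 131_072)
print(f"{ratio:.0f}x")  # prints "1024x"
```

Under these assumptions, a context 32 times longer costs roughly 1,000 times more in the attention term. Real workloads also include components that scale linearly, and agents add further cost through repeated model calls, so this is an order-of-magnitude illustration, not an account of how the figure was derived.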

"We've essentially created a compute vacuum," Huang admitted. "The demand is outstripping even our most optimistic projections."

NVIDIA's Hardware Response

The chipmaker isn't sitting idle. Huang revealed details about NVIDIA's upcoming Vera Rubin architecture specifically designed to address these bottlenecks:

- Enhanced onboard memory components

- Optimized ICMS platform integration

- Specialized circuits for long-context workloads

"Our engineers are working around the clock," Huang shared. "The Vera Rubin chips won't just meet current needs—they'll anticipate where agentic AI is heading next."

The announcement sent ripples through the tech community, with analysts revising their forecasts for both software adoption and hardware demand in this new era of compute-hungry AI agents.

Key Points:

- OpenClaw achieved Linux-level adoption in weeks rather than decades

- Huang introduced his "five-layer cake" model of AI infrastructure

- Agentic AI creates massive compute demands due to long-context processing

- NVIDIA is developing the Vera Rubin architecture specifically for these workloads