New York Moves to Ban AI Doctors and Lawyers

New York Takes Aim at AI Medical and Legal Advice

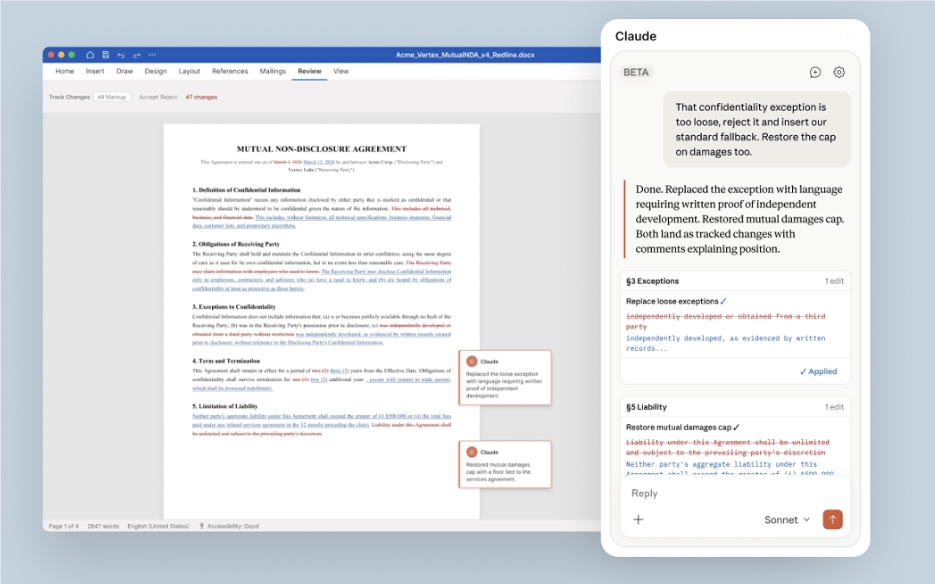

When your chatbot starts diagnosing illnesses or drafting legal contracts, regulators take notice. New York State is advancing legislation that would prohibit AI systems from giving consumers substantive medical or legal advice.

The Proposed Crackdown

The bill, designated S7263, targets what lawmakers call "AI impersonation" of licensed professionals. Sponsored by the Senate Committee on Internet and Technology, it would specifically prohibit:

- Medical diagnosis or treatment recommendations without human oversight

- Legal counsel beyond basic informational responses

- Failing to disclose when users are interacting with artificial intelligence

"People deserve care from actual humans," emphasized Senator Kristin Gonzalez, referencing recent tragic cases involving minors and AI platforms. Earlier this year, Google and Character.AI agreed to settle lawsuits alleging that Character.AI's chatbots contributed to teen suicides.

What's At Stake?

The legislation would introduce strict new requirements:

Mandatory Warnings: Platforms must display "clear and prominent" notices that users are dealing with AI. Fine print would not suffice.

No Liability Shields: Even with warnings, companies would remain responsible for harmful advice their bots provide.

User Recourse: Consumers would gain explicit rights to sue over botched AI guidance.

Industry Implications

The bill signals a turning point for generative AI applications moving into regulated professions. If it passes and is signed into law, companies would have just 90 days to comply, potentially forcing major changes in how chatbots operate.

The debate reflects growing concerns about balancing innovation with public protection. While AI can democratize access to information, lawmakers argue some fields require human judgment and accountability that algorithms can't provide.

Key Points:

- New York's S7263 bill would ban substantive medical/legal advice from AI systems

- Would require unmistakable disclosures when users interact with chatbots

- Would maintain company liability regardless of warnings displayed

- Comes amid heightened scrutiny following tragic cases involving vulnerable users

- Would take effect 90 days after signing if approved