Moonshot's K2.6 AI Model Breaks New Ground in Coding and Agent Tasks

Moonshot's Latest AI Model Raises the Bar for Coding Assistants

In a move that could reshape how developers work with AI, Moonshot AI has launched Kimi K2.6—a model that doesn't just talk about coding but actually rolls up its sleeves for marathon programming sessions.

Breaking Through Performance Barriers

The numbers tell an impressive story: K2.6 can maintain coding tasks for up to 13 hours straight while accurately handling over 4,000 lines of code modifications in a single session. That's like having a tireless programming partner who never needs coffee breaks.

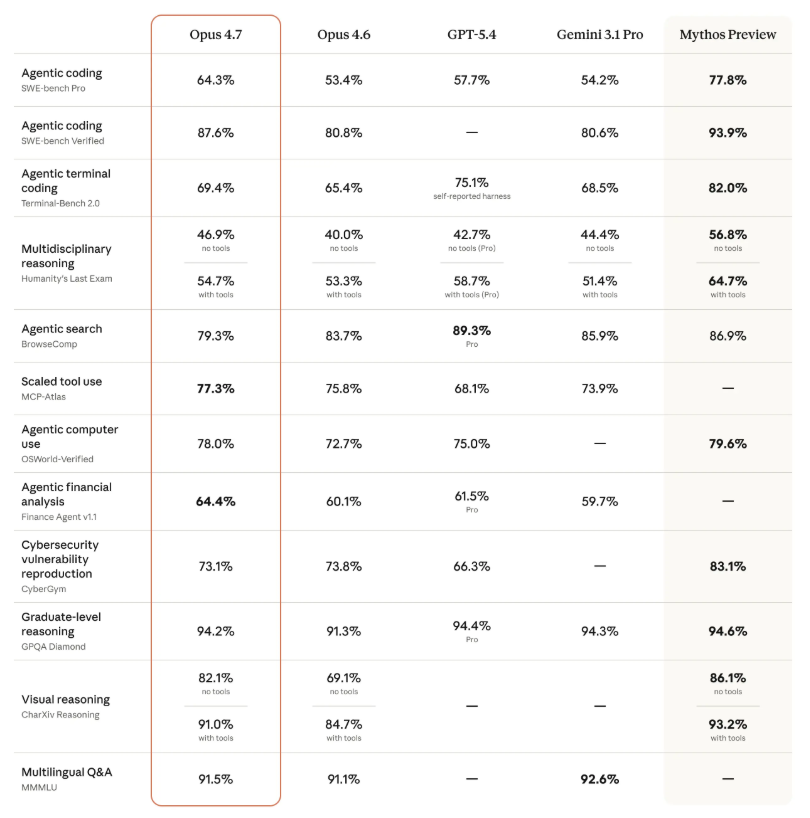

Benchmark results show the model holding its own against premium offerings from OpenAI (GPT-5.4), Anthropic (Claude Opus 4.6), and Google (Gemini 3.1 Pro). In some specialized tests like SWE-Bench Pro (measuring real-world software engineering skills) and DeepSearchQA (evaluating agent search depth), it even pulls ahead.

More Than Just Code Generation

What sets K2.6 apart isn't just raw coding power—it's how the model coordinates with other AI agents to tackle complex workflows. Imagine a team of specialized digital assistants working seamlessly together on different aspects of a project.

"We're seeing the shift from AI that converses to AI that executes," explains Moonshot's chief technology officer during the launch event. "K2.6 represents our vision for practical, production-ready artificial intelligence."

Ready for Real-World Use

The model is already available through:

- Web interface

- Updated mobile applications

- Developer APIs

The company has also upgraded its Kimi Code programming assistant with the new technology stack.

As large language models evolve beyond simple question-answering into true productivity tools, Moonshot's latest release suggests Chinese AI developers aren't just keeping pace—they're helping set the tempo.

Key Points:

- Marathon coder: Handles 13-hour programming sessions without performance degradation

- Bulk editing: Processes >4,000 lines of code modifications at once

- Benchmark beater: Competes with or exceeds top closed-source models in key tests

- Agent teamwork: Excels at coordinating multiple specialized AI assistants

- Available now: Accessible via web, mobile apps, and developer APIs