LTX-2 Opens New Era for AI Video Creation

AI Video Generation Just Got a Major Upgrade

The digital creation landscape shifted dramatically this week with Lightricks' release of LTX-2, billed as the first complete open-source audio-visual foundation model. This isn't just another incremental improvement; it's a game-changer that puts Hollywood-quality video generation within reach of everyday creators.

The Open-Source Revolution

Imagine having access to:

- Full model weights

- Complete training code

- Benchmark tests

- Ready-to-use toolkits

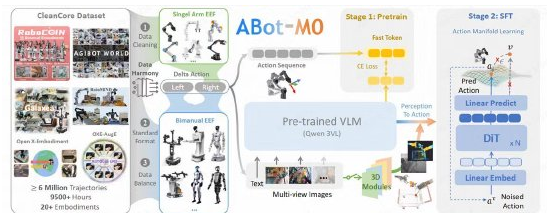

All of it is hosted on GitHub and Hugging Face for anyone to explore. A hybrid DiT (Diffusion Transformer) architecture powers features such as text-to-video generation, multi-keyframe control, and even 3D camera logic. What really excites developers? ComfyUI offered native support from day one, slashing the learning curve.

Seeing and Hearing Perfection Simultaneously

Traditional pipelines force creators to generate audio and video separately and then stitch them together, a tedious process that often results in awkward mismatches. LTX-2 breaks this mold by generating synchronized visuals and sound in a single pass. The results? Natural lip movements, precisely timed sound effects, and seamless music integration at native 4K resolution.

Early testers report remarkably lifelike dialogue scenes where every eyebrow raise matches the voice inflection. Skin textures show pores rather than plastic-looking surfaces, while motion flows smoothly at up to 50fps.
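Generating sound and picture in one pass means every video frame has a fixed slice of audio it has to line up with. The short Python sketch below illustrates that timing bookkeeping for a clip at 50fps, the top frame rate mentioned above. The 48 kHz sample rate and the helper names (`samples_per_frame`, `audio_span_for_frame`) are assumptions for illustration only; they are not taken from LTX-2's code.

```python
# Illustrative only: 50fps comes from the article; 48 kHz is an ASSUMED audio rate.
FPS = 50
SAMPLE_RATE = 48_000


def samples_per_frame(fps: int, sample_rate: int) -> float:
    """How many audio samples play during one video frame."""
    return sample_rate / fps


def audio_span_for_frame(
    frame_index: int, fps: int = FPS, sample_rate: int = SAMPLE_RATE
) -> tuple[int, int]:
    """Return the [start, end) audio sample range that plays during a video frame.

    Integer division keeps the spans contiguous and gap-free even when
    sample_rate / fps is not a whole number (e.g. 44.1 kHz at 24fps).
    """
    start = frame_index * sample_rate // fps
    end = (frame_index + 1) * sample_rate // fps
    return start, end


if __name__ == "__main__":
    print(samples_per_frame(FPS, SAMPLE_RATE))  # 960.0
    print(audio_span_for_frame(0))              # (0, 960)
    print(audio_span_for_frame(25))             # (24000, 24960), i.e. the 0.5-second mark
```

Under these assumptions each frame owns 960 audio samples, so a lip movement drawn in frame 25 has to match exactly the sound in samples 24,000 to 24,959. Stitching separately generated tracks makes it easy to drift off that grid, which is the mismatch single-pass generation is meant to avoid.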

Performance That Surprises

The engineering team squeezed impressive efficiency from LTX-2:

- 50% lower computational costs than previous versions

- Multi-GPU support for longer sequences

- Quantized versions that run on RTX 40-series cards

The kicker? Generating a 20-second clip takes just minutes, fast enough for quick previews during creative sessions.
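To put "just minutes" in perspective, here is a rough tally of what a 20-second clip involves. Treat it as an illustrative upper bound: it assumes a 4K UHD frame of 3840×2160 and the maximum 50fps cited earlier, although the article does not say that 4K, 50fps, and 20-second length all apply to the same generation. The `clip_budget` helper is hypothetical.

```python
# Illustrative upper bound only. The 20-second length, "up to 50fps", and native 4K
# figures come from the article; combining them, and the 3840x2160 frame size, are ASSUMPTIONS.
UHD_4K = (3840, 2160)


def clip_budget(seconds: float, fps: int, width: int, height: int) -> dict[str, int]:
    """Count the frames and pixels a generated clip contains."""
    frames = round(seconds * fps)
    pixels_per_frame = width * height
    return {
        "frames": frames,
        "pixels_per_frame": pixels_per_frame,
        "total_pixels": frames * pixels_per_frame,
    }


if __name__ == "__main__":
    budget = clip_budget(20, 50, *UHD_4K)
    for key, value in budget.items():
        print(f"{key}: {value:,}")
    # frames: 1,000
    # pixels_per_frame: 8,294,400
    # total_pixels: 8,294,400,000
```

At those settings the clip has 1,000 frames and roughly 8.3 billion pixels, all of which must stay consistent with one another and with the soundtrack.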

Creative Possibilities Unleashed

From indie filmmakers crafting storyboards to marketers producing quick-turnaround ads, LTX-2 opens doors previously reserved for big studios. Its video-to-video controls (Canny, Depth, Pose) combine with keyframe precision to maintain consistent styles across scenes.

The community anticipates an explosion of plugins and LoRA extensions that could transform LTX-2 into the backbone of open-source video generation.

Key Points:

- Complete package: Weights, training code, and benchmarks are all open-sourced

- Seamless sync: Generating audio and video together eliminates post-production headaches

- Accessible power: Quantized versions run on consumer RTX 40-series GPUs, no enterprise hardware required

- Creative control: Multiple input methods (text/images/sketches) suit various workflows

- Future-ready: Architecture designed for community extensions and improvements