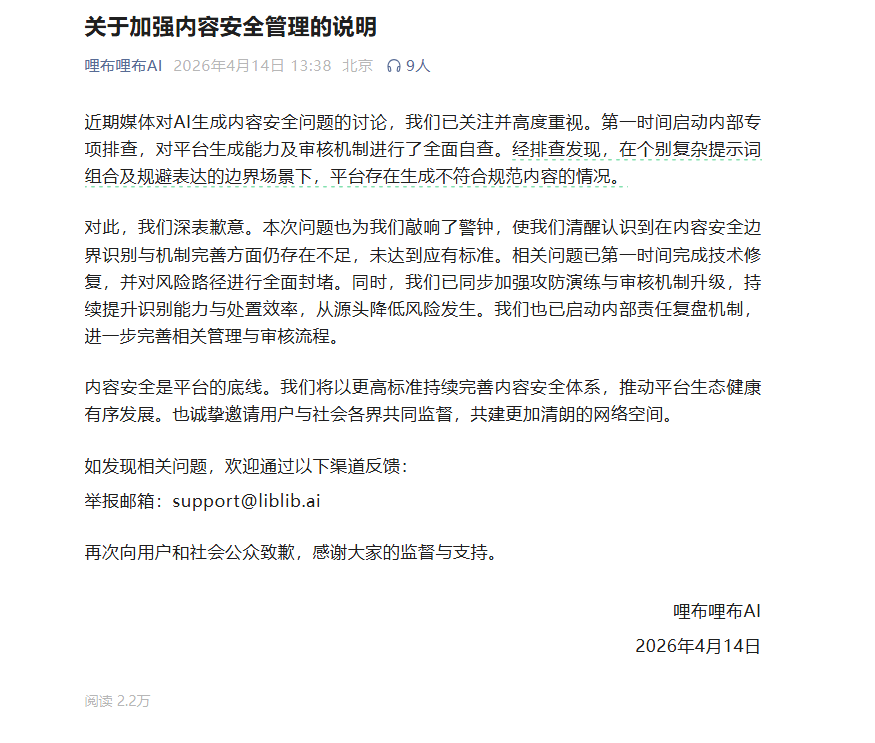

LibuLibu AI addresses content safety concerns with platform upgrades

LibuLibu AI Takes Action on Content Safety

Following growing scrutiny around AI-generated content, LibuLibu AI has stepped forward with concrete measures to address safety concerns. The company's recent statement comes after users and media outlets raised questions about some problematic outputs from its platform.

The acknowledgment didn't come lightly. LibuLibu openly admitted that its system occasionally failed when users combined prompts in clever ways to bypass content restrictions. "We recognize our filters weren't perfect," the statement read, "especially in edge cases where users intentionally tested boundaries."

Technical Fixes Implemented

The AI company has been busy behind the scenes. Engineers have:

- Patched all identified technical vulnerabilities

- Closed loopholes that allowed questionable content through

- Strengthened penetration testing to catch more edge cases

But technical solutions alone weren't enough. The company has also completely overhauled its review process, implementing what it calls a "multi-layered defense" against problematic content.

A Broader Industry Challenge

This incident sheds light on a larger issue facing AI companies. As LibuLibu's statement put it: "Content safety isn't just our challenge—it's the industry's growing pain." The company has established an internal review team to examine how these slips occurred and prevent future occurrences.

The company has also opened its doors to public oversight, inviting users to report concerns directly to its support team. This move toward transparency suggests the company understands that trust must be earned in this sensitive field.

What This Means for AI Development

The LibuLibu situation underscores how quickly the AI landscape is evolving. Just a year ago, most platforms focused primarily on functionality. Now, content moderation and ethical considerations are taking center stage.

Industry observers note this reflects a broader trend toward responsible AI development. As one analyst commented, "The wild west days of AI are ending. Platforms now face real pressure to get content safety right."

Key Points:

- LibuLibu AI has fixed technical issues that allowed questionable content through

- The platform upgraded its review system with stronger safeguards

- Company acknowledges ongoing challenges in content moderation

- Incident highlights industry-wide shift toward stricter content policies

- Public reporting system established for additional oversight