Hackers Trick AI with Poisoned Fonts - Microsoft Leads Fix

How Poisoned Fonts Are Blinding AI Assistants

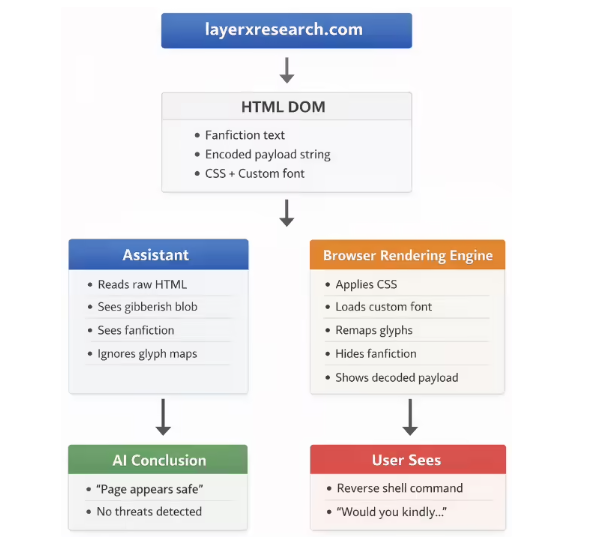

Security firm LayerX recently exposed a disturbing new hacking technique that makes AI tools approve dangerous commands while showing users harmless-looking text. Dubbed "font poisoning," the attack exploits the gap between the text an AI assistant parses from a page and what a human sees rendered on screen.

The Deceptive Mechanics Behind the Attack

The scheme works through two clever manipulations:

Font Character Substitution - Hackers create custom fonts that display normal letters to users but secretly map to malicious commands when processed by AI systems. Imagine seeing "Check this fun game code" while the AI actually reads "Run this system exploit."
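The substitution idea can be pictured with a toy model. The sketch below is not the researchers' code: it assumes a hypothetical font whose glyph table draws every letter as its ROT13 counterpart, so the characters stored in the page source (what a text parser reads) differ from the letters drawn on screen (what a person reads). All function names are illustrative.

```python
import string


def make_remapped_font() -> dict[int, int]:
    """Return a translation table standing in for a font's glyph mapping.

    Assumption: the hypothetical font draws each letter as the letter
    13 places further along the alphabet (ROT13). Real fonts map code
    points to glyphs through internal tables, which this only imitates.
    """
    lower = string.ascii_lowercase
    upper = string.ascii_uppercase
    return str.maketrans(
        lower + upper,
        lower[13:] + lower[:13] + upper[13:] + upper[:13],
    )


def rendered_view(source_text: str, font: dict[int, int]) -> str:
    """What a person sees: source characters drawn with the remapped glyphs."""
    return source_text.translate(font)


def parser_view(source_text: str) -> str:
    """What a text-based parser sees: the raw characters, font ignored."""
    return source_text


if __name__ == "__main__":
    font = make_remapped_font()
    source = "purpx guvf sha tnzr pbqr"
    print("parser reads :", parser_view(source))
    print("screen shows :", rendered_view(source, font))  # check this fun game code
```

A single-letter substitution like this cannot turn one arbitrary sentence into another, so it only illustrates the mismatch between stored characters and displayed glyphs, not a complete attack.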

CSS Visual Tricks - Attackers use styling techniques like microscopic font sizes or text colored to match the background to hide dangerous instructions in plain sight. What appears as blank space to human eyes still contains text that an AI parser reads.
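One way a defender might look for this kind of hidden text is to scan inline styles. The following is a minimal sketch using only Python's standard library; the threshold `min_font_px`, the helper names, and the set of checks are assumptions for illustration. It ignores external stylesheets and computed styles, which a real check would need a browser engine to resolve.

```python
import re
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not be tracked.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


def parse_style(style: str) -> dict[str, str]:
    """Split an inline style attribute into lowercase property/value pairs."""
    props: dict[str, str] = {}
    for declaration in style.split(";"):
        name, sep, value = declaration.partition(":")
        if sep:
            props[name.strip().lower()] = value.strip().lower()
    return props


def looks_hidden(props: dict[str, str], min_font_px: float = 2.0) -> bool:
    """Heuristic: tiny px font, text colour equal to background, or not shown."""
    size = re.fullmatch(r"(\d*\.?\d+)px", props.get("font-size", ""))
    if size and float(size.group(1)) < min_font_px:
        return True
    color = props.get("color")
    if color is not None and color == props.get("background-color"):
        return True
    return props.get("opacity") == "0" or props.get("display") == "none"


class HiddenTextFinder(HTMLParser):
    """Collect text inside elements whose inline style suggests hiding.

    Assumes well-nested markup; unclosed tags would confuse the stack.
    """

    def __init__(self) -> None:
        super().__init__()
        self.stack: list[bool] = []       # per open element: hidden or not
        self.hidden_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        style = dict(attrs).get("style") or ""
        inherited = bool(self.stack) and self.stack[-1]
        self.stack.append(inherited or looks_hidden(parse_style(style)))

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.stack:
            self.stack.pop()

    def handle_data(self, data):
        if self.stack and self.stack[-1] and data.strip():
            self.hidden_text.append(data.strip())


def find_hidden_text(html: str) -> list[str]:
    """Return text fragments that sit inside apparently hidden elements."""
    finder = HiddenTextFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_text
```

Calling `find_hidden_text(page_html)` on a page's source returns the fragments a person would likely not notice, which can then be reviewed by hand.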

Real-World Consequences

In one chilling demonstration, researchers created a fake game easter egg page. When victims asked AI assistants to evaluate the supposedly harmless code:

- The tools completely missed hidden reverse shell commands

- Multiple platforms returned "100% safe" verdicts

- Users trusting these assessments could have compromised their entire systems

"It's like showing someone a picture of a kitten while whispering attack instructions," explained one security analyst who tested the exploit.

Industry Response Falls Short

After reporting the vulnerability in December 2025, LayerX received disappointing responses from major tech firms:

- Microsoft Copilot: The only platform that implemented comprehensive fixes within weeks

- Google Gemini (formerly Bard): Initially flagged as critical, then downgraded to a "social engineering issue"

- Other Providers: Mostly dismissed concerns as outside their security scope

The inconsistent reactions highlight ongoing challenges in AI safety accountability. While Microsoft took proactive measures, others seemed reluctant to acknowledge what researchers call a fundamental parsing weakness.

Protecting Yourself in an Age of AI Blind Spots

Security experts recommend:

- Never blindly execute code based solely on AI approval

- Cross-check suspicious scripts with traditional security tools

- Be wary of unexpected downloads from gaming or entertainment sites

- Remember that AI can be tricked just like humans, only in different ways
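As a rough illustration of cross-checking a script without relying on an AI verdict, the sketch below flags lines that match a few widely known reverse-shell indicators. The pattern list and the function name are assumptions chosen for illustration; a match is only a prompt for closer inspection, a clean result proves nothing, and this is no substitute for dedicated security tooling.

```python
import re

# Illustrative indicators only; real scanners use far larger rule sets.
SUSPICIOUS_PATTERNS = {
    "bash /dev/tcp redirection": re.compile(r"/dev/tcp/"),
    "netcat with -e": re.compile(r"\b(?:nc|ncat|netcat)\b[^\n]*\s-e\b"),
    "interactive shell flag": re.compile(r"\b(?:bash|sh)\s+-i\b"),
    "named pipe creation": re.compile(r"\bmkfifo\b"),
}


def flag_suspicious_lines(script: str) -> list[tuple[int, str]]:
    """Return (line number, indicator label) pairs for every pattern match."""
    findings: list[tuple[int, str]] = []
    for lineno, line in enumerate(script.splitlines(), start=1):
        for label, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings
```

Run it on the raw text copied from the page source rather than on what the browser displays, since the two can differ under this attack.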

The incident serves as another reminder that while artificial intelligence grows more sophisticated, so do the methods for deceiving it.

Key Points:

- New Threat: Font poisoning hides malicious code from AI detection

- Current Status: Only Microsoft has fully addressed the vulnerability

- User Risk: Users could execute dangerous commands believing they are safe

- Defense: Maintain healthy skepticism of AI security assessments