Google's Gemma 4: Small AI Models Pack a Big Punch


In a move that could democratize advanced AI, Google has fully open-sourced its Gemma 4 series of artificial intelligence models. What stands out is not just their availability but their capability: several of these relatively small models outperform much larger competitors.

Small Size, Big Results

The star of the show is a model with just 380 million parameters that manages to outshine competitors twenty times its size on industry benchmarks. This breakthrough means powerful AI could soon run smoothly on your smartphone or lightweight laptop without needing cloud connections.

"We're seeing a paradigm shift where size doesn't necessarily determine capability," explains an industry analyst familiar with the technology. "These efficient models open doors for AI applications we couldn't consider before due to hardware limitations."

The Gemma 4 lineup includes several variants:

- gemma-4-E2B: 2.3 billion effective parameters

- gemma-4-E4B: 4.5 billion effective parameters

- A mixture-of-experts model with 26 billion parameters

- A dense model packing 31 billion parameters
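To put these sizes in perspective, a common rule of thumb is that weight storage is roughly the parameter count times the bytes used per parameter. The Python sketch below applies that rule to the lineup above. The precision table, the model labels, and the function name are illustrative assumptions, and the estimate covers weights only (no KV cache, activations, or runtime overhead). Because E2B and E4B are listed by effective parameters, their actual storage needs may be higher than shown.

```python
# Rough weight-only memory estimate: parameters x bytes per parameter.
# Assumptions (illustrative, not official figures):
#   - parameter counts are taken from the lineup in the article;
#   - "effective" counts for E2B/E4B may understate total stored parameters;
#   - KV cache, activations, and runtime overhead are ignored.

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

MODELS = {
    "gemma-4-E2B (effective)": 2.3e9,
    "gemma-4-E4B (effective)": 4.5e9,
    "26B mixture-of-experts (total)": 26e9,
    "31B dense": 31e9,
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, params in MODELS.items():
        row = ", ".join(
            f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{name:32s} {row}")
```

By this estimate the 31B dense model needs about 62 GB of weights in bf16, while E2B quantized to 4 bits needs only a little over 1 GB, which is consistent with the smaller variants being the ones aimed at phones. A mixture-of-experts model still has to store all 26 billion parameters, even though it activates only a subset of them for each token.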

Technical Innovations Driving Performance

Google's engineers achieved these results through several key innovations:

- Layer-wise embeddings let the smaller models stay fast while holding more knowledge than their parameter count would suggest.

- A hybrid attention architecture combines local sliding-window attention with global attention, reducing memory usage when processing long text.

- Special optimization for mobile and IoT devices makes the E2B and E4B models particularly suitable for smartphone applications.
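The hybrid attention idea can be illustrated with a small Python sketch. A local (sliding-window) layer lets each token attend only to the most recent `window` tokens, while a global layer attends to the entire preceding text, so a local layer caches at most `window` key/value entries instead of one per token. The window size, the one-global-layer-in-six interleaving, and all function names below are hypothetical values chosen for illustration, not Gemma 4's published configuration.

```python
from typing import List


def causal_mask(seq_len: int) -> List[List[bool]]:
    """Global (full causal) attention: token i may attend to every token j <= i."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """Local attention: token i may attend only to tokens with i - window < j <= i."""
    return [[i - window < j <= i for j in range(seq_len)] for i in range(seq_len)]


def layer_types(num_layers: int, global_every: int) -> List[str]:
    """Hypothetical interleaving: every `global_every`-th layer is global, the rest local."""
    return ["global" if (k + 1) % global_every == 0 else "local" for k in range(num_layers)]


def kv_cache_entries(seq_len: int, window: int, types: List[str]) -> int:
    """Cached key/value positions summed over all layers for one sequence."""
    return sum(seq_len if t == "global" else min(seq_len, window) for t in types)


if __name__ == "__main__":
    # Visualize a local mask: "x" = allowed to attend, "." = masked out.
    for row in sliding_window_mask(6, 3):
        print("".join("x" if allowed else "." for allowed in row))

    seq_len, window = 32_768, 1_024  # hypothetical long-context setting
    hybrid = layer_types(12, global_every=6)  # 10 local + 2 global layers
    all_global = ["global"] * 12
    h = kv_cache_entries(seq_len, window, hybrid)
    g = kv_cache_entries(seq_len, window, all_global)
    print(f"hybrid: {h} entries, all-global: {g} entries ({h / g:.0%} of full)")
```

With these assumed settings, the hybrid stack caches 75,776 key/value positions versus 393,216 for an all-global stack, roughly a fifth of the memory, while the periodic global layers still let information flow across the whole input.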

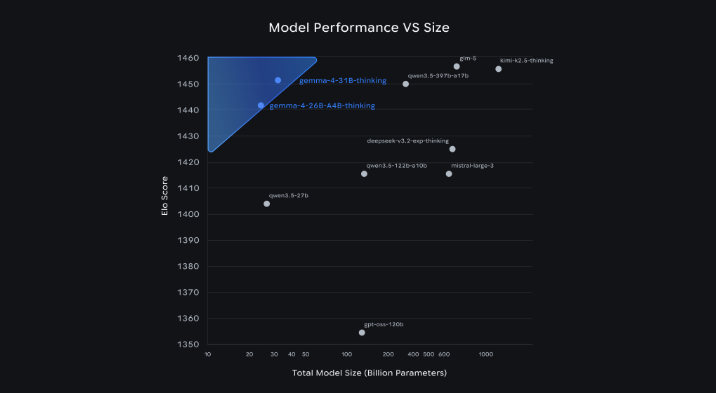

Benchmark Dominance

The numbers speak for themselves:

- The 31B-parameter model ranks third globally among open-source models on the Arena AI text leaderboard

- The 26B-parameter mixture-of-experts version holds sixth place

- All models show strong performance in text generation, math reasoning, and coding tasks

Open Access for Developers

Perhaps most exciting for the developer community is Google's decision to release Gemma 4 under the Apache 2.0 license. The permissive license allows the models to be deployed locally or in the cloud, and support from mainstream platforms makes it quick to build applications on top of them.

The implications are significant - from smarter smartphone assistants to more responsive IoT devices, these efficient models could bring advanced AI capabilities to everyday technology without requiring expensive hardware upgrades.

Key Points:

- 🚀 Compact powerhouses: Small models outperform much larger competitors

- 📱 Mobile-ready: Optimized versions work efficiently on smartphones and IoT devices

- 🔓 Open access: Apache 2.0 license encourages widespread developer adoption

- 🏆 Proven performance: Strong benchmark results across multiple AI tasks