Google DeepMind's New Training Tech Keeps AI Learning Despite Glitches

Google DeepMind's Breakthrough in Fault-Tolerant AI Training

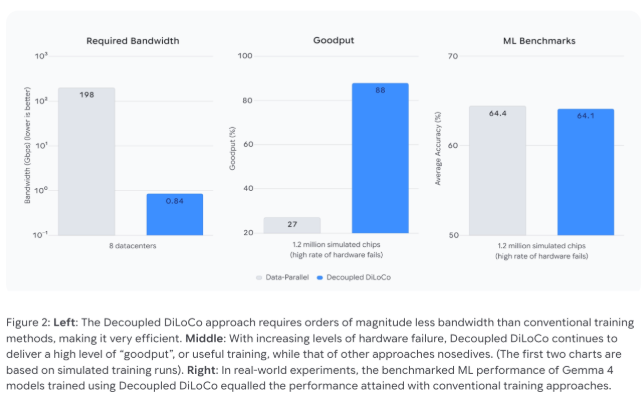

In the high-stakes world of artificial intelligence development, Google DeepMind has tackled one of the most frustrating problems head-on: what happens when your expensive hardware decides to take an unscheduled break? Their answer - a clever new architecture called Decoupled DiLoCo - could change how we train massive AI models.

The Problem With Perfect Synchronization

Traditional AI training methods operate like a perfectly choreographed ballet - every computing unit must move in perfect sync during gradient updates. It's impressive when it works, but as anyone who's dealt with technology knows, perfection rarely lasts. A single hiccup in one component can bring the entire performance to a grinding halt.

Islands of Independence

Decoupled DiLoCo takes a radically different approach by creating what engineers call "computing islands." Picture these as self-contained teams working on different parts of the same project. Each island operates independently, making multiple local calculations before sending compressed updates to a central coordinator.

The magic happens in the asynchronicity. If one island experiences technical difficulties (maybe its TPU got too hot or the network connection dropped), the others simply keep working. No waiting around for stragglers, no system-wide timeouts - just continuous progress.
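To make the idea concrete, here is a toy Python sketch of islands that train locally and push updates to a coordinator without ever waiting on each other. It is not DeepMind's implementation: the `Island` class, the `simulate` function, the `outer_lr` knob, the one-parameter "model" and the random failure model are all assumptions made for illustration, and the compression step is left out entirely.

```python
import random
from dataclasses import dataclass


@dataclass
class Island:
    target: float        # stand-in for this island's share of the data (hypothetical)
    w: float = 0.0       # local copy of the single model parameter
    start: float = 0.0   # global value this island last pulled
    done: int = 0        # local steps taken since that pull


def simulate(num_islands=4, ticks=600, local_steps=5, fail_prob=0.3,
             lr=0.1, outer_lr=0.25, seed=0):
    """Toy asynchronous training loop; returns the coordinator's parameter."""
    rng = random.Random(seed)
    global_w = 0.0  # parameter held by the central coordinator
    islands = [Island(target=1.0 + 0.1 * i) for i in range(num_islands)]

    for _ in range(ticks):
        for isl in islands:
            if rng.random() < fail_prob:
                continue  # this island is down for this tick; the others carry on
            # One local gradient step on the toy loss (w - target)^2.
            isl.w -= lr * 2.0 * (isl.w - isl.target)
            isl.done += 1
            if isl.done == local_steps:
                # Push only the change since the last pull. The coordinator
                # applies it immediately; there is no barrier across islands.
                global_w += outer_lr * (isl.w - isl.start)
                # Pull the latest global value and start a new local phase.
                isl.w = isl.start = global_w
                isl.done = 0
    return global_w


if __name__ == "__main__":
    print(simulate())               # islands fail 30% of the time
    print(simulate(fail_prob=1.0))  # every island is always down
```

With the default settings the coordinator's value should settle in the neighborhood of the islands' targets (1.0 to 1.3 here) even though each island misses roughly a third of its ticks. When every island is down the value simply stays where it was, and nothing blocks.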

By the Numbers: Why This Matters

The results speak for themselves:

- 88% utilization maintained even with frequent hardware failures (versus just 27% with traditional methods)

- Bandwidth required between data centers cut from 198 Gbps to less than 1 Gbps

- Older and newer hardware can work together seamlessly

The bandwidth reduction alone is game-changing. Suddenly, global collaboration on AI training becomes practical using existing internet infrastructure rather than requiring specialized high-speed connections.
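A rough back-of-the-envelope model shows why syncing less often and compressing updates can shrink bandwidth this much. The function below is a simplified sketch, not a description of Google's setup: `avg_bandwidth_gbps` is a hypothetical helper, and the payload size, step time, sync interval and compression ratio are made-up values chosen only so that the baseline matches the 198 Gbps figure above.

```python
def avg_bandwidth_gbps(payload_gigabits, step_seconds,
                       steps_between_syncs=1, compression=1.0):
    """Average link bandwidth needed to ship one update per sync interval."""
    sync_interval = step_seconds * steps_between_syncs
    return payload_gigabits / compression / sync_interval


# Hypothetical baseline: 198 gigabits exchanged after every 1-second step.
every_step = avg_bandwidth_gbps(198.0, 1.0)

# Hypothetical island-style setup: sync once per 100 local steps and
# compress each update 4x (both numbers are illustrative assumptions).
infrequent = avg_bandwidth_gbps(198.0, 1.0, steps_between_syncs=100,
                                compression=4.0)

if __name__ == "__main__":
    print(every_step, infrequent, every_step / infrequent)
```

Under this model, bandwidth falls in proportion to the number of local steps between syncs multiplied by the compression ratio, so fairly modest values of each are enough to take a link from hundreds of Gbps to under 1 Gbps.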

Built-In Resilience That Would Make Cockroaches Jealous

During stress testing (what engineers charmingly call "chaos engineering"), Decoupled DiLoCo demonstrated an almost uncanny ability to keep going. Even when every learning unit went down at the same time, the system picked up right where it left off once they came back online.

This resilience extends to hardware diversity too. Different generations of TPU chips can participate in the same training process, giving older equipment new purpose and smoothing transitions during upgrades.

Key Points:

- 🔄 Asynchronous Advantage: Independent computing islands keep a single failure from derailing an entire training run

- 🌍 Bandwidth Breakthrough: Dramatically reduced network requirements make global distributed training feasible

- ⚡ Hardware Harmony: Mixed generations of processing units can collaborate effectively

- 🧠 Self-Healing Smarts: System automatically recovers from failures without losing progress