DeepMind's Gemini 3 Pro Gets Smarter: New System Instructions Boost AI Reliability

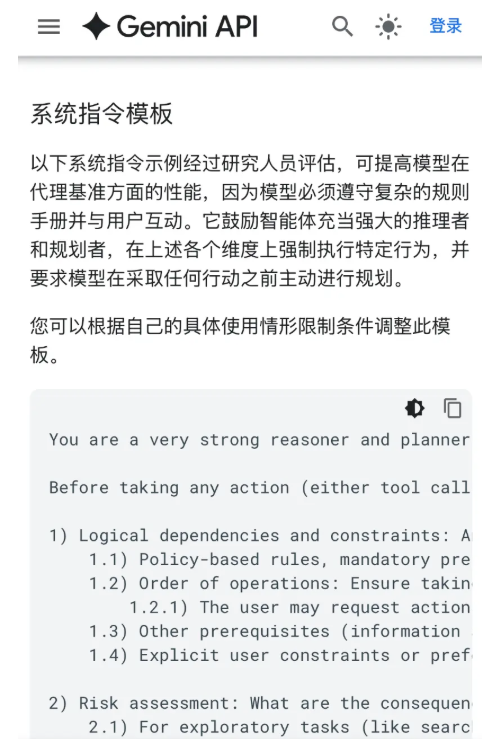

Google's AI research division DeepMind has introduced a powerful set of system instructions for its Gemini 3 Pro model, delivering measurable improvements in how AI handles complex tasks. These aren't your typical programming commands - they're more like giving the AI a better playbook for decision-making.

How It Works

The new instructions require the model to work through nine reasoning steps before taking any action. Among the behaviors they enforce:

- Careful Planning: The AI must analyze logical dependencies, potential risks, and alternative hypotheses before responding

- Smart Retries: It now knows when to persist (like with temporary network errors) and when to change strategies

- Better Prioritization: Clear rules help navigate conflicting requirements without getting stuck

- Thorough Verification: The model double-checks its reasoning against all available information sources
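The "Smart Retries" idea has a familiar analogue in ordinary code. The sketch below is only an illustration of that pattern, not DeepMind's instructions or anything the model runs internally: the `TransientError` and `PermanentError` classes and the `run_with_retries` helper are hypothetical names invented for this example. Temporary failures are retried with exponential backoff, while a permanent failure triggers a switch to the next strategy.

```python
import time


class TransientError(Exception):
    """Hypothetical: a temporary failure, such as a network timeout."""


class PermanentError(Exception):
    """Hypothetical: a failure that retrying will not fix."""


def run_with_retries(strategies, max_attempts=3, base_delay=0.1, sleep=time.sleep):
    """Try each strategy in order and return the first successful result.

    Transient errors are retried with exponential backoff. A permanent
    error, or running out of attempts, moves on to the next strategy.
    """
    for strategy in strategies:
        for attempt in range(max_attempts):
            try:
                return strategy()
            except TransientError:
                # Persist: wait a little longer before each new attempt.
                if attempt < max_attempts - 1:
                    sleep(base_delay * 2 ** attempt)
            except PermanentError:
                # Retrying will not help, so change strategy.
                break
    raise RuntimeError("all strategies failed")
```

A caller would pass a list of zero-argument functions, ordered from the preferred approach to the fallbacks.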

"This isn't just about making fewer mistakes," explains a DeepMind spokesperson. "It's about building AI that understands why it succeeded or failed, which leads to more reliable performance over time."

Real-World Improvements

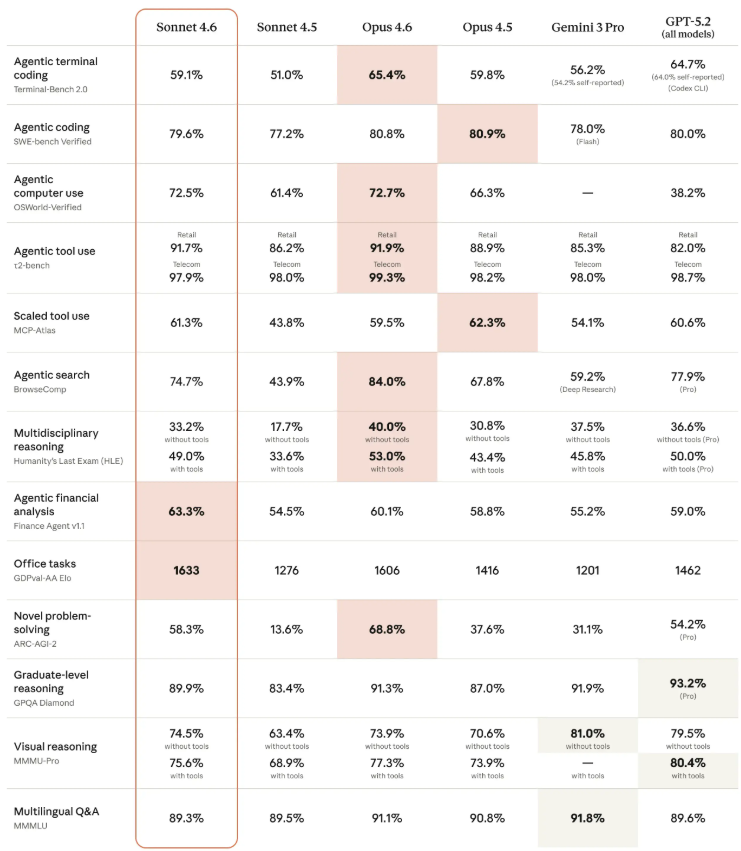

The results speak for themselves:

- Web browsing tasks saw success rates jump from 73.2% to 78.1%

- Multi-tool workflows completed correctly on the first try 6.7% more often

- Complex mobile tasks (like ordering food while generating invoices) succeeded 4.8% more frequently

The most dramatic improvement came in reducing errors during web interactions - mistaken clicks dropped by an impressive 35%.
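A percentage gain can be read as an absolute change (percentage points) or as a relative change, and the article does not say which reading applies to the multi-tool and mobile figures. As a quick worked example using only the web browsing numbers reported above (73.2% to 78.1%), the hypothetical helper `gains` computes both:

```python
def gains(before: float, after: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    absolute = after - before
    relative = (after - before) / before * 100
    return absolute, relative


if __name__ == "__main__":
    absolute, relative = gains(73.2, 78.1)
    print(f"{absolute:.1f} percentage points")      # 4.9 percentage points
    print(f"{relative:.1f}% relative improvement")  # 6.7% relative improvement
```

The same jump is a 4.9-point gain or a 6.7% relative improvement, depending on which convention is used.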

What This Means for Developers

The appeal of this approach lies in its simplicity for implementers. Developers don't need to retrain models or overhaul their systems - they can copy and paste the instructions directly into their existing prompts.
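As a rough illustration of that copy-paste workflow, the sketch below prepends a block of reasoning instructions to an existing system prompt. Everything named here is an assumption made for the example: the text in `REASONING_INSTRUCTIONS` is placeholder wording paraphrased from this article, not DeepMind's actual instructions, and `build_system_prompt` is a hypothetical helper rather than part of any official SDK.

```python
# Placeholder wording for illustration only; NOT the actual DeepMind text.
REASONING_INSTRUCTIONS = """\
Before taking any action:
1. Identify logical dependencies, risks, and alternative hypotheses.
2. Retry on temporary errors; change strategy on persistent ones.
3. Resolve conflicting requirements using the stated priorities.
4. Verify your reasoning against all available information.
"""


def build_system_prompt(existing_prompt: str,
                        instructions: str = REASONING_INSTRUCTIONS) -> str:
    """Prepend the instruction block to an existing system prompt."""
    existing = existing_prompt.strip()
    if not existing:
        return instructions.strip()
    # A blank line keeps the two parts visually separate.
    return f"{instructions.strip()}\n\n{existing}"


if __name__ == "__main__":
    print(build_system_prompt("You are a helpful booking assistant."))
```

The combined string would then be passed as the system prompt to whichever model client the application already uses.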

DeepMind is currently working on packaging the instructions into a configurable JSON format, with plans to integrate them into popular platforms like Vertex AI by early 2026.

For businesses relying on AI assistants, this could mean fewer frustrating dead-ends and more successful task completions - without any additional technical overhead.

Key Points:

- Roughly 5% boost in task success rates across benchmarks

- 35% reduction in web interaction errors

- No training required - works as prompt engineering

- Integration into platforms such as Vertex AI planned for early 2026