Claude Shatters AI Memory Limits with Million-Token Breakthrough

Claude's Memory Leap Transforms AI Development

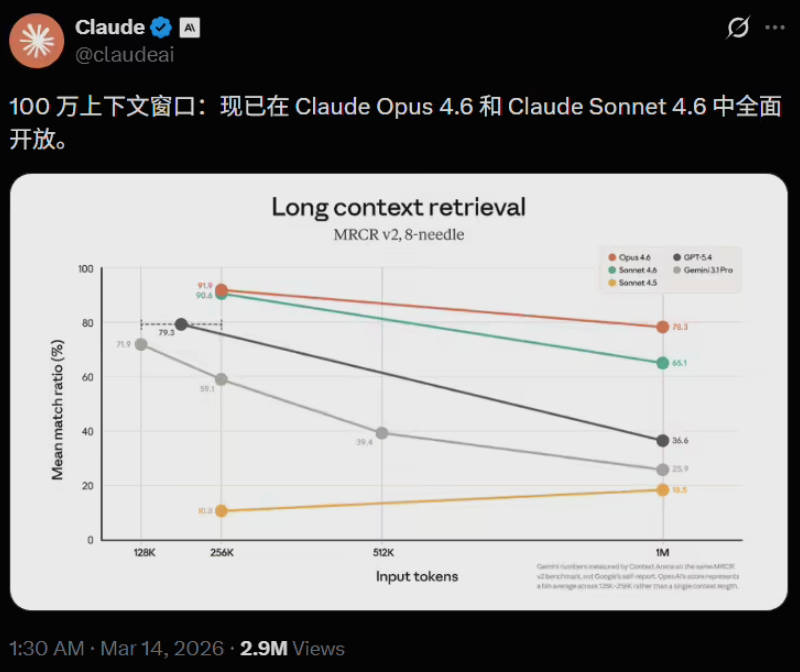

In a move that reshapes the landscape of artificial intelligence, Anthropic has unveiled a 1 million-token context window for Claude. The expansion sharply raises how much information the model can process in a single request, easing limits that previously forced users to split large inputs into pieces.

Understanding the Scale

At the common estimate of about 0.75 words per token, one million tokens translates to roughly 750,000 English words, enough to digest:

- Most of the complete Harry Potter series in a single pass

- Multiple large codebases in their entirety

- Extensive legal contracts or technical documentation sets
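Estimates of how many words fit in a context window rest on a rule of thumb: English text averages roughly 0.75 words per token, though the real ratio depends on the tokenizer and the text itself. The sketch below shows that arithmetic. The ratio and the helper names (`tokens_to_words`, `fraction_of_window`) are illustrative assumptions, not official Anthropic figures or APIs.

```python
# Rule-of-thumb ratio (assumption); actual values vary by tokenizer and text.
WORDS_PER_TOKEN = 0.75
CONTEXT_WINDOW = 1_000_000  # tokens


def tokens_to_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a given number of tokens."""
    return round(tokens * words_per_token)


def fraction_of_window(word_count: int, window_tokens: int = CONTEXT_WINDOW,
                       words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Estimate what share of the context window a text of word_count words uses."""
    needed_tokens = word_count / words_per_token
    return needed_tokens / window_tokens


if __name__ == "__main__":
    print(tokens_to_words(1_000_000))    # 750000
    print(fraction_of_window(375_000))   # 0.5
```

Because the ratio is only an average, a tokenizer-based count is the safer check before sending a borderline input.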

"This isn't just about bigger numbers," explains an Anthropic engineer. "It's about enabling AI to maintain coherent understanding across projects that previously required painful fragmentation."

Developer Revolution

The implications for software development are profound:

- Whole-project analysis: Developers can now submit entire repositories without manual splitting

- Context preservation: No more losing track of connections between distant code segments

- Documentation digestion: Technical manuals and API references can be loaded in full and queried directly
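Before submitting a whole repository, a developer might first check whether it plausibly fits. The following is a minimal sketch under a stated assumption: roughly 4 characters per token, a common approximation that real tokenizers will not match exactly. The function names, the file-suffix filter, and the `reserve` margin for instructions and the reply are hypothetical choices for illustration.

```python
from pathlib import Path

# Heuristic (assumption): about 4 characters per token for English text and code.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 1_000_000  # tokens


def estimate_repo_tokens(root: str, suffixes: tuple = (".py", ".md")) -> int:
    """Approximate the token count of all files under root with a matching suffix."""
    total_chars = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            # errors="ignore" skips undecodable bytes instead of raising
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_window(root: str, reserve: int = 50_000, **kwargs) -> bool:
    """True if the estimate still leaves `reserve` tokens free for prompt and reply."""
    return estimate_repo_tokens(root, **kwargs) + reserve <= CONTEXT_WINDOW
```

Usage: `fits_in_window("path/to/repo", suffixes=(".ts", ".md"))`. Keeping a reserve matters because the window is shared between the submitted code, the instructions, and the model's answer.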

The model demonstrates particular strength in "needle-in-haystack" retrieval tests, achieving 78.3% accuracy at locating specific details within massive datasets.
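Needle-in-a-haystack tests generally work by hiding one specific fact (the "needle") at a chosen depth inside a long filler document, asking the model to retrieve it, and reporting the share of correct answers. The article does not describe the setup behind the 78.3% figure, so the sketch below is a generic illustration only: `build_haystack` and `score` are hypothetical helpers, the model call itself is omitted, and substring matching is a simplification of how real evaluations grade answers.

```python
import random


def build_haystack(needle: str, filler: list, depth: float, n_sentences: int,
                   seed: int = 0) -> str:
    """Build a long document with `needle` inserted at relative depth 0.0 to 1.0."""
    rng = random.Random(seed)  # seeded so the same haystack can be rebuilt
    sentences = [rng.choice(filler) for _ in range(n_sentences)]
    position = round(depth * n_sentences)
    sentences.insert(position, needle)
    return " ".join(sentences)


def score(answers: list, expected: str) -> float:
    """Fraction of model answers containing the expected detail (case-insensitive)."""
    if not answers:
        return 0.0
    hits = sum(expected.lower() in a.lower() for a in answers)
    return hits / len(answers)
```

In a full evaluation, the haystack would be scaled toward the context limit and the needle depth varied, since retrieval accuracy often differs between the start, middle, and end of a long input.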

Accessible Power

Perhaps most surprisingly, Anthropic has introduced this capability without the premium pricing tiers that typically accompany such advancements. Both Claude Opus 4.6 and Sonnet 4.6 offer flat-rate access to the full context window.

The move pressures competitors while lowering barriers for individual developers and smaller teams seeking enterprise-grade AI assistance.

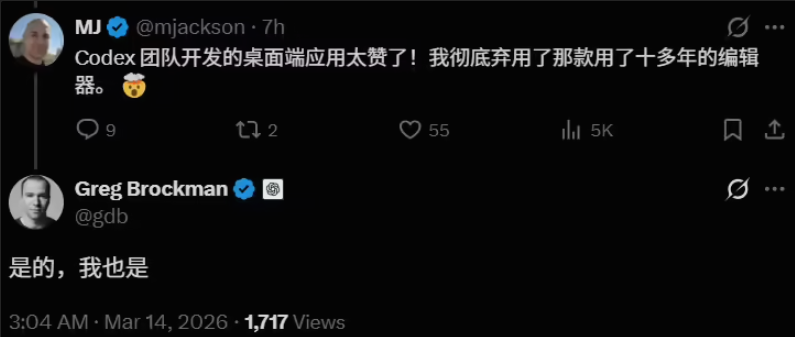

The Future of Programming Roles

The breakthrough accelerates ongoing shifts in developer responsibilities:

| Traditional Role | Emerging Reality |
|---|---|
| Code Writer | System Architect |
| Debugger | Quality Supervisor |
| Syntax Expert | Business Logic Specialist |

The larger context window lets programmers work more like conductors than laborers, orchestrating AI agents rather than writing every line by hand.

The question now becomes: How will competitors respond to this dramatic raising of the stakes?