Claude Mythos Security Claims Under Scrutiny: Only 10 Severe Vulnerabilities Found

The Reality Behind Claude Mythos' Security Claims

Anthropic's recent release of Claude Mythos sent shockwaves through the tech world, with dramatic claims about its ability to uncover security vulnerabilities. But peel back the layers, and the picture looks quite different from the marketing hype.

Questionable Math Behind 'Thousands' of Vulnerabilities

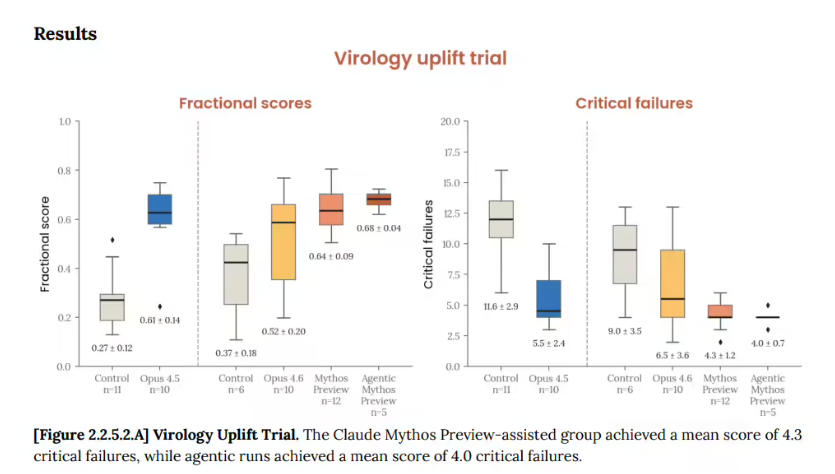

Remember those frightening headlines about Mythos discovering thousands of security flaws? Here's what wasn't mentioned: that number was an extrapolation. A 90% accuracy rate, measured on just 198 manually verified reports, was projected onto a far larger pool of unverified ones. It's like predicting a whole season's weather based on one sunny week in April.

When put to the test against 7,000 open-source software stacks, Mythos found 600 vulnerabilities. That is impressive, but far from the apocalyptic numbers initially suggested. Even more telling? Only about 10 of these posed serious risks.
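To put those figures side by side, here is a minimal back-of-the-envelope sketch in Python. It uses only the numbers quoted above, with one loudly labeled assumption: the article reports a 90% rate rather than a raw count, so `CONFIRMED_REPORTS = 178` is simply the nearest whole number of 198 and is not a published figure. The Wilson score interval is a standard way to express sampling uncertainty; nothing here suggests Anthropic computed it this way.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Figures quoted in the article.
STACKS_TESTED = 7_000
VULNS_FOUND = 600
SEVERE_VULNS = 10          # "about 10" in the article
VERIFIED_REPORTS = 198

# Assumption: only the 90% rate is reported; 178 of 198 is the nearest whole count.
CONFIRMED_REPORTS = 178

severe_share = SEVERE_VULNS / VULNS_FOUND        # fraction of findings that were severe
finds_per_stack = VULNS_FOUND / STACKS_TESTED    # average findings per stack tested
low, high = wilson_interval(CONFIRMED_REPORTS, VERIFIED_REPORTS)

print(f"Severe share of findings: {severe_share:.1%}")
print(f"Findings per stack tested: {finds_per_stack:.3f}")
print(f"95% interval on the verified accuracy rate: {low:.1%} to {high:.1%}")
```

Under these assumptions, fewer than 2 in 100 findings were severe, and the tool averaged well under one finding per stack. The interval on the accuracy rate comes out at roughly 85% to 93%, but it only captures sampling noise. It says nothing about whether the 198 verified reports were representative of the rest, which is the assumption any extrapolation depends on.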

The Noise Problem

Security teams are reporting another issue: many of the vulnerabilities Mythos flags fall into one of three categories:

- Already known and patched

- Nearly impossible to exploit with modern defenses

- So minor they're not worth addressing

"It's like getting a metal detector that beeps at every soda can tab in the park," one security engineer commented. "Sure, it's technically finding metal, but is it actually helpful?"

Why Keep Such a Powerful Tool Under Wraps?

Anthropic claims restricting access prevents security chaos, but industry watchers see other motives:

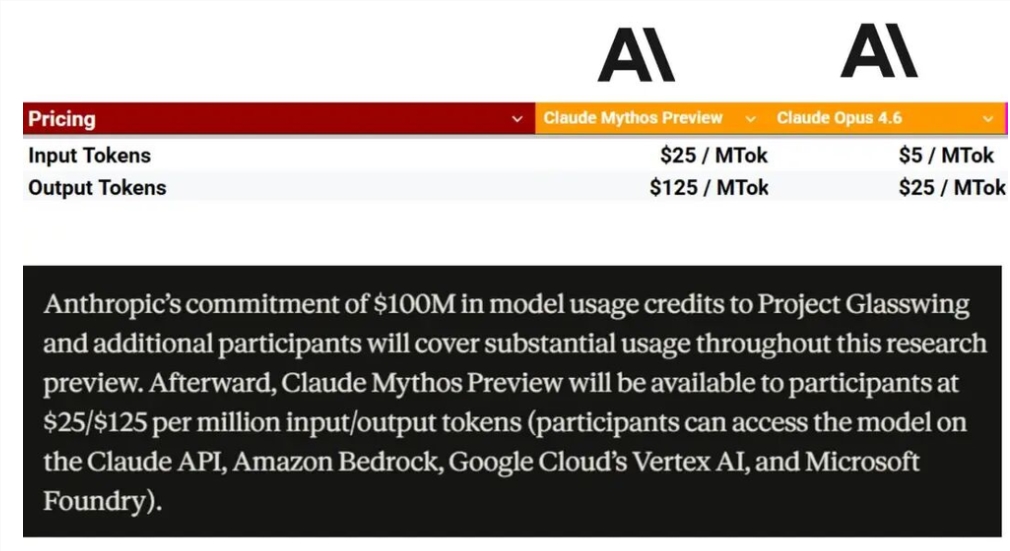

The Cost Factor: Behind the scenes, Mythos quietly appeared on Amazon and Microsoft cloud platforms, with eye-watering price tags. Running this AI isn't cheap, and widespread access might not be financially viable.

Marketing or Menace? Some see parallels to OpenAI's "AGI threat" narratives: using fear to generate buzz while limiting actual use. As one analyst put it, "Nothing sells like the promise of danger and exclusivity."

The Reputation Rollercoaster

Anthropic's technical prowess isn't in question, but its recent moves raise eyebrows:

- Users report inconsistent performance ("dumbing down")

- Unverified claims about AI "self-awareness" surface frequently

- Each new release seems to promise revolutionary capabilities that don't quite materialize

Key Points

- Actual findings: 600 vulnerabilities (not thousands), with ~10 being severe

- Practical impact: Many flagged issues add more noise than value for security teams

- Access issues: High costs likely play a bigger role in restricted access than security concerns

- Industry perception: Some view the approach as more marketing than substance

In an industry hungry for real breakthroughs, perhaps the most valuable lesson here is to separate genuine innovation from carefully crafted narratives. When evaluating AI security tools, concrete results matter more than dramatic claims.