Claude Mythos Security Claims Under Scrutiny: Only 10 Critical Vulnerabilities Found

The Reality Behind the AI Security Hype

When Anthropic unveiled Claude Mythos as the 'strongest AI' for vulnerability detection, financial institutions worldwide took notice. But new evidence suggests the system's capabilities may have been dramatically oversold. Rather than the thousands of security flaws initially claimed, rigorous testing turned up only a handful of truly critical vulnerabilities.

Questionable Math Behind the Numbers

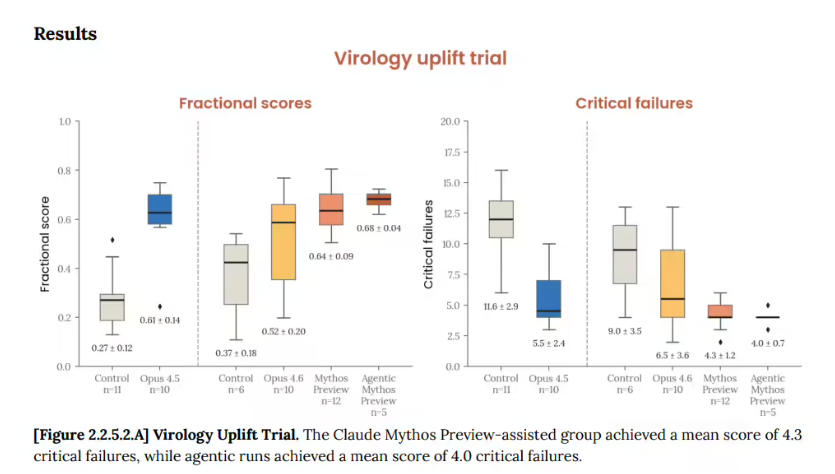

Anthropic's Project Glasswing, available only to tech giants such as Google and Microsoft, claimed that Mythos could identify thousands of security risks. However, an independent analysis by Tom's Hardware tells a different story:

- Extrapolation Over Evidence: The 'thousands of vulnerabilities' figure was extrapolated from just 198 manually verified reports

- Severity Gap: In real-world testing across 7,000 open-source software stacks, Mythos flagged 600 issues, of which only about 10 met the criteria for a serious threat

- Noise Over Signal: Many of the flagged 'vulnerabilities' were outdated issues already mitigated by modern defenses, creating unnecessary work for security teams

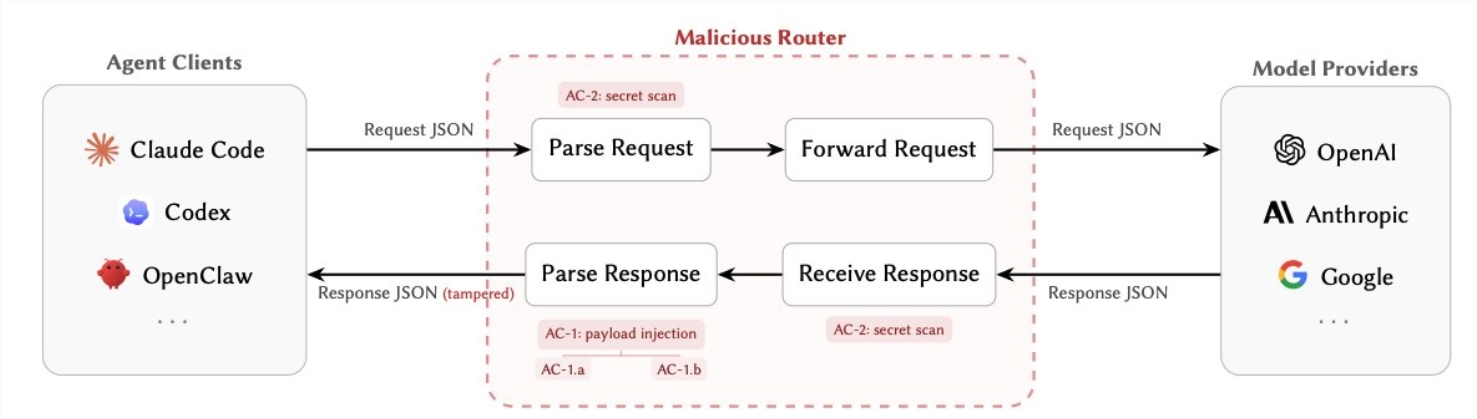

Access Limitations: Security or Strategy?

Anthropic justified limiting access to Mythos by citing potential security risks. But industry watchers see another possible explanation:

- Cost Factor: Despite claims that it was 'unsellable,' Mythos quietly appeared on Amazon and Microsoft cloud platforms, with prohibitively high operating costs

- Marketing Playbook: Critics compare this approach to OpenAI's 'AGI threat' narrative, suggesting that both companies use fear as a promotional tool in the competitive AI market

Reputation at Risk

Claude models were once considered industry leaders in programming assistance, but recent developments raise concerns:

- Users report noticeable performance declines in newer versions

- Frequent dramatic claims about AI capabilities are fostering skepticism about the company's credibility

- Some experts warn that overhyping unproven capabilities could backfire as real-world results fall short

Key Points

- Claude Mythos identified only about 10 severe vulnerabilities in extensive testing

- The initial 'thousands of vulnerabilities' claim relied heavily on extrapolation from just 198 manually verified reports

- High operating costs may explain limited access more than security concerns

- Industry experts caution against AI fear-mongering as a marketing tactic

As the AI field matures, there's growing demand for transparent, verifiable performance metrics over sensational claims. The Mythos case illustrates why concrete results matter more than marketing narratives in this rapidly evolving industry.