AI Clash: Anthropic's Brief Ban on OpenClaw Founder Sparks Debate

AI Showdown: When Security Measures Spark Controversy

The AI world was buzzing last week when Anthropic briefly suspended the account of Peter Steinberger, founder of the open-source project OpenClaw. What began as a routine security flag turned into a public relations headache for the AI giant, raising questions about how platforms govern access in the age of large language models.

The Incident That Shook the AI Community

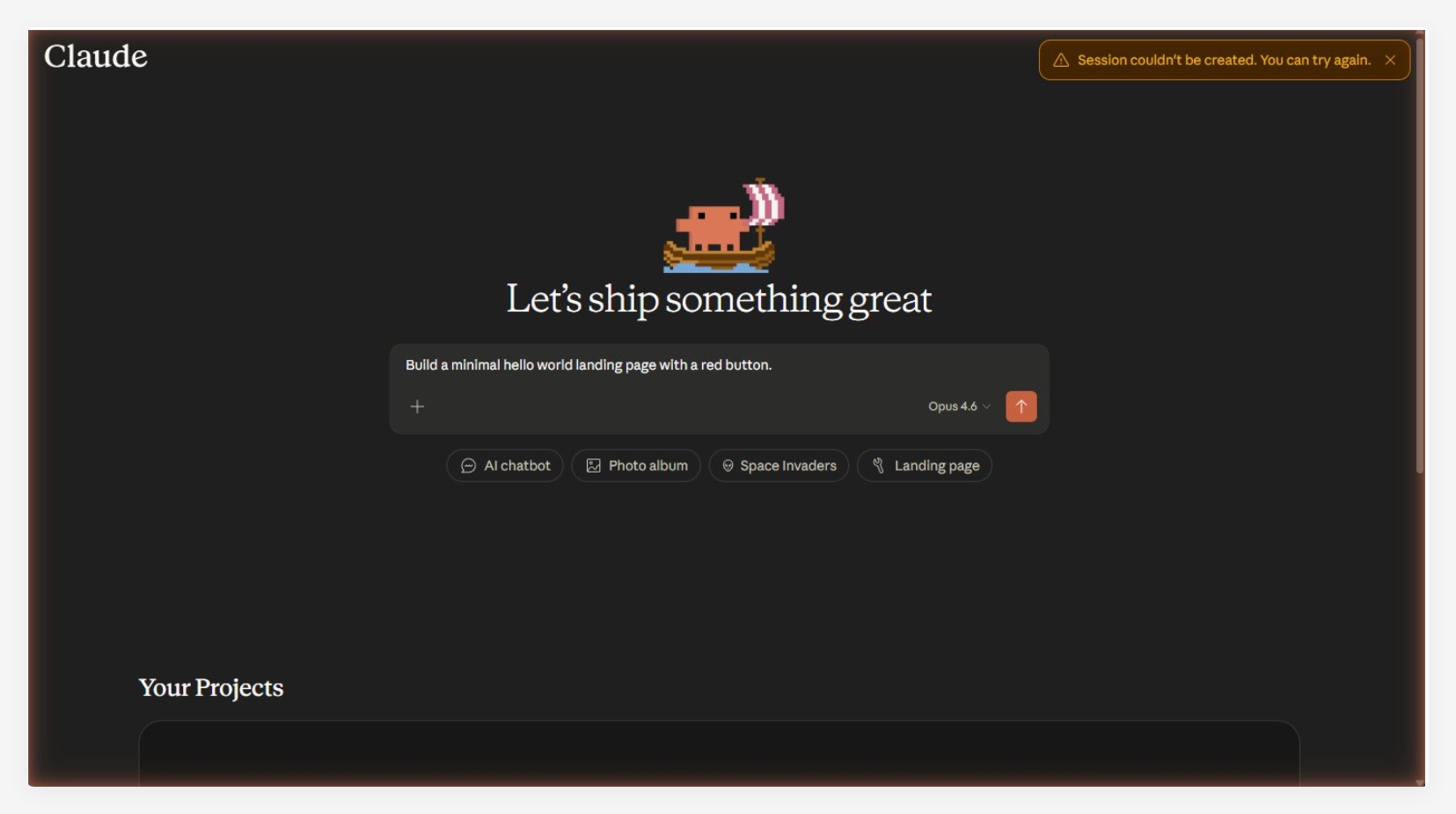

Steinberger took to X to share his frustration after receiving notice that his personal Claude account had been suspended due to "suspicious activity." The accompanying screenshot of Anthropic's email showed the company's security team citing potential policy violations, though it did not specify which rules might have been broken.

"Imagine paying for a service, following all the rules, then getting locked out without explanation," Steinberger later told followers. His account was restored within two hours, but the damage to trust had been done.

Competing Interests Collide

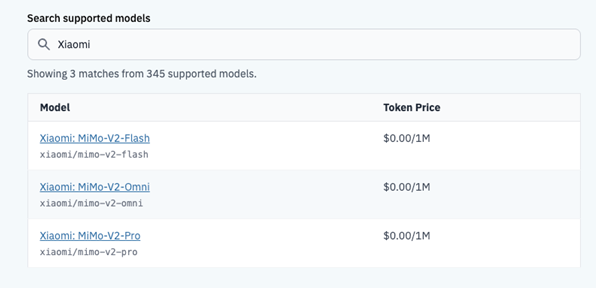

The situation grew more complicated when users pointed out that Steinberger works at OpenAI, Anthropic's main competitor. Some questioned why an OpenAI employee would need access to Claude at all. Steinberger countered that he was simply doing comparative research as a paying customer, a common practice in the AI development community.

"We need to study multiple models to push the field forward," he argued. "This isn't about corporate loyalty - it's about advancing AI responsibly."

Behind the Scenes: Automated Systems vs Human Judgment

Anthropic engineer Thariq Shihipar later joined the conversation, suggesting the ban might have been triggered by an oversensitive abuse detection algorithm. "Our security systems sometimes err on the side of caution," he admitted, offering to personally assist Steinberger with any future access issues.

This explanation satisfied some observers but left others uneasy. If even prominent developers can get caught in automated security nets, what does that mean for smaller researchers without industry connections?

The Bigger Picture: Open Source in a Proprietary World

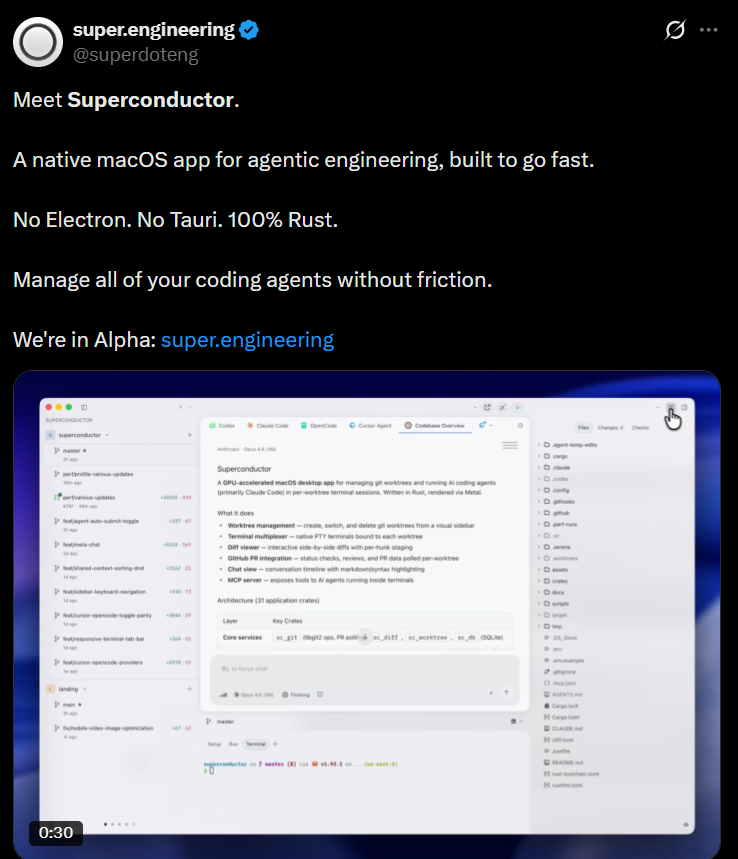

The brief confrontation highlights an ongoing tension in AI development. While companies like Anthropic invest billions in model development, open-source projects like OpenClaw attempt to democratize access to these technologies. When platform policies change or access gets restricted, these independent efforts often bear the brunt.

As one developer commented: "We're building the future of AI on platforms that can revoke our access at any time. That should worry everyone."

Key Points:

- Two-hour ban on OpenClaw founder's Anthropic account sparked industry debate
- Conflicting explanations emerged about the suspension's cause
- Open-source challenges in a proprietary AI ecosystem come into focus
- Security automation versus developer access remains a contentious issue
- Industry veteran suggests incident reflects growing pains in AI governance