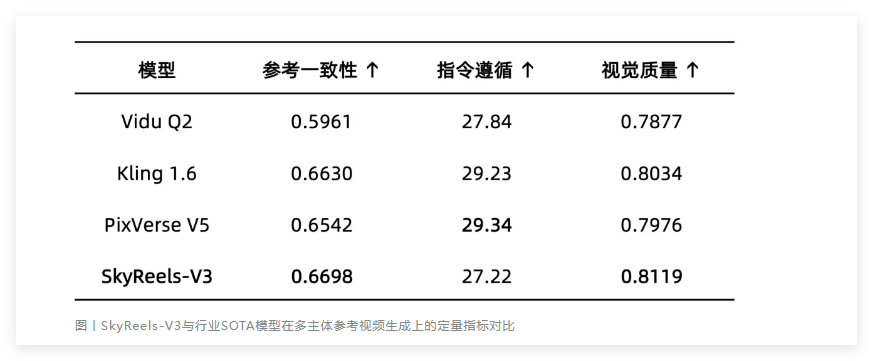

Ant Lingbo Releases Massive 2.71TB Depth Dataset for AI Vision

Ant Lingbo's Open-Source Move Could Transform Computer Vision

In a significant boost for AI research, Ant Lingbo Technology has released the LingBot-Depth-Dataset, a massive collection of depth perception data that is set to accelerate advances in spatial AI. Clocking in at 2.71TB, the resource dwarfs previous offerings with its 3 million high-quality sample pairs, two-thirds of which (about 2 million) come from real-world environments.

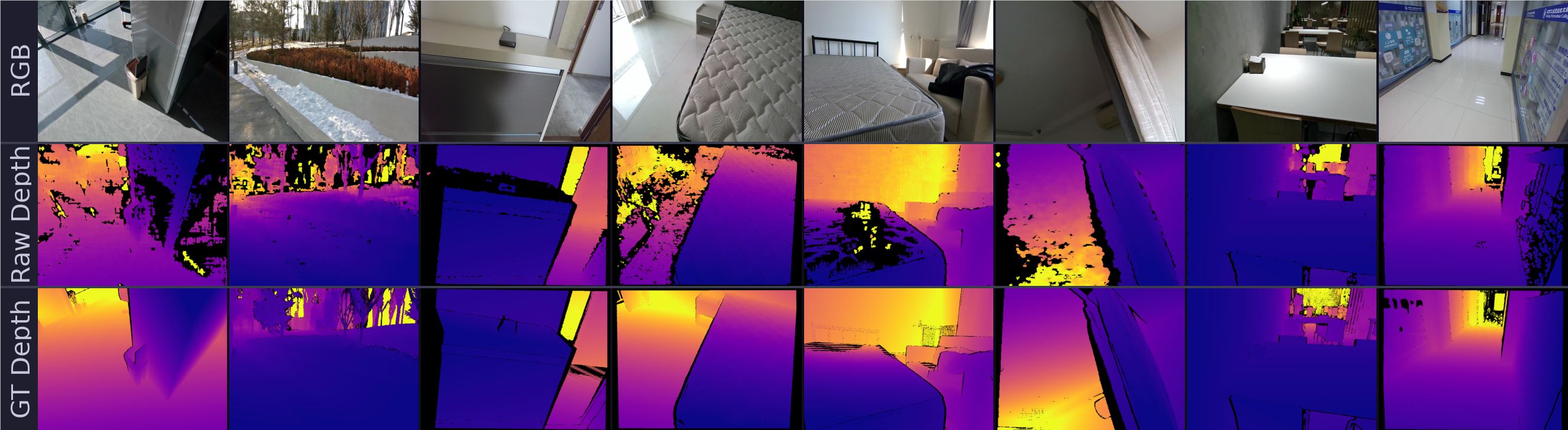

(Sample images from the LingBot-Depth-Dataset showing RGB images, raw sensor data, and processed depth maps. The dataset provides both raw and ground truth depth information for robust model training.)

Filling a Critical Gap in AI Research

For years, computer vision researchers have struggled with limited, synthetic-heavy datasets that poorly represent real-world conditions. "Most available datasets are like training swimmers in a kiddie pool," explains Dr. Wei Zhang, a computer vision researcher at Tsinghua University. "They simply don't prepare models for the messy complexity of actual environments."

The LingBot dataset changes this by offering:

- Real-world diversity: Captured across varied lighting and material conditions

- Hardware breadth: Covers six popular depth cameras, including Orbbec and Intel RealSense models

- Complete data packages: Each sample includes RGB images plus both raw and processed depth maps
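To make the "complete data package" idea concrete, here is a minimal Python sketch of what one sample might look like in memory. The article does not describe the dataset's actual file layout, so the `DepthSample` class, its field names, the use of metres, and the convention that `0.0` marks a missing reading are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

DepthMap = List[List[float]]  # row-major depth values (metres assumed)


@dataclass
class DepthSample:
    """Hypothetical container for one sample; field names are illustrative."""
    rgb: List[List[Tuple[int, int, int]]]  # H x W grid of (r, g, b) pixels
    raw_depth: DepthMap                    # sensor output, may contain holes
    processed_depth: DepthMap              # cleaned-up depth used as the target


def missing_fraction(depth: DepthMap, invalid: float = 0.0) -> float:
    """Share of pixels with no reading, assuming `invalid` marks a hole."""
    total = sum(len(row) for row in depth)
    if total == 0:
        return 0.0
    holes = sum(1 for row in depth for value in row if value == invalid)
    return holes / total


# Tiny 2 x 2 toy sample; the values are made up, not taken from the dataset.
sample = DepthSample(
    rgb=[[(120, 110, 100), (121, 111, 101)], [(90, 80, 70), (91, 81, 71)]],
    raw_depth=[[1.5, 0.0], [0.0, 2.0]],
    processed_depth=[[1.5, 1.6], [1.9, 2.0]],
)
print(missing_fraction(sample.raw_depth))        # 0.5
print(missing_fraction(sample.processed_depth))  # 0.0
```

Keeping the raw and processed maps side by side is what lets a model learn to fill the holes a real sensor leaves behind: the raw map is the input, the processed map is the target.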

From Lab to Living Room: Practical Applications

The impact extends far beyond academic circles. Ant Lingbo's own LingBot-Depth model, trained on this dataset, already shows remarkable improvements:

- 70% better accuracy in indoor scene depth prediction compared to leading methods

- 47% error reduction in handling sparse or incomplete depth data
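The article does not say which metric sits behind these percentages. As a rough illustration of how an "error reduction" figure is usually derived, the Python sketch below uses mean absolute relative error (AbsRel), a common depth metric chosen here as an assumption, and toy numbers that are not from the dataset.

```python
from typing import List, Optional


def abs_rel(pred: List[float], target: List[Optional[float]]) -> float:
    """Mean of |pred - target| / target over pixels that have a valid target."""
    ratios = [
        abs(p - t) / t
        for p, t in zip(pred, target)
        if t is not None and t > 0
    ]
    if not ratios:
        raise ValueError("no valid target pixels")
    return sum(ratios) / len(ratios)


def relative_reduction(baseline_error: float, new_error: float) -> float:
    """Fraction by which the error dropped relative to the baseline."""
    return (baseline_error - new_error) / baseline_error


# Toy values in metres; None marks a pixel with no ground-truth depth.
target = [2.0, 4.0, None, 1.0]
baseline_pred = [2.5, 5.0, 3.0, 1.25]    # 25% off on every valid pixel
improved_pred = [2.25, 4.5, 3.0, 1.125]  # 12.5% off on every valid pixel

baseline_error = abs_rel(baseline_pred, target)
improved_error = abs_rel(improved_pred, target)
reduction = relative_reduction(baseline_error, improved_error)
print(f"{reduction:.0%} error reduction")  # 50% error reduction
```

Read this way, a reported 47% error reduction would mean the new model's error is a little over half of the baseline's on the same test data, whatever metric was actually used.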

Perhaps most exciting is how this could democratize advanced computer vision. "With this dataset," notes robotics engineer Maria Chen, "even budget depth cameras can achieve performance rivaling premium industrial equipment - no hardware upgrades needed."

Why This Matters for AI's Future

As embodied AI systems move into homes and workplaces, their ability to understand physical spaces becomes crucial. This dataset provides the missing link between laboratory research and real-world deployment.

The open-source approach is particularly significant. By removing the barriers of expensive data collection, Ant Lingbo is enabling:

- Faster iteration for academic researchers

- More robust testing across different hardware platforms

- Accelerated development of practical applications

"We're not just sharing data," says Ant Lingbo's project lead. "We're helping build the foundation for the next generation of spatial computing."

Key Points:

- Scale: 2.71TB dataset with 3 million sample pairs (2M real-world)

- Versatility: Covers six major depth camera models

- Performance: Enables dramatic accuracy improvements in depth perception

- Accessibility: Open-source availability lowers barriers for researchers worldwide