AI Video Editing Just Got Easier: Create Stunning Social Media Clips in Minutes

The Rise of 'Vibe Editing': AI Simplifies Video Creation

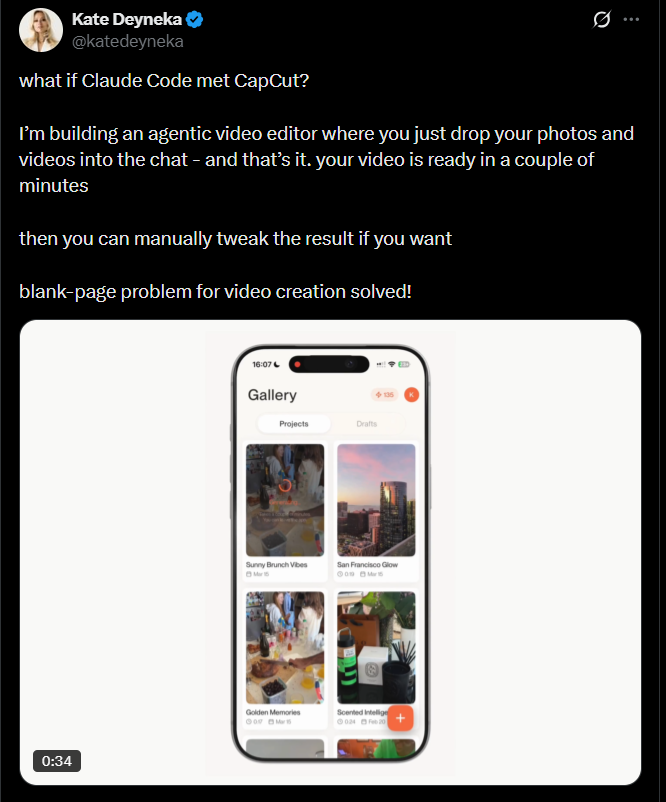

Remember when editing videos meant wrestling with complicated software? Those days might be ending thanks to a new generation of AI tools that understand not just technical commands but also creative intent. Dubbed 'vibe editing,' this approach lets anyone create polished social media videos through simple conversations with an AI assistant.

How It Works: Editing Through Conversation

The process couldn't be simpler:

- Upload your raw material: vacation clips, party photos, or even livestream recordings

- Describe your vision in plain language ("Make a dreamy travel montage with lo-fi beats")

- Tweak as needed ("Slow down the opening shots" or "Add retro filters")

- Export and share directly to your favorite platforms

"What used to take me hours now happens in minutes," reports one early adopter. "It's like having a professional editor who speaks my language."

Why Traditional Tools Fall Short

Professional editing software has always demanded technical skills most casual users don't have. Even simpler apps require learning their interfaces and terminology. Meanwhile, our phones fill up with unused footage because we lack the time or confidence to edit it.

Existing AI solutions often miss the mark too. They might auto-cut highlights or add captions but fail to capture the emotional tone creators want. Vibe editing aims to bridge this gap by focusing on mood rather than mechanics.

The Players Shaping This Space

Several platforms are leading the charge:

- Descript's AI Agent pioneered many vibe-editing features now considered essential

- Newcomers like Topview and Mobbi AI offer browser-based solutions for marketing and social content

- Meta quietly launched Vibes last year, integrating advanced AI models for short-form video

- Independent developers continue pushing boundaries with open-source alternatives

The common thread? All recognize that understanding creative intent matters more than technical prowess in today's content landscape.

Key Points:

- Vibe editing uses natural language instead of complex interfaces

- AI handles color grading, music selection, pacing, and transitions automatically

- Major platforms and indie tools alike are adopting this approach

- The technology particularly benefits casual creators and small businesses

- Future updates may allow even more nuanced creative control through conversation