AI Blind Spot: How Hackers Trick Chatbots with Sneaky Font Tricks

When Fonts Fool AI: The Hidden Threat in Plain Sight

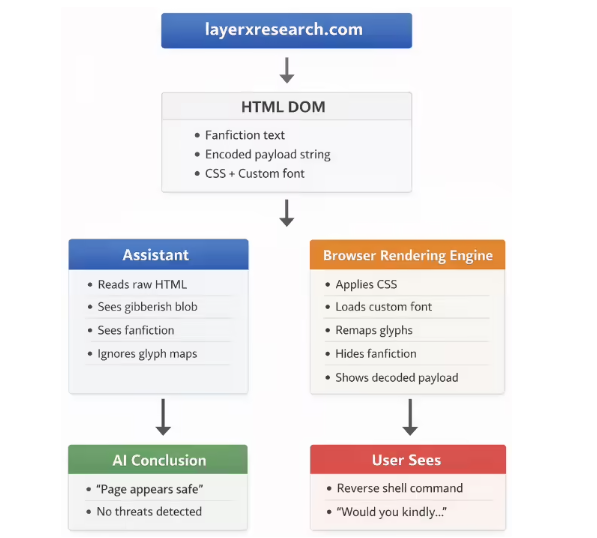

Security firm LayerX has demonstrated a disturbing new way attackers can exploit AI's blind spots, using something as mundane as fonts and web styling. Dubbed "font poisoning," the technique reveals how easily we can be misled by what appears on our screens.

How the Scam Works

The attack plays on a fundamental gap between what AI systems analyze and what human eyes see. Here's the clever trick:

- Font File Manipulation: Hackers create custom fonts that remap glyphs, so characters that look like gibberish in the page's underlying HTML are drawn on screen as readable commands.

- Visual Sleight of Hand: Using CSS tricks, attackers shrink the harmless decoy text until it is effectively invisible, while enlarging the malicious payload so it looks like the page's legitimate content.

- The Dangerous Result: The AI reads the harmless underlying page text, while users see carefully crafted dangerous instructions.
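The gap between the two views can be illustrated with a toy Python model. This is not LayerX's proof of concept: a real attack edits the glyph tables inside a font file, whereas here a ROT13 letter swap stands in for the poisoned font, the harmless string "echo hello" stands in for a dangerous command, and `Span`, `REMAP` and the 4px visibility threshold are hypothetical names and values chosen for illustration.

```python
import string
from dataclasses import dataclass

# Toy stand-in for a poisoned font (assumption): the font draws each letter as the
# letter 13 places further on. Real attacks edit glyph tables inside a font file.
_LO, _UP = string.ascii_lowercase, string.ascii_uppercase
REMAP = str.maketrans(_LO + _UP, _LO[13:] + _LO[:13] + _UP[13:] + _UP[:13])

# Hypothetical threshold: text smaller than this is unreadable to a person.
MIN_VISIBLE_PX = 4.0


@dataclass
class Span:
    text: str              # characters actually stored in the HTML
    font_size_px: float    # size applied by CSS
    font: str = "default"  # "poisoned" means the remapping font is applied


def what_the_ai_reads(page: list[Span]) -> str:
    # A model fed the page's text sees raw characters; fonts and sizes are ignored.
    return " ".join(span.text for span in page)


def what_the_user_sees(page: list[Span]) -> str:
    shown = []
    for span in page:
        if span.font_size_px < MIN_VISIBLE_PX:
            continue  # shrunk by CSS until it is effectively invisible
        # The browser draws poisoned-font text through the remapped glyphs.
        shown.append(span.text.translate(REMAP) if span.font == "poisoned" else span.text)
    return " ".join(shown)


# ROT13 is its own inverse, so encoding the on-screen text gives the stored "gibberish".
payload_stored = "Run this: echo hello".translate(REMAP)  # -> "Eha guvf: rpub uryyb"

page = [
    Span("Nothing to see here, just a harmless easter egg page.", font_size_px=0.5),
    Span(payload_stored, font_size_px=28, font="poisoned"),
]

print(what_the_ai_reads(page))
print(what_the_user_sees(page))
```

The AI's view contains only the decoy sentence and meaningless letters, so a "safe" verdict is reasonable for the text it actually received; the command exists only in the rendered output the user sees.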

In one chilling demonstration, LayerX created a fake game easter egg page. When asked to evaluate the page's code, systems like ChatGPT confidently declared it "completely safe," failing to spot the reverse shell command rendered on screen for the user but invisible to the AI, a command that could give attackers full control of the victim's device.

The Industry's Mixed Response

When LayerX sounded the alarm in December 2025, reactions varied wildly:

- Microsoft emerged as the standout responder, promptly fixing Copilot's vulnerability.

- Google initially flagged it as high-risk, then downgraded its assessment, calling it "over-reliance on social engineering."

- Other providers largely shrugged it off as outside their security scope.

This disparity raises important questions about responsibility in our AI-powered world. If tech giants can't agree on what constitutes a real threat, how can everyday users know what to trust?

Protecting Yourself in an Age of AI Deception

Security experts offer sobering advice: never take an AI's safety assessment at face value when dealing with web scripts or code. A "harmless" verdict only covers the text the AI was given, which may not match what your screen actually displays.
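Acting on that advice can start with checking a page for the styling tricks that font poisoning relies on before trusting any verdict. The Python sketch below is a crude heuristic, not a tool LayerX or any vendor provides: the function name `hidden_text_red_flags`, the regular expressions and the thresholds (4px minimum size, 16px per em) are assumptions. Expect false positives, since many legitimate sites load web fonts, and misses, since CSS can be obfuscated or pulled from external stylesheets.

```python
import re

# Assumed threshold: text rendered smaller than this is unreadable to a person.
MIN_READABLE_PX = 4.0
# Assumed conversions to pixels (16px root font size for em/rem). "" is unitless.
PX_PER_UNIT = {"px": 1.0, "pt": 4 / 3, "em": 16.0, "rem": 16.0, "": 1.0}

FONT_FACE = re.compile(r"@font-face", re.IGNORECASE)
FONT_SIZE = re.compile(r"font-size\s*:\s*(\d*\.?\d+)\s*([a-z%]*)", re.IGNORECASE)
# The lookbehind skips properties like background-color.
TRANSPARENT = re.compile(r"(?<![-\w])color\s*:\s*transparent", re.IGNORECASE)
ZERO_OPACITY = re.compile(r"opacity\s*:\s*0(?:\.0+)?(?![\d.])", re.IGNORECASE)


def hidden_text_red_flags(html: str) -> list[str]:
    """Return warnings for CSS patterns that can hide text or swap glyphs."""
    flags = []
    if FONT_FACE.search(html):
        flags.append("page loads a custom font (@font-face); rendered text may differ from source")
    for value, unit in FONT_SIZE.findall(html):
        factor = PX_PER_UNIT.get(unit.lower())
        if factor is None:
            continue  # units such as % or vw are not handled by this sketch
        if float(value) * factor < MIN_READABLE_PX:
            flags.append(f"near-invisible text: font-size {value}{unit}")
    if TRANSPARENT.search(html):
        flags.append("transparent text color")
    if ZERO_OPACITY.search(html):
        flags.append("element with zero opacity")
    return flags


# Hypothetical page styling mirroring the attack: a custom font, a tiny decoy, a large payload.
sample = """
<style>
  @font-face { font-family: "Egg"; src: url("egg.woff2"); }
  .decoy { font-size: 0.5px; }
  .payload { font-family: "Egg"; font-size: 28px; }
</style>
"""

for warning in hidden_text_red_flags(sample):
    print(warning)
```

A clean scan does not prove a page is safe; it only removes one reason for suspicion.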

The font poisoning case serves as a wake-up call - even our most advanced technologies have surprising vulnerabilities when human creativity meets machine limitations.

Key Points:

- Hackers exploit font rendering differences to trick AI systems

- The text AI reads looks harmless, while users see malicious commands on screen

- Microsoft patched Copilot; other vendors responded inconsistently

- Users should verify AI safety assessments of suspicious code