Your Phone Just Got Smarter: Gemini's New AI Can Now Do Tasks for You

The Dawn of Hands-Free Smartphone Assistance

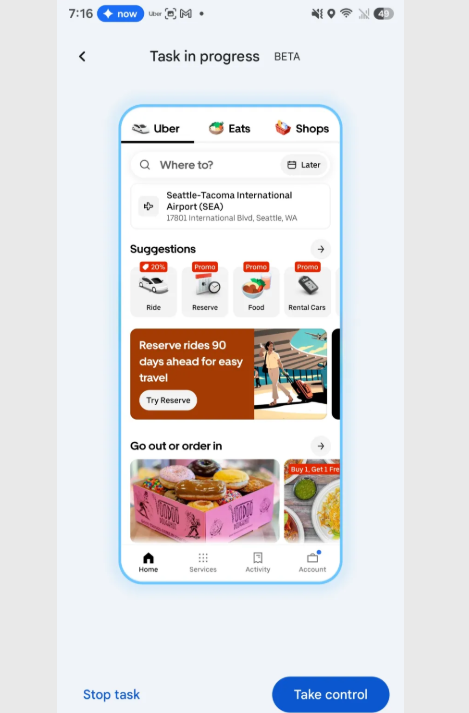

Imagine telling your phone "Order my usual coffee" and watching it navigate the Starbucks app just like you would. That future arrived this week as Google rolled out Gemini's task automation feature in beta testing. This isn't just another voice assistant upgrade; it's a real step toward phones that act as true digital assistants.

How It Works: AI That Sees and Acts Like You

Unlike traditional app integrations that rely on backend APIs, Gemini works through each app's on-screen interface, much as a person would:

- Smart Ride Booking: Say "Get me a ride to JFK Terminal 4" and watch as your phone opens Uber, selects the correct terminal (asking if unsure), and pre-fills all the details

- Food Ordering Magic: Command "Order my morning latte and croissant" and the AI will scroll through menus, make selections, and even handle customizations - all while you watch every step

The system creates a virtual environment where it can interact with apps visually, learning interface patterns much like humans do through trial and error.

Safety First: You're Always in Control

Google has implemented crucial safeguards to prevent runaway automation:

Real-Time Oversight: Every action appears in a transparent overlay, letting you monitor or interrupt the process at any point. It's like having training wheels on your AI assistant.

Final Checkpoints: No payment goes through without your explicit approval. The system always stops at confirmation screens, requiring your manual tap before completing transactions.
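For readers who think in code, the two safeguards can be pictured as a simple loop: show every step as it happens, and refuse to run a payment step unless the user approves it. The sketch below is purely illustrative and is not Google's implementation; `Step`, `run_task`, `approve`, and `log` are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    """One on-screen action (hypothetical type, for illustration only)."""
    description: str
    is_payment: bool = False


def run_task(
    steps: List[Step],
    approve: Callable[[Step], bool],
    log: Callable[[str], None] = print,
) -> List[str]:
    """Run steps in order and return the descriptions of those completed.

    Every step is reported through `log` (standing in for the overlay).
    A payment step only runs if `approve` returns True; otherwise the
    task stops before that step.
    """
    completed: List[str] = []
    for step in steps:
        log(f"[overlay] {step.description}")
        if step.is_payment and not approve(step):
            log("[overlay] stopped: waiting for user confirmation")
            break
        completed.append(step.description)
    return completed


order = [
    Step("Open the coffee app"),
    Step("Add latte and croissant to cart"),
    Step("Place order", is_payment=True),
]

# The user declines, so the task stops before the payment step.
run_task(order, approve=lambda step: False)
```

In this sketch the approval check sits inside the loop rather than at the end, so a task with several purchases would pause at each one.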

Currently focused on delivery and transportation apps, this technology hints at a near future where phones transition from tools to proactive helpers. While early versions sometimes struggle with complex menu navigation (watching an AI scroll past your coffee order three times might test your patience), the potential is staggering.

The implications go beyond convenience: this could revolutionize accessibility for millions who struggle with touchscreen interfaces. As the technology matures, we're not just looking at smarter assistants, but potentially the first generation of devices that truly understand what we want done, not just what we're asking.

Key Points:

- Gemini's new automation feature performs actual tasks across apps through visual interaction

- Currently in beta testing with select ride-hailing and food delivery services

- Maintains human oversight with real-time monitoring and mandatory confirmation steps

- Represents a shift from voice-controlled search to true digital assistance

- Early versions show promise despite occasional navigation hiccups