xAI's Grok 4.20 Bets on Honesty Over Hype in AI Race

xAI Takes a Stand for Truth in AI

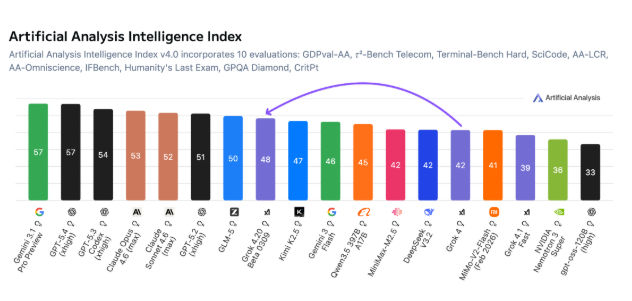

In an industry obsessed with benchmark scores, Elon Musk's xAI is making a bold bet on something more fundamental: trust. The newly released Grok 4.20 model may not top every performance chart, but it's setting new standards for honesty in artificial intelligence.

The Truthfulness Benchmark

Independent tests by Artificial Analysis point to Grok 4.20's standout feature: its remarkably low hallucination rate. The model scored 78% on the "non-hallucination" measure, putting it ahead of competitors on the metric that matters most for reliability.

"What good is a brilliant answer if it's fiction?" asks xAI's lead researcher. "We're building AI that knows when to say 'I don't know' instead of making things up."

Three Ways to Work Smarter

xAI offers tailored solutions through three distinct API modes:

- Reasoning Mode: For deep analysis where accuracy trumps speed

- Standard Mode: Balanced performance for everyday tasks

- Multi-agent Mode: Complex problem-solving through multiple agents working together

The approach mirrors how people adapt their thinking style to different challenges, a refreshing departure from one-size-fits-all AI.

More Value, Lower Cost

Grok 4.20 delivers practical advantages beyond its truth-telling:

- Massive context window: Processes up to 2 million tokens, enough for entire books or large codebases

- Competitive pricing: At $2 to $6 per million tokens, it undercuts many rivals while offering more capability

The model particularly shines in professional settings where factual errors carry real consequences, such as legal research, financial analysis, and technical documentation.

The Reliability Revolution?

As one industry analyst notes: "While others chase artificial general intelligence, xAI solves today's practical problems. Grok won't pretend to know everything, and that makes it uniquely valuable."

The launch signals a potential shift in AI priorities from raw power to dependable performance. For businesses tired of fact-checking their AI assistants, Grok 4.20 offers a compelling alternative.

Key Points:

- Record-low hallucination rate (78% non-hallucination score in Artificial Analysis tests)

- Three specialized modes for different use cases

- 2-million-token context window handles large documents with ease

- Cost-effective pricing starts at $2 per million tokens