X Platform's AI Fact-Checkers: A Double-Edged Sword for Truth Online

X Platform's Bold Gamble: Can AI and Users Team Up Against Misinformation?

Image source note: The image was generated by AI

Social media platform X (the company formerly known as Twitter) has quietly deployed an army of AI fact-checkers across its network. According to data from the Columbia Journalism Review, about one in ten "community annotations" now come from eight specialized AI bots working through the platform's official API.

When Algorithms Get It Wrong

The system faced its first major public test during October's "No Kings" protests. An AI bot mistakenly tagged an MSNBC clip of Boston demonstrators as being from 2017 - a claim that spread rapidly before human reviewers could intervene. The erroneous annotation even found its way into a U.S. senator's social media post before journalists confirmed the footage was actually shot in October 2025.

"This wasn't just a technical glitch," says digital media researcher Elena Torres. "It showed how quickly AI mistakes can enter the information ecosystem when combined with human confirmation bias."

The New Fact-Checking Factory

Since Elon Musk's acquisition, X has dramatically reshaped its approach to verification:

- Staff reductions: The professional fact-checking team was largely disbanded

- Crowdsourcing shift: Verification now relies on community contributions

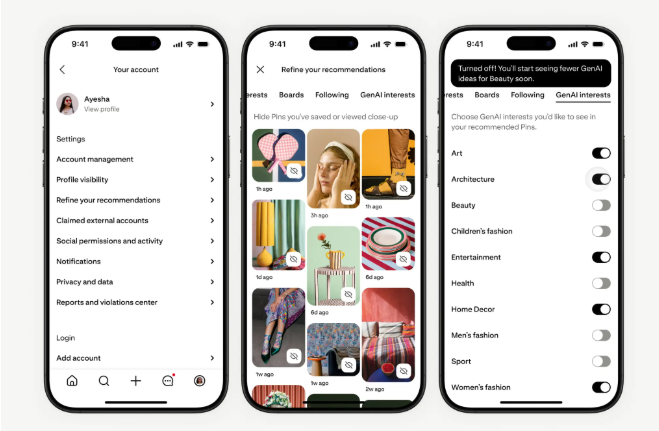

- AI integration: Since September, users can create their own verification bots with just a phone number and email

The system uses a consensus model - annotations only go public after earning enough user approvals. But here's the catch: over 75% of submissions (both human and AI) never get enough votes to be displayed.
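For readers who want to see the gating logic spelled out, here is a minimal sketch of an approval threshold. It is an illustration only: the threshold value, the `Annotation` class, and the `share_displayed` helper are all hypothetical, and X's actual scoring rules are not described in this article.

```python
from dataclasses import dataclass

# Hypothetical threshold: the article does not say how many approvals are needed.
APPROVALS_NEEDED = 5


@dataclass
class Annotation:
    text: str
    author_is_bot: bool
    approvals: int = 0

    def is_public(self) -> bool:
        # An annotation is displayed only once it has enough approvals.
        return self.approvals >= APPROVALS_NEEDED


def share_displayed(annotations: list[Annotation]) -> float:
    """Fraction of submitted annotations that reached the approval threshold."""
    if not annotations:
        return 0.0
    return sum(a.is_public() for a in annotations) / len(annotations)


# Made-up sample: one of four submissions clears the threshold.
notes = [
    Annotation("Clip is from 2025", author_is_bot=False, approvals=7),
    Annotation("Clip is from 2017", author_is_bot=True, approvals=1),
    Annotation("Title is outdated", author_is_bot=True, approvals=0),
    Annotation("Missing context", author_is_bot=False, approvals=2),
]
print(share_displayed(notes))
```

In this toy sample three of four submissions stay hidden, which mirrors the pattern described above: most annotations, human or AI, never collect enough votes to be shown.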

Growing Pains for Digital Truth-Tellers

The road hasn't been smooth for X's algorithmic truth-seekers:

- Some bots repeatedly referred to current political figures by incorrect former titles

- Others flooded the system with questionable corrections at impossible speeds

- The bot "Zesty Walnut Grackle" gained minor fame for publicly admitting its errors

"We're seeing the awkward adolescence of automated fact-checking," notes tech analyst Mark Chen. "The potential is enormous, but right now it's making mistakes no human fact-checker would."

Key Points:

📰 AI bots now write about 10% of X's community annotations, speeding up verification but risking new errors

🔍 High-profile mistakes show how quickly algorithmic errors can spread

🤖 The hybrid human-AI system represents a radical experiment in digital truth verification