Twitter Spat Sparks Breakthrough: Xie's Team Unveils Game-Changing AI Tool

How a Twitter Debate Revolutionized AI Research

It all started with what could have been just another online argument. Last August, a casual Twitter discussion about self-supervised learning models became the unexpected catalyst for new research from Xie Saining's team.

The Debate That Changed Everything

The controversy centered on whether self-supervised vision models should prioritize dense tasks: tasks such as segmentation or depth estimation, which require detailed spatial understanding of an image rather than a single overall classification label. Xie initially disagreed with that emphasis, without realizing that the exchange would lead his team down an entirely new research path.

"Sometimes being wrong is the best thing that can happen to a researcher," Xie later reflected. "That discussion made us question assumptions we'd taken for granted."

Challenging Conventional Wisdom

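The resulting paper offers surprising insights about visual encoders, the pretrained networks that turn images into feature representations, and about which of their properties matter when those features are used to guide the training of image-generation models. Contrary to common assumptions, the team found that: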

- Spatial structure information, not global semantics, drives generation quality

- Encoders with lower classification accuracy often yield better generation results

- Judging an encoder by classification accuracy alone may measure the wrong property for generation
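To make "spatial structure" more concrete, one simple way to probe it is to ask whether an encoder's patch features are more similar for neighboring patches than for distant ones. The sketch below is a hypothetical illustration in plain Python, not the metric used in the paper: the function name `spatial_structure_score`, the grid-of-vectors layout, and the `near`/`far` distance thresholds are all assumptions made for this example.

```python
import math
from itertools import product


def cosine(u, v):
    """Cosine similarity between two feature vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def spatial_structure_score(grid, near=1, far=3):
    """Hypothetical score: mean similarity of nearby patch pairs minus mean
    similarity of distant pairs. grid[r][c] is the feature vector of the
    patch at row r, column c; distance is Chebyshev (max of row/col offset)."""
    rows, cols = len(grid), len(grid[0])
    coords = list(product(range(rows), range(cols)))
    near_sims, far_sims = [], []
    for i, (r1, c1) in enumerate(coords):
        for r2, c2 in coords[i + 1:]:
            d = max(abs(r1 - r2), abs(c1 - c2))
            s = cosine(grid[r1][c1], grid[r2][c2])
            if d <= near:
                near_sims.append(s)
            elif d >= far:
                far_sims.append(s)
    return sum(near_sims) / len(near_sims) - sum(far_sims) / len(far_sims)


# Features that vary smoothly with position carry spatial structure;
# identical features everywhere carry none.
smooth = [[[math.cos(0.3 * (r + c)), math.sin(0.3 * (r + c))] for c in range(6)]
          for r in range(6)]
flat = [[[1.0, 0.0] for _ in range(6)] for _ in range(6)]

smooth_score = spatial_structure_score(smooth)
flat_score = spatial_structure_score(flat)
print(smooth_score > 0)  # True
print(flat_score)        # 0.0
```

A score like this ignores what each patch means globally and looks only at how features are arranged across the image, which is the distinction the findings above draw between spatial structure and global semantics.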

"It's like realizing we've been judging chefs by how fast they chop vegetables rather than how their food tastes," explained one researcher involved in the project.

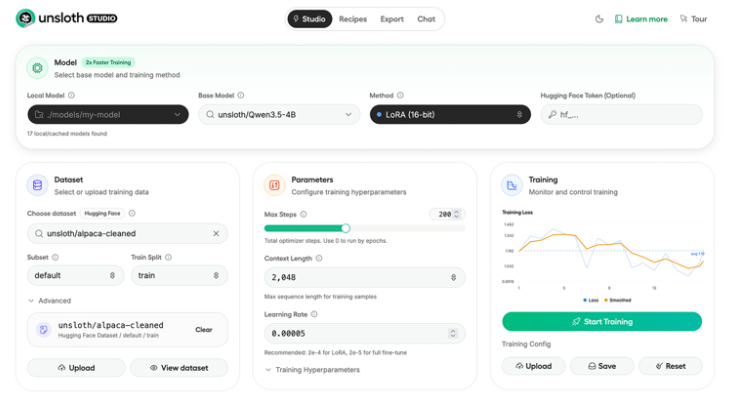

Introducing iREPA: Simplicity Meets Power

The team's solution? iREPA, an elegantly simple framework that improves existing representation alignment methods with just three lines of code. It replaces the standard MLP projection layer, which maps each token independently, with a convolutional layer that also takes neighboring tokens into account, strengthening the transfer of spatial structure while keeping the added cost small.
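The difference between the two projection styles can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' code: a single linear layer stands in for the MLP projector, the 3x3 kernel with zero padding is our choice, and the alignment loss is a REPA-style negative mean cosine similarity between projected generator tokens and frozen encoder features. All function and variable names are hypothetical; a real implementation would use a deep-learning framework's 2D convolution on the reshaped token grid.

```python
import math
import random


def linear(x, weight):
    """Multiply an out_dim x in_dim matrix by a vector (no bias, for brevity)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


def mlp_projection(grid, weight):
    """Token-wise projection: each output depends only on the token at the
    same position (a single linear layer stands in for the MLP here)."""
    return [[linear(tok, weight) for tok in row] for row in grid]


def conv_projection(grid, kernels):
    """Assumed 3x3 convolution with zero padding. kernels[dr][dc] is an
    out_dim x in_dim matrix applied to the neighbor at offset (dr-1, dc-1)."""
    rows, cols = len(grid), len(grid[0])
    out_dim = len(kernels[1][1])
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            acc = [0.0] * out_dim
            for dr in range(3):
                for dc in range(3):
                    rr, cc = r + dr - 1, c + dc - 1
                    if 0 <= rr < rows and 0 <= cc < cols:  # zero padding
                        contrib = linear(grid[rr][cc], kernels[dr][dc])
                        acc = [a + b for a, b in zip(acc, contrib)]
            out_row.append(acc)
        out.append(out_row)
    return out


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def alignment_loss(projected, target):
    """REPA-style objective: negative mean cosine similarity between projected
    tokens and encoder features at matching positions."""
    sims = [cosine(p, t) for prow, trow in zip(projected, target)
            for p, t in zip(prow, trow)]
    return -sum(sims) / len(sims)


def rand_matrix(rng, out_dim, in_dim):
    return [[rng.gauss(0, 1) for _ in range(in_dim)] for _ in range(out_dim)]


rng = random.Random(0)
H, W, D_IN, D_OUT = 4, 4, 3, 2
hidden = [[[rng.gauss(0, 1) for _ in range(D_IN)] for _ in range(W)] for _ in range(H)]
target = [[[rng.gauss(0, 1) for _ in range(D_OUT)] for _ in range(W)] for _ in range(H)]
weight = rand_matrix(rng, D_OUT, D_IN)
kernels = [[rand_matrix(rng, D_OUT, D_IN) for _ in range(3)] for _ in range(3)]

# Perturb the token at (1, 2), then check whether the output at its neighbor
# (1, 1) changes under each projection.
perturbed = [[list(tok) for tok in row] for row in hidden]
perturbed[1][2] = [v + 1.0 for v in perturbed[1][2]]
mlp_changed = mlp_projection(hidden, weight)[1][1] != mlp_projection(perturbed, weight)[1][1]
conv_changed = conv_projection(hidden, kernels)[1][1] != conv_projection(perturbed, kernels)[1][1]
print(mlp_changed, conv_changed)  # False True

loss = alignment_loss(conv_projection(hidden, kernels), target)
print(loss)
```

The perturbation check shows why the swap can help: under the token-wise projection, each aligned feature ignores its neighbors, while the convolutional projection lets local spatial context shape what gets aligned to the encoder's features.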

The practical implications:

- Easy adoption in existing training pipelines

- Better generation quality without complex architectural overhauls

- New criteria for judging which encoders are worth aligning with

More Than Just Code: A Research Philosophy

The project highlights how scientific progress often comes from unexpected places, even social media debates. As Xie noted: "This wasn't just about proving someone right or wrong online. It showed how open discussion and willingness to reconsider positions can lead to real discoveries."

The paper concludes by emphasizing the importance of maintaining scientific curiosity beyond formal channels: sometimes breakthroughs begin with simple questions asked in unlikely places.

Key Points:

- Spatial structure proves more crucial than global semantics for image generation

- The iREPA framework boosts generation quality with minimal code changes

- Social media discussions can yield serious academic insights

- Research benefits from questioning established assumptions