Thinking Machines Lab Achieves 100% Consistent AI Output

Thinking Machines Lab Solves AI Randomness Challenge

In a landmark achievement for artificial intelligence research, Thinking Machines Lab has addressed one of the most persistent challenges in large language model (LLM) inference: inconsistent output for identical input. Founded by former OpenAI Chief Technology Officer Mira Murati, the lab announced the result in a recent research report.

The Problem of Non-Deterministic Output

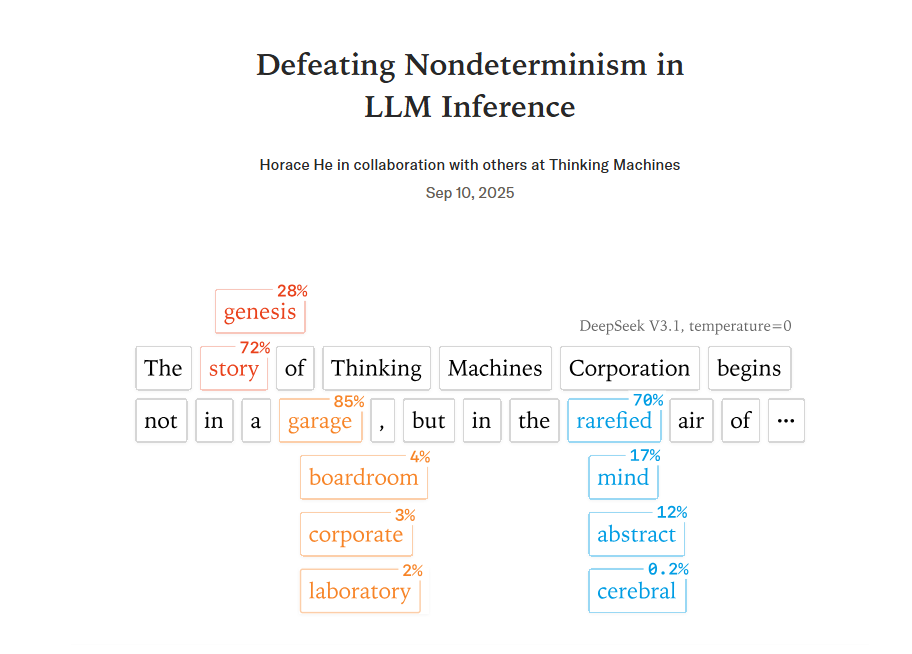

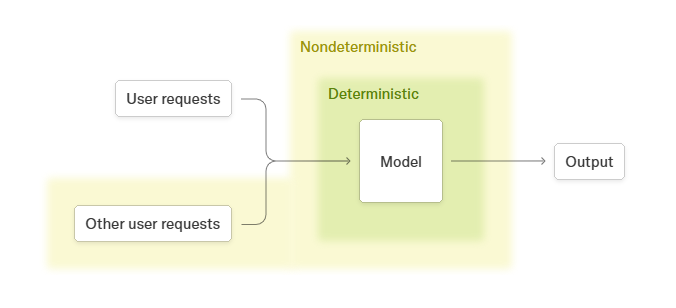

Even with the temperature set to zero, which in principle makes decoding greedy (always choosing the most likely next token) and therefore deterministic, LLM inference has struggled to produce identical outputs for identical inputs. The research report, titled "Defeating Nondeterminism in LLM Inference", identifies two primary technical causes:

- Non-associativity of floating-point addition: Floating-point addition is not associative, so (a + b) + c can differ slightly from a + (b + c). When parallel GPU computation sums the same values in a different order, the result can change in its last digits.

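- Variations in parallel computing strategy: Changes in batch size, sequence length, and KV-cache state can cause GPU kernels to choose different parallelization strategies, and therefore different summation orders. Because batch size depends on overall server load, which a user cannot control, the same request can be computed differently from one run to the next.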
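A minimal Python sketch of the first cause, and of why it matters even at temperature zero. The numbers are illustrative and not taken from the report: `greedy_pick` is a hypothetical stand-in for greedy (temperature-0) decoding, and the two-token "vocabulary" is invented. When two candidate tokens are nearly tied, a last-digit difference caused by summation order is enough to change which token is chosen, and every later token can then diverge.

```python
# Floating-point addition is not associative: grouping changes the rounding.
left = (0.1 + 0.2) + 0.3    # 0.6000000000000001
right = 0.1 + (0.2 + 0.3)   # 0.6
print(left == right)        # False

# A large magnitude can swallow a small term entirely, depending on grouping.
big = 1e20
print((big + -big) + 1.0)   # 1.0
print(big + (-big + 1.0))   # 0.0


def greedy_pick(logits):
    """Hypothetical temperature-0 (greedy) decoder: index of the largest logit.
    max() returns the first maximal index on ties."""
    return max(range(len(logits)), key=logits.__getitem__)


# Invented near-tie: token 1's logit is the "same" sum computed in two orders.
print(greedy_pick([0.6, right]))  # 0 (tie, first index wins)
print(greedy_pick([0.6, left]))   # 1
```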

The Technical Solution

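The lab developed a batch-invariant solution: implementations of core operations whose numerical result for a given request does not depend on how many other requests are batched with it. The approach ensures: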

- The same calculation order for each request, regardless of batch size

- Identical results regardless of how a sequence is split into chunks during processing

- Batch-invariant implementations of key computational modules, including RMSNorm, matrix multiplication, and attention
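The idea of batch invariance can be illustrated with a toy row reduction in Python. This is a sketch under stated assumptions, not the lab's kernels: `row_sum_split`, `batch_dependent_row_sums`, and `batch_invariant_row_sums` are hypothetical names, and the rule "split the reduction when the batch has fewer than four rows" is an invented stand-in for the heuristics real GPU kernels use to keep hardware busy. The row values are chosen so that regrouping the additions visibly changes the result.

```python
def row_sum_split(row, num_splits):
    """Reduce one row by cutting it into num_splits contiguous pieces,
    summing each piece left to right, then summing the partial results."""
    n = len(row)
    bounds = [n * i // num_splits for i in range(num_splits + 1)]
    partials = []
    for lo, hi in zip(bounds, bounds[1:]):
        acc = 0.0
        for v in row[lo:hi]:
            acc += v
        partials.append(acc)
    total = 0.0
    for p in partials:
        total += p
    return total


def batch_dependent_row_sums(batch):
    # Hypothetical heuristic: with few rows, split each row's reduction in two
    # to keep more (imaginary) cores busy; with many rows, don't split.
    num_splits = 2 if len(batch) < 4 else 1
    return [row_sum_split(row, num_splits) for row in batch]


def batch_invariant_row_sums(batch):
    # One fixed reduction strategy for every row, whatever the batch size.
    return [row_sum_split(row, 1) for row in batch]


row = [1.0, 1e20, -1e20, 1.0]
print(batch_dependent_row_sums([row])[0])      # 0.0 (batch of 1: split reduction)
print(batch_dependent_row_sums([row] * 8)[0])  # 1.0 (batch of 8: single pass)
print(batch_invariant_row_sums([row])[0])      # 1.0
print(batch_invariant_row_sums([row] * 8)[0])  # 1.0
```

The batch-invariant version gives up the freedom to re-split work for small batches, which can cost some performance, in exchange for results that do not depend on what else is running on the server.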

The team validated the approach using the Qwen3-235B-A22B-Instruct-2507 model (235 billion parameters). Across 1,000 repeated runs of the same prompt at temperature zero, every completion was identical, a 100% output consistency rate.
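A consistency test of this kind can be sketched as a small harness that repeats one prompt and counts distinct outputs. The `generate` callable and both toy generators below are hypothetical placeholders for a real inference endpoint; `load_dependent_generate` simulates a server whose answer depends on a batch size set by other traffic.

```python
import itertools
from collections import Counter
from typing import Callable


def count_unique_completions(generate: Callable[[str], str], prompt: str,
                             runs: int = 1000) -> Counter:
    """Call generate(prompt) `runs` times and tally each distinct output."""
    return Counter(generate(prompt) for _ in range(runs))


def deterministic_generate(prompt: str) -> str:
    # Hypothetical stand-in for a batch-invariant server: same input, same output.
    return prompt + " -> answer A"


# Hypothetical stand-in for server load: batch sizes set by other traffic.
_simulated_batch_sizes = itertools.cycle([1, 8, 3, 16])


def load_dependent_generate(prompt: str) -> str:
    # Output flips depending on the (simulated) batch size of each call.
    batch_size = next(_simulated_batch_sizes)
    return prompt + (" -> answer A" if batch_size < 4 else " -> answer B")


stable = count_unique_completions(deterministic_generate, "same prompt")
flaky = count_unique_completions(load_dependent_generate, "same prompt")
print(len(stable), len(flaky))  # 1 2
```

With a real model, `generate` would wrap a temperature-0 request to the serving stack under test; exactly one distinct completion out of 1,000 corresponds to the 100% consistency reported above.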

Industry Impact

This breakthrough carries significant implications for:

- Financial risk assessment systems requiring absolute consistency

- Medical diagnosis applications where reliability is critical

- Legal document analysis needing predictable outputs

The lab has made its findings publicly available, providing valuable insights for AI developers worldwide.

About Thinking Machines Lab

Established in 2025, the lab raised $2 billion in seed funding and focuses on foundational AI technologies. It plans to launch its first commercial product in the coming months.

The achievement reflects a broader industry shift from pursuing sheer model scale toward prioritizing application quality and reliability.

The full research report is available at: https://thinkingmachines.ai/blog/defeating-nondeterminism-in-llm-inference/

Key Points:

- Solved persistent issue of LLM output randomness

- Identified two primary technical causes

- Developed batch-invariant solution

- Achieved 100% consistency in testing

- Significant implications for enterprise applications