Tencent's New Robot Brain Outperforms Rivals in Key Tests

Tencent's Robotics Breakthrough: When AI Gets Physical

Tech giant Tencent just gave robots a serious brain upgrade. Its new HY-Embodied-0.5 model isn't another theoretical AI experiment: it's built specifically to help machines understand and navigate the messy, unpredictable real world.

Why This Matters

Most AI today excels at digital tasks but falters when faced with physical environments. Ever watched a robot struggle to pick up a glass without spilling? That's the gap Tencent's team aims to close. "Current models see the world like a 2D photograph," explains lead researcher Dr. Wei Zhang. "We're giving them true 3D understanding and the ability to learn from physical interactions."

The Technical Edge

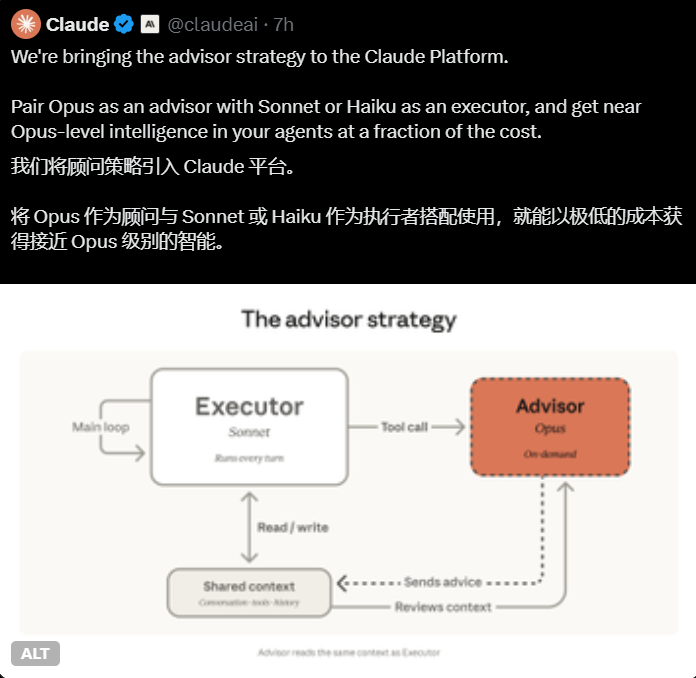

What sets this system apart is its dual-architecture approach:

- MoT-2B (4B total parameters, roughly 2B active at a time, hence the name): The "fast thinker" optimized for real-time responses

- MoE-32B (407B total parameters, roughly 32B active at a time): The heavyweight for complex reasoning tasks
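To make the fast-versus-heavy split concrete, here is a minimal sketch of how a robot controller might decide which of the two models to call. The article does not describe how Tencent routes tasks, so everything here is an assumption for illustration: the `Task` fields, the `choose_model` function, and the 100 ms threshold are all hypothetical. Only the two model names come from the announcement.

```python
from dataclasses import dataclass

# Model labels as named in the article. The routing rule below is an
# illustrative assumption, not Tencent's published design.
FAST_MODEL = "MoT-2B"
HEAVY_MODEL = "MoE-32B"


@dataclass
class Task:
    description: str
    latency_budget_ms: float         # how quickly the robot needs an answer
    needs_multistep_reasoning: bool  # e.g. planning a long sequence of actions


def choose_model(task: Task, realtime_threshold_ms: float = 100.0) -> str:
    """Return the label of the model to call for this task (hypothetical rule)."""
    # A tight deadline always wins: a slow answer is useless mid-grasp.
    if task.latency_budget_ms <= realtime_threshold_ms:
        return FAST_MODEL
    # With time to spare, send complex reasoning to the larger model.
    if task.needs_multistep_reasoning:
        return HEAVY_MODEL
    # Simple tasks stay on the smaller model even without time pressure.
    return FAST_MODEL


if __name__ == "__main__":
    tasks = [
        Task("adjust grip on a slipping glass", 50.0, False),
        Task("plan how to restack a pallet of boxes", 5000.0, True),
    ]
    for task in tasks:
        print(f"{task.description}: {choose_model(task)}")
```

In this sketch the deadline takes priority over task complexity, which is one plausible design choice among several; a real system might instead run both models in parallel or let the larger model set goals that the smaller one executes.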

Rather than retrofitting existing AI, engineers rebuilt everything from the ground up. Their secret sauce? A novel training method using over 100 million real-world interaction scenarios - everything from stacking boxes to handling delicate objects.

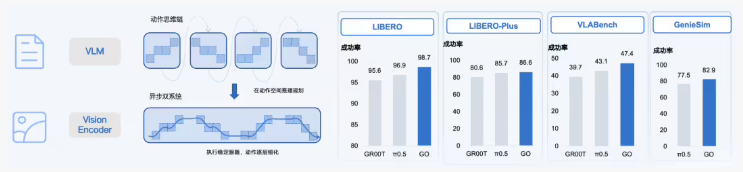

Performance That Impresses

In competitive testing, the smaller MoT-2B model outperformed rivals like Qwen3-VL-4B in 16 out of 22 categories. The flagship version went toe-to-toe with Google's Gemini 3.0 Pro in overall capability. Practical demonstrations showed robots completing warehouse-style tasks with 30% fewer errors than current systems.

What's Next?

Early applications focus on industrial settings, but the implications stretch further. "This isn't just about better factory robots," notes robotics analyst Lisa Chen. "That same spatial intelligence could power everything from home assistants that actually tidy your room to search-and-rescue bots that navigate disaster zones."

Tencent plans to license the technology while continuing development. The next version, already in testing, aims to cut response times by another 40%.

Key Points:

- Built from the ground up specifically for physical-world interaction

- Smaller MoT-2B model outperformed rivals in 16 of 22 benchmark categories (about 73%)

- Two specialized architectures handle different task types

- Training uses massive dataset of real-world interactions

- Could enable major advances in service and industrial robotics