Tencent's New OCR Model Breaks Records While Staying Lean

In an industry where bigger often means better, Tencent's Hunyuan research team took a different approach. Their newly open-sourced OCR (optical character recognition) model packs state-of-the-art performance into just 1 billion parameters, which is modest by today's AI standards.

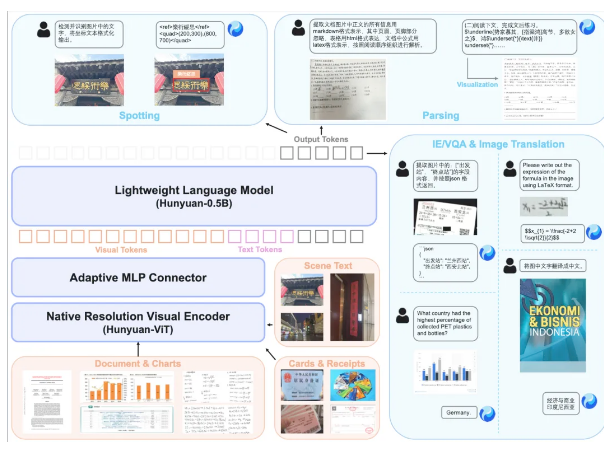

"What makes HunyuanOCR special isn't its size, but how much we've optimized its architecture," the technical documentation explains. The model combines three components: a visual encoder that works on images at their original resolution, an adaptive visual processor, and Tencent's efficient language model.

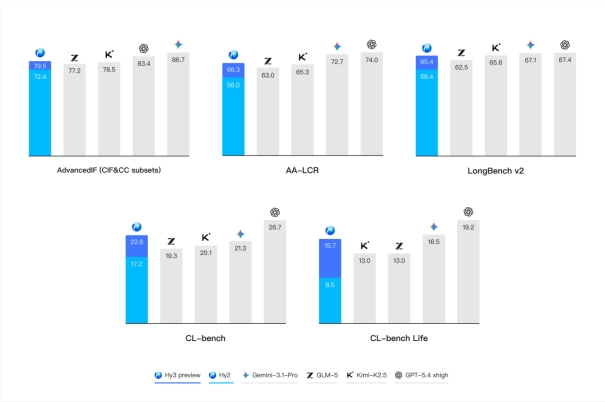

Performance That Surprises

The numbers tell an impressive story. On OmniDocBench's challenging document parsing test, HunyuanOCR scored 94.1 points, edging out Google's much larger Gemini 3 Pro. It also performed strongly across nine real-world scenarios, including:

- Handwritten note transcription

- Street sign recognition

- Complex document analysis

Perhaps most remarkably, it led the small-model category (under 3B parameters) on OCRBench with a score of 860, roughly matching the accuracy of models three times its size.

More Than Just Text Reading

The model isn't limited to recognizing characters. It can:

- Extract data from tickets and forms directly into JSON format

- Pull bilingual subtitles automatically from videos

- Translate between Chinese/English and 14 less common languages

This multilingual capability recently earned it top honors in the ICDAR 2025 document translation competition.
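To give a sense of what working with the JSON extraction feature might look like downstream, here is a minimal Python sketch. It does not call the model. It only shows one way to pull a JSON object out of a text reply and check that the expected fields are present. The field names (`passenger`, `date`, `amount`), the helper `parse_extraction`, and the sample reply are all hypothetical, since the announcement does not describe the model's actual output schema.

```python
import json
import re

# Hypothetical field names for a ticket extraction; the real output schema
# is not specified in the announcement.
REQUIRED_FIELDS = ("passenger", "date", "amount")


def parse_extraction(reply: str) -> dict:
    """Pull a single JSON object out of a model reply and check its fields."""
    # A reply may wrap the JSON in a code fence or surrounding text, so take
    # the span from the first "{" to the last "}" instead of the whole string.
    match = re.search(r"\{.*\}", reply, flags=re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found in reply")
    data = json.loads(match.group(0))
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data


# Made-up reply text standing in for real model output.
sample_reply = (
    "Here is the result:\n"
    '{"passenger": "Li Hua", "date": "2025-11-20", "amount": "553.5"}'
)
ticket = parse_extraction(sample_reply)
print(ticket["amount"])
```

Validating the fields up front means a malformed or incomplete reply fails loudly at the parsing step rather than surfacing later as a missing key.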

Where You'll Find It Working Already

While the technology sounds futuristic, it's already handling practical jobs:

- Processing government ID documents

- Assisting video creators with automatic captioning

- Facilitating cross-border business communications

The team designed HunyuanOCR specifically for easy implementation. "Unlike complex systems requiring multiple processing steps," notes one developer, "this gives you clean results in one pass."

The model is now available through GitHub and Hugging Face, with demo versions accessible directly through web browsers.

Key Points:

- Compact Powerhouse: At just 1B parameters, outperforms larger competitors

- Real-World Ready: Excels at documents, handwriting, street signs and more

- Multilingual Master: Translates between Chinese/English and 14 less common languages, 16 languages in total

- Easy Integration: Simplified architecture means faster deployment