Tencent's New AI Model Gives Robots Human-Like Spatial Awareness

Tencent Breakthrough Brings Robots Closer to Human-Like Understanding

In a significant leap for robotics, Tencent's research teams have developed an AI model that gives machines something humans take for granted: an intuitive understanding of physical space. Their new HY-Embodied-0.5 system represents more than just another algorithm update; it's a fundamental rethinking of how artificial intelligence interacts with the three-dimensional world.

Why This Matters

Most AI vision systems today are like tourists reading a foreign city map: they recognize landmarks but struggle with depth and spatial relationships. Tencent's solution acts more like a local resident, instinctively knowing how objects relate in space and how to manipulate them. This capability gap has long prevented AI from moving beyond screens into practical robotics applications.

"Typical vision-language models are great at identifying objects in photos," explains a Tencent researcher, "but ask them to guide a robot's hand to pick up and organize those objects, and they falter. Our new architecture changes that equation."

Under the Hood

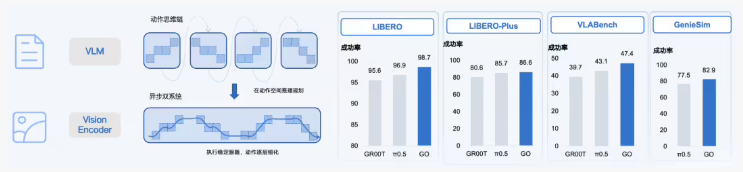

The team didn't just tweak existing models - they built from the ground up with two specialized versions:

- MoT-2B: A lean, efficient model (4B total parameters; the "2B" in the name presumably counts the parameters active for any one token) designed for real-time response on edge devices

- MoE-32B: A powerhouse variant (407B total parameters) offering stronger reasoning for complex tasks

Key innovations include a novel hybrid Transformer architecture that prevents the "catastrophic forgetting" problem common in multimodal training, plus advanced visual encoding techniques that maintain fine detail crucial for physical interaction.
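The article does not describe the internals of the hybrid architecture, so the following is only a toy sketch of one way a model can hold more parameters than it uses per token. It assumes, hypothetically, that vision and text tokens are routed to separate weight branches, which would also keep training on one modality from overwriting the weights of the other. The names `Branch`, `ModalityRoutedLayer`, and the tiny dimensions are illustrative inventions, not Tencent's implementation.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Branch:
    """Hypothetical modality-specific feed-forward branch (toy scale)."""
    name: str
    weights: List[List[float]]  # square dim x dim matrix

    def param_count(self) -> int:
        return sum(len(row) for row in self.weights)

    def forward(self, x: List[float]) -> List[float]:
        # Plain matrix-vector product.
        return [sum(w * v for w, v in zip(row, x)) for row in self.weights]


def make_branch(name: str, dim: int, scale: float) -> Branch:
    # Deterministic toy weights: a scaled identity matrix.
    weights = [[scale if i == j else 0.0 for j in range(dim)] for i in range(dim)]
    return Branch(name, weights)


class ModalityRoutedLayer:
    """Assumed design: each token only touches the branch for its modality."""

    def __init__(self, dim: int) -> None:
        self.branches: Dict[str, Branch] = {
            "vision": make_branch("vision", dim, 2.0),
            "text": make_branch("text", dim, 0.5),
        }

    def total_params(self) -> int:
        return sum(b.param_count() for b in self.branches.values())

    def active_params(self, modality: str) -> int:
        return self.branches[modality].param_count()

    def forward(self, token: List[float], modality: str) -> List[float]:
        return self.branches[modality].forward(token)


layer = ModalityRoutedLayer(dim=4)
print("total parameters:", layer.total_params())
print("active per vision token:", layer.active_params("vision"))
print("vision output:", layer.forward([1.0, 2.0, 3.0, 4.0], "vision"))
print("text output:", layer.forward([1.0, 2.0, 3.0, 4.0], "text"))
```

In this toy layer each token uses half of the stored weights, which mirrors the gap between a "2B" name and a 4B total. Whether HY-Embodied-0.5 splits its parameters this way is an assumption, not something the article confirms.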

Performance That Speaks Volumes

Independent testing shows remarkable results:

- Outperformed similar-sized models on 16 of 22 benchmark tests

- Matched or exceeded the capabilities of industry leaders such as Gemini 3.0 Pro

- Demonstrated superior performance in practical robot control scenarios

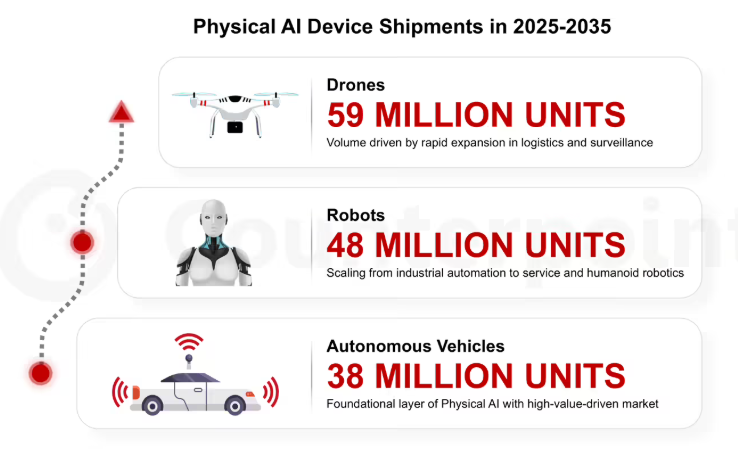

In warehouse simulations, robots using HY-Embodied-0.5 made 30% fewer errors when stacking irregular objects than robots running standard systems. The implications extend far beyond lab environments. Imagine home assistants that can actually tidy your kitchen, or manufacturing robots that adapt to unpredictable item placements.

The Road Ahead

While still in its early stages (the 0.5 version number suggests more to come), this technology represents a crucial step toward truly embodied AI. As Tencent continues refining the system, we may soon see robots that don't just "see" the world, but understand and interact with it in ways that finally approach human fluidity.

Key Points

- Specialized architecture overcomes limitations of general vision models

- Two configurations balance speed and power for different applications

- Outperforms similar-sized models on 16 of 22 benchmarks

- Practical applications range from logistics to domestic robotics

- Future versions expected to further close gap with human spatial reasoning